How Voice Call APIs Power Creator Platforms


Voice call APIs are changing how creators interact with their audiences. These tools enable real-time engagement through live calls, interactive streams, and instant chats, creating more personal connections. They also offer advanced features like tone and sentiment analysis, AI-powered interactions, and multilingual support, making platforms accessible to a global audience.

Key takeaways:

Real-time engagement: Fans can join live voice conversations, adding depth beyond text chats.

Privacy tools: Number masking and admin controls protect creators and fans.

Accessibility: Speech-to-text (STT) and text-to-speech (TTS) improve usability for people with disabilities.

Monetization: Creators can earn through premium calls, AI avatars, and dynamic ads.

AI Twins: Platforms like TwinTone automate engagement and content creation.

Voice call APIs also address technical challenges like latency, ensuring smooth conversations, while maintaining data privacy and scalability for high-traffic events. These tools are reshaping creator platforms, offering new ways to connect and generate income.

Benefits of Voice Call APIs for Creator Platforms

Real-Time Creator-Fan Interactions

Voice call APIs bring a new dimension to creator-fan engagement by enabling live, voice-based conversations during streams. Instead of relying solely on text chats, fans can participate in real-time discussions where tone, timing, and even pauses add depth to the interaction. These nuances create a more personal and meaningful connection that text simply can't replicate.

"Voice adds intonation, timing, and even silence – important cues that help you better navigate a conversation." - Donal Toomey and Paul Heath, Twilions

To ensure privacy, these APIs use number masking, which assigns temporary proxy numbers so creators and fans can communicate without sharing personal phone information. Creators also have tools to maintain control - admin panels let them answer, mute, or end calls, authenticate callers, and even block users if necessary. For global audiences, APIs provide local phone numbers in multiple countries, making it affordable for fans to join without worrying about hefty international call charges.
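The number-masking idea can be illustrated with a small in-memory sketch. It is not any provider's implementation: the `ProxyPool` class, its method names, and the phone numbers are hypothetical, and a real masking service would also handle session expiry and persistence. The point is only that each side dials a temporary proxy number and the platform looks up the real destination.

```python
class ProxyPool:
    """Hypothetical in-memory stand-in for a number-masking service."""

    def __init__(self, proxy_numbers):
        self._free = list(proxy_numbers)   # temporary numbers not in use
        self._routes = {}                  # (proxy, caller) -> real number to dial

    def open_session(self, creator, fan):
        """Reserve one proxy number for a creator-fan pair."""
        if not self._free:
            raise RuntimeError("no proxy numbers available")
        proxy = self._free.pop()
        # Each party reaches the other through the same proxy number,
        # so neither ever sees the other's real number.
        self._routes[(proxy, fan)] = creator
        self._routes[(proxy, creator)] = fan
        return proxy

    def route(self, proxy, caller):
        """Return the real number to connect, or None for unknown callers."""
        return self._routes.get((proxy, caller))

    def close_session(self, proxy):
        """Drop the mapping and return the proxy number to the pool."""
        for key in [k for k in self._routes if k[0] == proxy]:
            del self._routes[key]
        self._free.append(proxy)
```

A caller who is not part of the session gets `None` from `route`, which is where a platform could reject the call or play a message.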

These features not only enhance interaction but also pave the way for broader accessibility improvements.

Accessibility and Inclusivity Features

Voice APIs are breaking down barriers, making creator platforms more accessible to everyone. For fans who are deaf or hard of hearing, speech-to-text (STT) technology provides real-time transcriptions and captions during live calls. On the flip side, text-to-speech (TTS) converts written messages into natural-sounding audio, helping users with visual impairments or those who prefer listening to content.

"Speech recognition APIs can make the web and apps more accessible for people with disabilities." - Stream

Support for multiple languages and accents further expands accessibility. Modern APIs offer TTS and STT in various languages, enabling creators to connect with fans worldwide in their native tongues. Voice commands add another layer of inclusivity, allowing users with motor impairments to navigate streams or send virtual gifts using just their voice. With 62% of Americans already using voice assistants, these features align with how people naturally interact with technology.

In addition to accessibility, voice APIs open up exciting ways for creators to boost their earnings.

Monetization Opportunities

Voice call APIs aren't just about engagement - they also unlock fresh revenue streams for creators. By offering premium call-in access or pairing voice services with AI avatars, creators can host personalized consultations or virtual events. Companies that use these tools to improve customer experience report revenue increases of 10–15%. These AI-driven features also let creators stay connected with their audience 24/7 while maintaining secure interactions.

Secure payment processing is another standout feature. Leading voice API solutions meet PCI DSS Level 1 compliance standards, ensuring financial transactions during voice calls are handled safely. Platforms can also monetize directly by incorporating dynamic audio ads or sponsored messages into live call streams, adding another layer of income potential.

"A voice API can transform your business... every touchpoint from inbound sales to follow-ups can be fully automated by a friendly, empathetic, and helpful AI." - Noah Kravitz, Chief Business Officer, Bland AI

Voice APIs are proving to be a game-changer, offering creators tools not just to connect but also to thrive financially in today's digital landscape.

How Voice Call APIs Work in Creator Platforms

API Integration Steps

To integrate a voice call API, the process starts with obtaining API keys and secrets from providers such as Twilio or OpenAI. For browser-based or mobile applications, it's crucial to generate short-lived AccessTokens (JWTs) on a secure backend server. This approach avoids exposing master API keys directly in the code, ensuring better security.
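To make the short-lived token idea concrete, here is a minimal sketch of minting and checking a generic HS256 JWT with only the Python standard library. It is not Twilio's AccessToken format, which carries provider-specific grants and is normally produced by the provider's helper library; the function names, the `sub` claim, and the 300-second lifetime are assumptions chosen for illustration.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url(data: bytes) -> str:
    """Base64url-encode without padding, as JWTs require."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def mint_short_lived_token(secret: str, identity: str,
                           ttl_seconds: int = 300,
                           now: Optional[float] = None) -> str:
    """Sign a token on the backend; the secret never reaches the client."""
    issued_at = int(time.time() if now is None else now)
    header = {"alg": "HS256", "typ": "JWT"}
    claims = {"sub": identity, "iat": issued_at, "exp": issued_at + ttl_seconds}
    signing_input = ".".join(
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )
    signature = hmac.new(secret.encode("utf-8"),
                         signing_input.encode("ascii"),
                         hashlib.sha256).digest()
    return f"{signing_input}.{_b64url(signature)}"


def verify_token(secret: str, token: str,
                 now: Optional[float] = None) -> Optional[dict]:
    """Return the claims if the signature is valid and the token is unexpired."""
    try:
        header_b64, claims_b64, sig_b64 = token.split(".")
    except ValueError:
        return None
    expected = hmac.new(secret.encode("utf-8"),
                        f"{header_b64}.{claims_b64}".encode("ascii"),
                        hashlib.sha256).digest()
    if not hmac.compare_digest(_b64url(expected).encode("ascii"),
                               sig_b64.encode("utf-8")):
        return None
    padded = claims_b64 + "=" * (-len(claims_b64) % 4)
    claims = json.loads(base64.urlsafe_b64decode(padded))
    current = time.time() if now is None else now
    return claims if current < claims["exp"] else None
```

Because the token expires after a few minutes, a leaked copy is far less useful to an attacker than a leaked master API key.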

"AccessTokens serve as the credentials for your end users, and are the key piece that connects your SDK-powered application, Twilio, and your server-side application." - Twilio Documentation

The next step involves setting up a backend server, often using tools like Node.js or Express. This server manages webhooks, handles session configurations, and acts as a bridge, relaying audio data between the phone network and AI models.

For real-time media streaming, platforms typically use WebRTC or WebSockets to transfer raw audio with minimal delay. WebRTC is the preferred technology for browser-based applications due to its lower latency and more consistent performance compared to WebSockets. A 2021 demonstration highlighted how WebRTC enables seamless integration of live phone calls into streaming platforms with proper media stream configurations.

"When connecting to a Realtime model from the client (like a web browser or mobile device), we recommend using WebRTC rather than WebSockets for more consistent performance." - OpenAI Documentation

In October 2024, Twilio developers Phil Bredeson and Al Kiramoto demonstrated a streamlined integration between Twilio Voice and OpenAI's Realtime API. They used TypeScript and Express to build a server that proxied raw audio from Twilio Media Streams to OpenAI's WebSocket-based API, enabling real-time AI-powered voice interactions. This setup required environment variables like OPENAI_API_KEY and a HOSTNAME configured through ngrok for local testing.
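The core of such a proxy is translating messages between the two WebSocket connections. The sketch below shows only that translation step as pure functions, not the demo's actual TypeScript code. The JSON field and event names (`media.payload`, `input_audio_buffer.append`, and the audio delta events) are assumptions based on the publicly documented message shapes and have changed between API versions, so check both providers' current references. It also assumes both sides are configured for the same audio encoding, so no transcoding is needed.

```python
import json
from typing import Optional

# Assumption: the audio delta event name has differed across Realtime API versions.
AUDIO_DELTA_EVENTS = {"response.audio.delta", "response.output_audio.delta"}


def twilio_to_realtime(twilio_message: str) -> Optional[str]:
    """Turn a Media Streams 'media' frame into an audio-append event.

    Returns None for frames that carry no caller audio (start, stop, mark).
    """
    msg = json.loads(twilio_message)
    if msg.get("event") != "media":
        return None
    return json.dumps({
        "type": "input_audio_buffer.append",
        "audio": msg["media"]["payload"],   # base64 audio, passed through as-is
    })


def realtime_to_twilio(realtime_message: str, stream_sid: str) -> Optional[str]:
    """Turn a model audio delta into a frame the phone leg can play."""
    event = json.loads(realtime_message)
    if event.get("type") not in AUDIO_DELTA_EVENTS:
        return None
    return json.dumps({
        "event": "media",
        "streamSid": stream_sid,
        "media": {"payload": event["delta"]},
    })
```

In a full server, each function would sit inside the receive loop of one WebSocket and forward its non-`None` results to the other.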

Once the integration is complete, platforms can leverage AI to create more engaging and dynamic conversational experiences.

AI for Personalized Conversations

AI takes voice calls to the next level, transforming them into personalized, interactive experiences. The process begins with speech-to-text (STT) and natural language processing (NLP). Tools like Twilio's <Gather> verb and services like Deepgram transcribe user speech into text, which is then processed by advanced language models, such as OpenAI's GPT-4o, to understand the context and intent behind the conversation. To maintain a seamless flow, developers often store recent dialogue (usually the last 20 messages) using cookies or session data, enabling context-aware responses.
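The rolling window of recent dialogue can be sketched with a bounded queue. The class name, the message dictionary shape, and the system prompt are illustrative assumptions; only the 20-message limit comes from the text above, and where the history is persisted (cookies, session store) is left out.

```python
from collections import deque

MAX_MESSAGES = 20  # "the last 20 messages" from the text above


class ConversationMemory:
    """Keeps only the most recent messages of one call."""

    def __init__(self, max_messages: int = MAX_MESSAGES):
        # A deque with maxlen silently drops the oldest entry when full.
        self._messages = deque(maxlen=max_messages)

    def add(self, role: str, content: str) -> None:
        self._messages.append({"role": role, "content": content})

    def as_prompt(self, system_prompt: str) -> list:
        """System instructions first, then the retained history in order."""
        return [{"role": "system", "content": system_prompt}, *self._messages]
```

Capping the history keeps the prompt size, and therefore the model's response time, roughly constant over a long call.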

"Using ChatGPT to power an interactive voice chatbot is not just a novelty, it can be a way to get useful business intelligence information while reserving dedicated, expensive, and single-threaded human agents for conversations that only humans can help with." - Michael Carpenter, Product Manager for Programmable Voice, Twilio

After the AI generates a suitable response, neural text-to-speech (TTS) engines like Amazon Polly convert the text into lifelike audio, ensuring the voice aligns with a creator's brand identity. For platforms looking to add a visual element, tools like HeyGen can create photorealistic digital avatars that mimic human expressions and maintain eye contact during voice interactions.

"A photorealistic AI agent keeps eye contact and displays facial expressions to add warmth and emotional presence to a conversation." - Donal Toomey and Paul Heath, Twilio

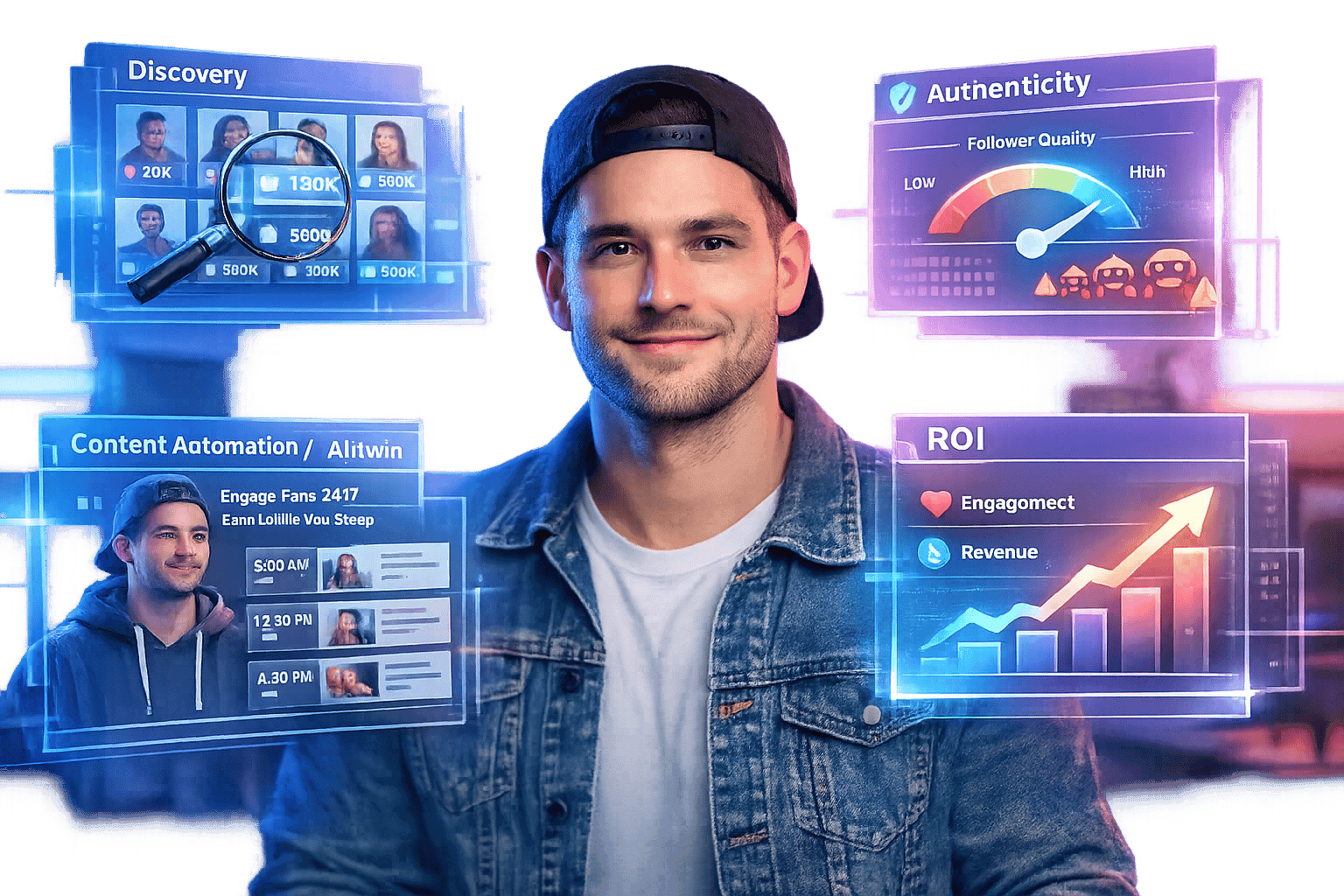

TwinTone's Role in Creator Communication

AI Twins for Round-the-Clock Creator Access

TwinTone transforms real creators into AI-powered Twins capable of managing fan interactions, conducting product demos, and hosting live shopping sessions - all without human intervention. By bridging PSTN and WebSocket media streams, the platform processes raw audio in real time, enabling AI to respond naturally and seamlessly.

Unlike traditional IVR systems with rigid menus, TwinTone's AI Twins use advanced conversational AI powered by large language models (LLMs). This allows them to handle complex, multi-step conversations and respond to unexpected questions with ease. This feature addresses a common frustration: 63% of consumers report dissatisfaction with long wait times to speak with a representative. By being available 24/7, AI Twins eliminate these delays entirely while maintaining the creator's distinct tone and personality.

The platform operates with low latencies - down to 600 ms. It also employs Branded Calling to display the creator's name and logo during calls, which is crucial since 87% of consumers are wary of answering unidentified calls. Additionally, real-time function calling enables AI Twins to book demos, process payments, and update social commerce records instantly.

These features make TwinTone a powerful tool for scaling user-generated content (UGC) and automating social commerce.

Automating UGC Creation and Social Commerce

TwinTone streamlines creator-led marketing by integrating voice call APIs with its content generation engine. When fans call, the AI Twin uses speech-to-text (STT) and natural language processing (NLP) to guide them to shoppable content or product demos. This removes the need for manual outreach while instantly generating UGC videos.

Through Streaming RAG, AI Twins pull accurate details from the creator's product catalog and content library. These voice interactions also collect valuable first-party data - like product preferences, purchase intent, and demographics - which is then integrated into customer engagement platforms. TwinTone's analytics dashboard provides real-time tracking across platforms like TikTok, Shopify, Amazon, YouTube, and Meta. Notably, 80% of global consumers express satisfaction with results from AI tools.


Challenges and Best Practices for Voice Call API Implementation

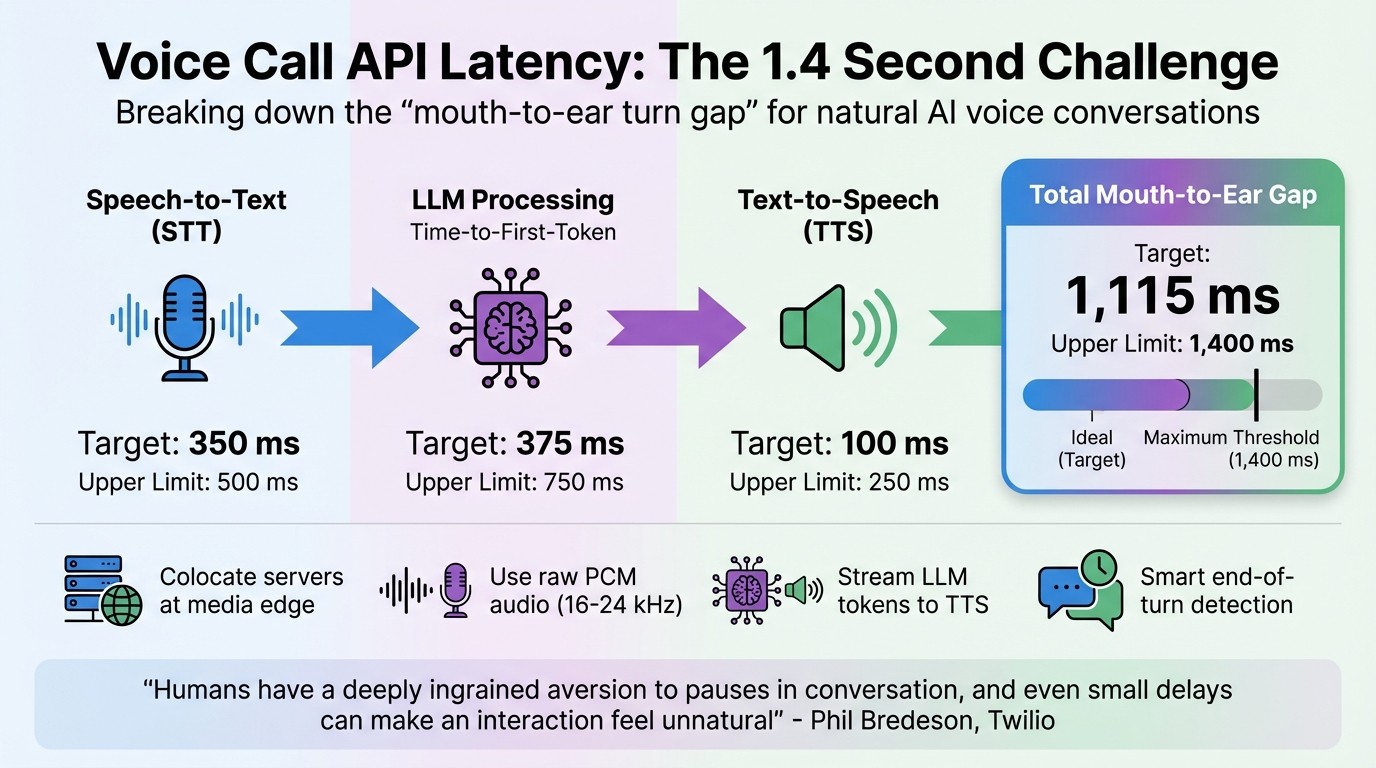

Voice Call API Latency Breakdown: Achieving Natural Conversation Flow

Managing Latency and Real-Time Performance

Voice call APIs open up exciting opportunities for creator-fan engagement, but keeping conversations smooth and natural requires tackling some tough technical challenges. One of the biggest hurdles? Latency. Humans are incredibly sensitive to conversational pauses. Research shows that the "mouth-to-ear turn gap" - the time between a caller finishing a sentence and hearing the start of the reply - should ideally be around 1,115 milliseconds, with an upper limit of 1,400 milliseconds before things start to feel awkward. This total covers audio transmission plus speech-to-text (STT) processing, the language model's (LLM) time to first token, and text-to-speech (TTS) synthesis.

Here’s how the latency goals break down:

| Component | Target Latency | Upper Limit |
|---|---|---|
| Speech-to-Text (STT) | 350 ms | 500 ms |
| LLM Time-to-First-Token | 375 ms | 750 ms |
| Text-to-Speech (TTS) | 100 ms | 250 ms |
| Total Mouth-to-Ear Gap | 1,115 ms | 1,400 ms |
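A quick arithmetic check of the table shows how much of the budget is left for everything that is not STT, LLM, or TTS. The sketch assumes the total row covers those three stages plus audio transmission and buffering; the constant and function names are illustrative.

```python
# (target_ms, upper_limit_ms) per processing stage, copied from the table.
STAGE_BUDGET_MS = {
    "stt": (350, 500),
    "llm_time_to_first_token": (375, 750),
    "tts": (100, 250),
}
TOTAL_BUDGET_MS = (1115, 1400)


def transport_headroom_ms():
    """Milliseconds left for network and buffering at target and at upper limit."""
    target_sum = sum(target for target, _ in STAGE_BUDGET_MS.values())
    limit_sum = sum(limit for _, limit in STAGE_BUDGET_MS.values())
    return TOTAL_BUDGET_MS[0] - target_sum, TOTAL_BUDGET_MS[1] - limit_sum


def within_total_limit(measured_ms: dict) -> bool:
    """True if the measured stage and transport times fit under the upper limit."""
    return sum(measured_ms.values()) <= TOTAL_BUDGET_MS[1]
```

At the target values the three stages add up to 825 ms, leaving 290 ms for transport. At the upper limits they add up to 1,500 ms, which already exceeds the 1,400 ms total, so the per-stage limits cannot all be reached in the same turn.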

As Phil Bredeson from Twilio explains:

"Humans have a deeply ingrained aversion to pauses in conversation, and even small delays can make an interaction feel unnatural."

To hit these latency targets, you’ll need to optimize your setup. Start by colocating orchestration servers, STT, and TTS models at the media edge location closest to your users. Use raw PCM audio with matching sampling rates (16 kHz for STT and 24/48 kHz for TTS) to avoid delays caused by resampling. Stream text tokens from your LLM to the TTS engine as they’re generated instead of waiting for the full response. Additionally, rely on end-of-turn detection that uses prosody and semantics, rather than simple silence timers, to keep conversations flowing naturally. If a fan interrupts, clear outgoing audio buffers and cancel LLM streams immediately to avoid awkward "stutter" effects.
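Streaming tokens to the TTS engine usually means forwarding text in small speakable chunks rather than one token at a time or one full reply. The generator below is a minimal sketch of that idea, assuming plain string tokens and simple sentence-ending punctuation as the flush rule; the function name and the 120-character fallback are illustrative choices, and a production system would use its TTS vendor's streaming interface.

```python
from typing import Iterable, Iterator

SENTENCE_END = (".", "!", "?")


def chunk_for_tts(tokens: Iterable[str], max_chars: int = 120) -> Iterator[str]:
    """Yield speakable chunks as soon as a sentence ends or the buffer grows long."""
    buffer = ""
    for token in tokens:
        buffer += token
        if buffer.rstrip().endswith(SENTENCE_END) or len(buffer) >= max_chars:
            text = buffer.strip()
            if text:
                yield text      # synthesis can start while the LLM keeps generating
            buffer = ""
    if buffer.strip():
        yield buffer.strip()    # flush whatever is left when the stream ends
```

Because this is a generator, the first sentence is available to the TTS engine before the model has finished producing the rest. If the caller interrupts, the consumer can simply stop iterating and discard any chunks it has queued.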

With latency under control, the next step is safeguarding user data during these interactions.

Data Privacy and Security

When it comes to voice interactions, protecting user data is non-negotiable. Start with secure communication protocols like HTTPS for all REST API requests. In production, replace Account SIDs and Auth Tokens with API Keys and Secrets for better access control. For web and mobile apps, authenticate devices using short-lived Access Tokens, and have the client refresh each token automatically shortly before it expires - usually about 30 seconds ahead.
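The refresh timing can be expressed as a one-line calculation. This is a sketch with hypothetical names; the 30-second lead is the figure from the paragraph above, and the timestamps are assumed to be Unix seconds.

```python
def seconds_until_refresh(expires_at: float, now: float,
                          lead_seconds: float = 30.0) -> float:
    """How long the client should wait before requesting a new token.

    Returns 0.0 when the refresh point has already passed, meaning the
    client should fetch a fresh token immediately.
    """
    return max(0.0, expires_at - lead_seconds - now)
```

A client would schedule a timer for this many seconds after receiving each token, then call the backend's token endpoint again.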

To further protect privacy, use subaccount architectures to isolate data and prevent cross-account exposure. For gating access to sensitive creator-fan interaction rooms, verification services like one-time passcodes (OTP) via SMS or voice can be highly effective. If you’re using WebRTC for browser-based calls, configure IP handling settings to avoid exposing private local IP addresses. For AI-powered interactions, route audio through a secure backend server instead of connecting directly to third-party AI APIs on the client side.

These measures not only protect user data but also lay the groundwork for handling high-traffic situations without compromising security.

Scaling for High Traffic During Events

High-demand events - like product launches or live shopping sessions - can push your system to its limits. On Twilio, for example, most accounts default to 1 Call Per Second (CPS) for outbound calls, and the standard cap for concurrent participants is 5,000 across all conferences. If these limits are exceeded, you’ll see HTTP 429 (Too Many Requests) errors, and the system may queue up to 86,400 calls (24 hours of traffic at 1 CPS) before failing.

To manage these spikes, use exponential backoff logic to handle 429 errors gracefully and monitor Twilio-Concurrent-Requests headers to track real-time load. Persistent HTTP connections with keep-alive can reduce handshake overhead and improve performance. Keep an eye on real-time metrics like jitter, packet loss, and Mean Opinion Score (MOS) using Voice Insights to spot and address quality issues early. For high-volume campaigns, application-side queues can help prioritize interactions that matter most. Additionally, registering numbers through the Free Caller Registry and implementing SHAKEN/STIR authentication can improve answer rates and avoid "Spam Likely" labels.
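Exponential backoff for 429 responses can be sketched as follows. The `send` callable stands in for whatever function issues the API request and returns its HTTP status code; it, the delay values, and the jitter scheme are assumptions for illustration, not provider recommendations.

```python
import random
import time
from typing import Callable


def call_with_backoff(send: Callable[[], int],
                      max_attempts: int = 5,
                      base_delay: float = 0.5,
                      max_delay: float = 8.0,
                      sleep: Callable[[float], None] = time.sleep,
                      jitter: Callable[[], float] = random.random) -> int:
    """Retry `send` while it returns 429, doubling the wait each time."""
    for attempt in range(max_attempts):
        status = send()
        if status != 429:
            return status                      # success or a non-retryable error
        if attempt == max_attempts - 1:
            break                              # out of attempts, give up
        delay = min(max_delay, base_delay * 2 ** attempt)
        # Jitter spreads retries between 50% and 100% of the delay so that
        # many clients do not all retry at the same instant.
        sleep(delay * (0.5 + jitter() / 2))
    return 429
```

Passing `sleep` and `jitter` as parameters keeps the function easy to test without real waiting. When a request still returns 429 after the last attempt, the caller can park it in an application-side queue instead of dropping it.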

Conclusion

Voice call APIs are revolutionizing how we connect, enabling real-time conversations that feel effortless and natural. With the global voice API market projected to hit $2.5 billion by 2025 and 62% of Americans already interacting with voice assistants, the move toward voice-first engagement is quickly becoming the norm for creator platforms.

Key innovations like maintaining latency under 1.1 seconds, smart end-of-turn detection, and edge colocated services ensure these interactions remain quick and authentic. A standout example is TwinTone's AI Twins, which offer 24/7 engagement and automated content creation while preserving the individuality of each creator's voice and personality. These advancements don't just enhance the user experience - they also deliver measurable benefits for businesses.

Platforms integrating voice technology are seeing real results. Companies leveraging these tools to improve customer experiences report revenue increases of 10–15% and operational cost reductions of 20–50%. For creators, this means automated income streams through brand partnerships without the constant grind of content production. For brands, it provides instant access to genuine, on-demand creator content that drives conversions across platforms like TikTok, Instagram, YouTube, and Shopify - solving the 92% coordination challenges of traditional collaborations.

"Latency is the defining constraint in AI voice agent design. Humans have a deeply ingrained aversion to pauses in conversation, and even small delays can make an interaction feel unnatural." – Phil Bredeson, Twilio

The platforms that perfect this balance - combining lightning-fast voice APIs, AI-powered personalization, and multilingual capabilities (supporting over 40 languages) - are poised to lead the next wave of creator-driven social commerce.

This evolution not only scales creator expertise but also breaks down language barriers, delivering the real-time interactions fans crave - whether for local or global audiences, day or night.

FAQs

How do voice call APIs improve real-time communication on creator platforms?

Voice call APIs open up exciting opportunities for creator platforms by embedding real-time communication tools directly into apps or websites. This means creators can connect with their audiences in more interactive ways, like hosting live call-ins during streams, running Q&A sessions, or even offering instant product demos - all without relying on external tools.

Thanks to cloud-hosted infrastructure, these APIs deliver high-quality audio, low latency, and global accessibility, sparing creators the hassle of managing complex telecom systems. Features like call routing, muting, and AI-driven voice assistants add a layer of customization, making every interaction feel more dynamic and personal. For instance, platforms like TwinTone take it a step further by using AI to create "creator twins." These AI-powered replicas can handle live calls, host automated livestreams, and provide on-demand content for brands, creating a seamless and engaging experience for both creators and their audiences.

What privacy and security features do voice call APIs provide for creators and their audiences?

Voice call APIs prioritize privacy and security, creating a safe space for real-time communication between creators and their audiences. They rely on encrypted connections to safeguard audio data during transmission and implement token-based authentication to ensure that only authorized users can initiate or join calls.

These APIs go a step further with additional security measures like role-based access controls to manage user permissions. They also offer secure call recording options - always requiring user consent - and include features like automatic deletion of recordings to align with privacy regulations such as GDPR and CCPA. For example, platforms like TwinTone, which supports AI-powered livestreams and on-demand video content, use these tools to uphold high standards of security and maintain user trust.

With these protections in place, creators and their audiences can engage confidently, knowing their conversations are secure and responsibly managed.

How do voice call APIs help creators monetize their content?

Voice call APIs make it possible for creators to embed real-time calling into their platforms, unlocking fresh ways to generate income. With tools like call routing, conferencing, recordings, and interactive voice responses, creators can introduce paid services such as consultations, live Q&A sessions, or ticketed audio events. These APIs also support seamless integration with payment systems, simplifying access control and billing.

There’s also potential to earn through exclusive on-demand voice content. For example, creators can repurpose call recordings into podcasts, sell them as premium content, or host virtual workshops with tiered pricing options. By linking voice interactions to a payment structure, these APIs turn real-time communication into a steady revenue source. Platforms like TwinTone take it a step further by using voice call APIs to power AI-driven livestreams and always-available, shoppable content - cutting out delays and manual coordination entirely.