Identity Verification for Creators: Why AI Matters

How to build a futureproof relationship with AI

The rise of AI and deepfake technology has made it harder than ever to confirm whether someone online is real. For creators, this is a growing problem. Scams, impersonation, and AI-generated fakes are damaging trust between creators and their audiences. In 2023 alone, scams cost Americans $12.5 billion, and AI-driven fraud is expected to keep increasing. Platforms that still rely on outdated verification methods, rather than AI-powered multi-factor authentication (MFA), are struggling to keep up.

Here’s the bottom line:

AI-driven fraud is growing fast: Deepfake attacks now happen every five minutes, and fraud involving AI tools makes up 42.5% of detected financial fraud.

Old methods don’t work anymore: Manual checks, like photo uploads or document reviews, are too slow and easy to bypass with AI-generated fakes.

AI-powered verification is the solution: By using tools like biometric liveness detection, document forensics, and deepfake detection, platforms can verify identities in seconds while improving fraud detection accuracy by up to 40%.

AI verification systems are faster, more accurate, and scalable, making them essential for protecting creators and their audiences in today’s digital landscape.

Challenges in Verifying Creator Identities

Impersonation and Fraud Risks

Creator platforms are facing a growing wave of sophisticated fraud tactics, fueled by advancements in AI. Techniques like face swaps, voice cloning, and synthetic avatars are now being used during live interactions to deceive platforms and users. In 2024, deepfake attacks were reported to occur every five minutes, while fraudulent activity on crypto platforms surged by 50% year-over-year and now makes up 9.5% of all activity in the sector. Adding to the problem, underground marketplaces offer "fraud-as-a-service," where criminals can purchase AI-generated ID photos for as little as $15. These tools are specifically designed to bypass automated screening systems. Such fraud methods are setting the stage for even more advanced threats, particularly those involving deepfake technology.

Deepfake Technology Threats

The rapid evolution of deepfake technology is making traditional biometric security measures less reliable. Digital document forgeries have skyrocketed by 244% year-over-year in 2024, surpassing physical counterfeiting as the leading method of identity fraud. These digital forgeries now account for 57% of all document fraud globally. Since 2021, the manipulation of digital documents has increased by an astonishing 1,600%. On top of that, audio cloning has become alarmingly easy - just 10 minutes of recorded speech is enough to create a convincing voice clone. Injection attacks, where synthetic media is fed directly into verification systems to bypass cameras, rose by 200% in 2023. Looking ahead, Gartner predicts that by 2026, 30% of enterprises will stop relying on face biometric verification alone due to the growing threat of AI-generated deepfakes.

Scaling Verification for Global Platforms

For global platforms, verifying creator identities is further complicated by the need to comply with diverse regulations like KYC, AML, GDPR, and CCPA while handling thousands of document types in multiple languages. This requires a robust technical infrastructure, which comes with high costs for training, deploying, and maintaining AI models. Traditional verification methods, such as using paid subscriptions or credit card checks, can unintentionally exclude lower-income creators in certain regions. Adding to the urgency, projections suggest that AI-generated content could make up as much as 90% of all internet data by 2030. This makes distinguishing between real creators and synthetic identities an increasingly complex task. Steven Adler from OpenAI highlights the gravity of the situation:

"The Internet is inadequately prepared for the challenges highly capable AI may pose... deceptive AI-powered activity could overwhelm the Internet".

These mounting challenges underscore the pressing need for advanced AI-driven verification solutions to ensure the integrity of online platforms.


Why Traditional Verification Methods Fall Short

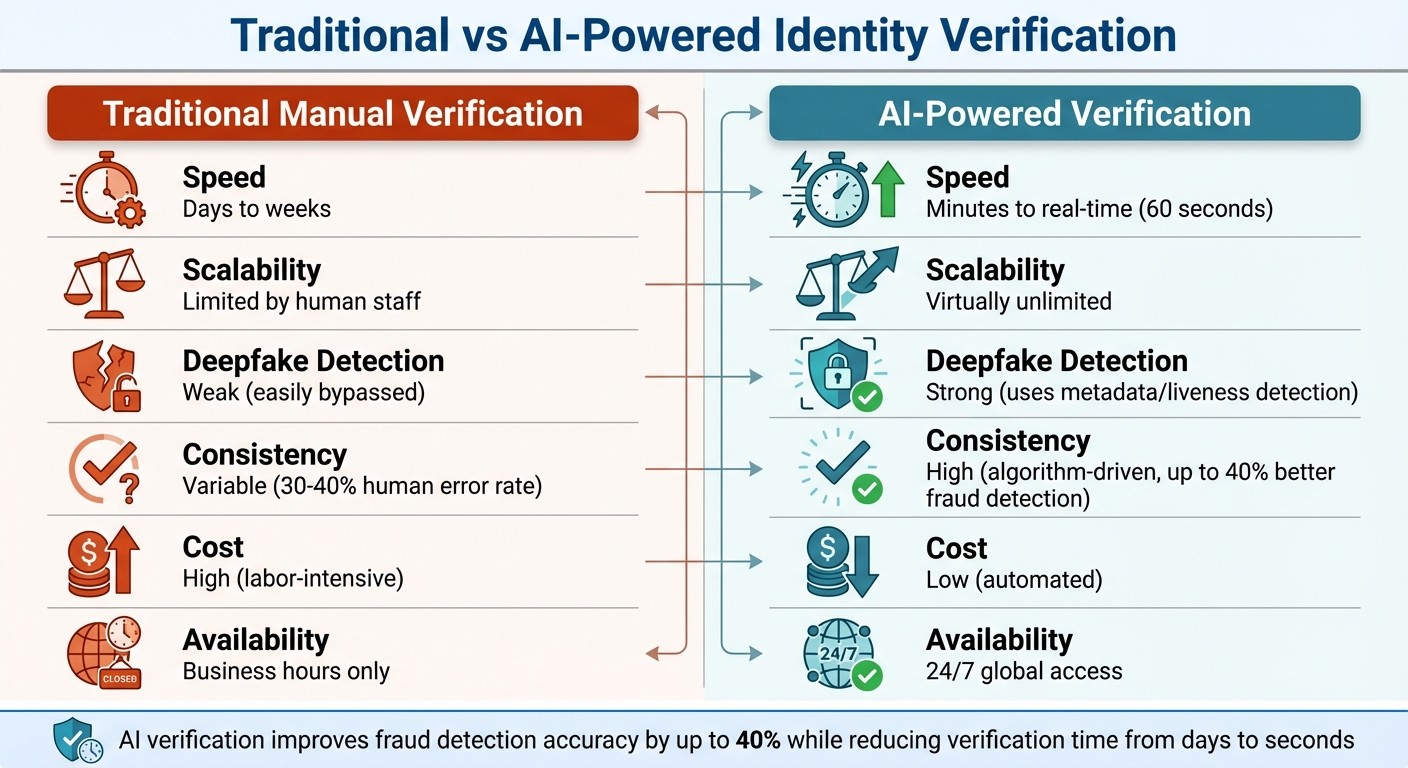

Traditional vs AI-Powered Identity Verification: Speed, Accuracy, and Cost Comparison

As deepfake technology and AI tools become increasingly advanced, older verification methods struggle to keep up with the growing challenges they pose.

Slow and Labor-Intensive Processes

Traditional Know Your Customer (KYC) methods rely heavily on manual reviews, physical documents, and in-person checks. These processes often create significant delays, especially for platforms needing to verify creators remotely. Each submission requires human oversight, which can take days - or even weeks - to complete. For global platforms handling thousands of sign-ups daily, this approach simply doesn’t scale. As operations grow, the demand for resources skyrockets, making these systems even less efficient.

Human Error and Inconsistent Results

One of the biggest weaknesses of manual verification is its inconsistency. Studies show that human reviewers fail to catch errors in 30–40% of cases. What one reviewer might approve, another could reject, creating a frustrating and unpredictable experience for users. These inconsistencies become even more problematic as AI-powered tools grow more sophisticated. For instance, bots can now bypass CAPTCHAs and mimic genuine user behavior, leaving human reviewers struggling to spot the difference.

Inability to Detect AI-Generated Fakes

Perhaps the most glaring issue with traditional verification is its inability to handle AI-generated fraud. As Phillip Shoemaker from Identity.com explains:

"Traditional KYC processes are ineffective against AI-generated fraud".

Manual checks like "selfie with ID" are no match for AI-generated images. In February 2024, a platform called OnlyFake exposed this vulnerability by using neural networks to create hyper-realistic ID photos for just $15 - photos that successfully passed standard automated verification systems. With over 2,000 deepfake tools readily available online, many designed to bypass KYC checks, these older methods are simply outclassed. Additionally, manual reviews often overlook critical metadata, such as device origin, timing, or light reflections, which are essential for verifying a user’s authenticity.

| Feature | Traditional Manual Verification | AI-Powered Verification |
|---|---|---|
| Speed | Days to weeks | Minutes to real-time |
| Scalability | Limited by human staff | Virtually unlimited |
| Deepfake Detection | Weak (easily bypassed) | Strong (uses metadata/liveness) |
| Consistency | Variable (human error) | High (algorithm-driven) |
| Cost | High (labor-intensive) | Low (automated) |

Traditional verification methods, while once effective, are no longer equipped to handle the sophisticated threats presented by modern AI and deepfake technologies. These shortcomings highlight the urgent need for more advanced, automated solutions.

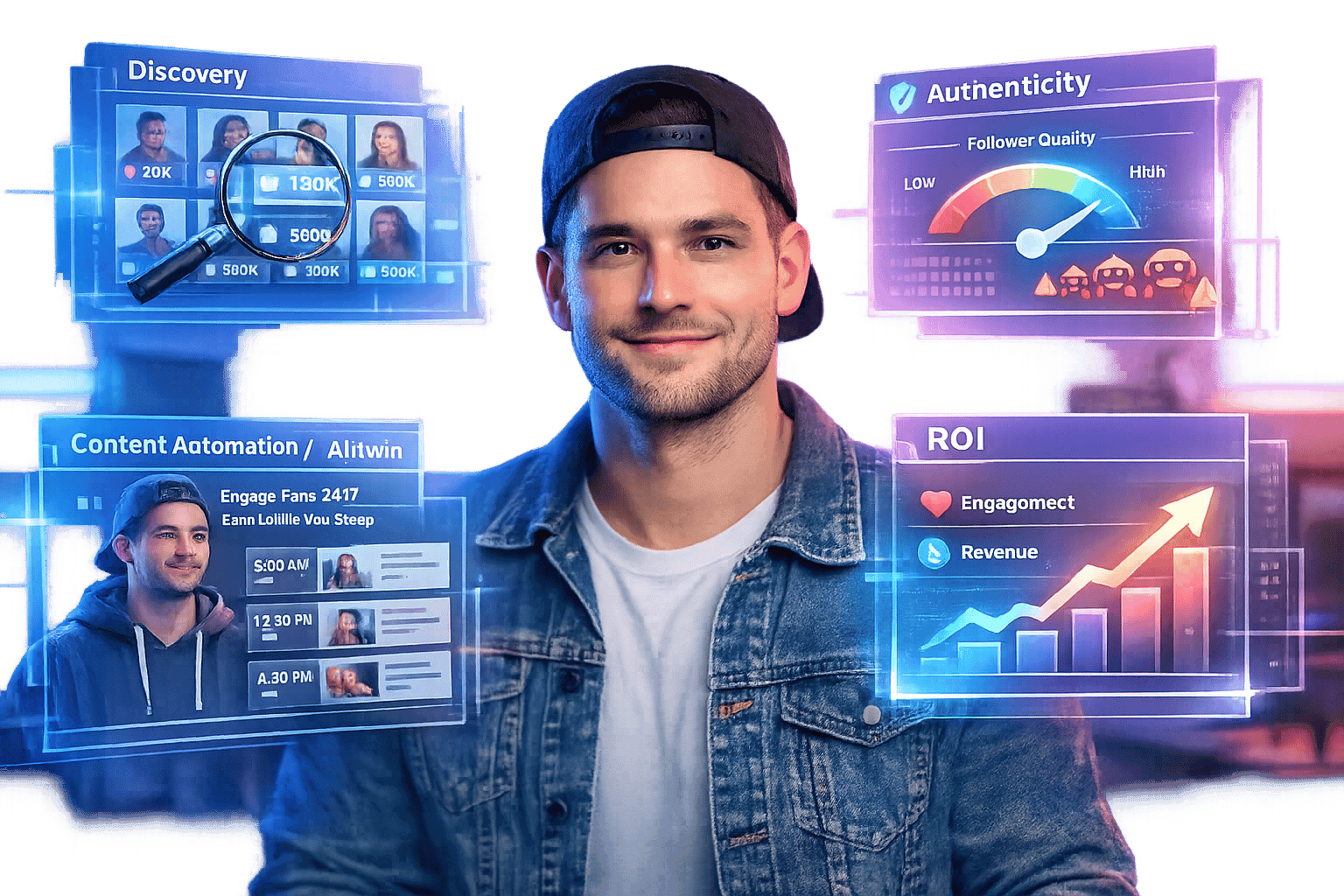

How AI-Powered Tools Solve Verification Problems

AI-powered verification systems are transforming how we tackle fraud, offering a significant leap over traditional manual methods. By improving fraud detection accuracy by up to 40% and utilizing standardized machine learning models, these systems ensure consistent results across millions of sessions. This shift, often referred to as Identity 3.0, combines AI-driven biometric analysis, document forensics, and behavior tracking to make the verification process faster and more reliable.

As Aniket Vaidya and Anurag Awasthi from Google note:

"AI-powered systems offer superior fraud detection speed and efficiency compared to manual methods, with real-time processing for document verification and facial recognition in seconds".

A key component of this advancement is biometric liveness detection, which ensures that a real person is behind the interaction.

Biometric Liveness Detection

Liveness detection is designed to confirm that the user is a live human rather than a static image or recording. In active liveness detection, individuals may be asked to perform simple actions, like blinking or nodding. Meanwhile, passive liveness detection uses deep learning to analyze a single image for subtle details such as skin texture, lighting variations, and micro-expressions. Some systems take it a step further, incorporating tools like 3D depth cameras or infrared sensors to differentiate between a three-dimensional face and a flat image. For example, the FaceMe® SDK demonstrated a 99.83% accuracy rate during NIST testing, completing verifications in less than half a second.
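
The challenge-response idea behind active liveness detection can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: the action names, the 30-second window, and the function names are all assumptions, and the step that actually recognizes a blink or nod from video is assumed to happen elsewhere.

```python
import secrets
import time
from dataclasses import dataclass

# Hypothetical set of prompts; real systems choose their own.
ACTIONS = ("blink", "nod", "turn_left", "turn_right")


@dataclass(frozen=True)
class Challenge:
    nonce: str            # random value tying the response to this session
    actions: tuple        # actions the user must perform, in order
    issued_at: float      # issue time, seconds since the epoch
    ttl_seconds: float = 30.0  # assumed validity window


def issue_challenge(num_actions=3, now=None):
    """Create an unpredictable sequence of actions for one session."""
    rng = secrets.SystemRandom()
    actions = tuple(rng.choice(ACTIONS) for _ in range(num_actions))
    issued = time.time() if now is None else now
    return Challenge(secrets.token_hex(16), actions, issued)


def verify_response(challenge, nonce, observed_actions, now=None):
    """Accept only the right actions, for the right nonce, within the window."""
    now = time.time() if now is None else now
    if not secrets.compare_digest(nonce, challenge.nonce):
        return False  # response belongs to a different session
    if now - challenge.issued_at > challenge.ttl_seconds:
        return False  # too slow: possibly a replayed or edited recording
    return tuple(observed_actions) == challenge.actions
```

Because the sequence is random and short-lived, a pre-recorded video is unlikely to contain the right actions in the right order for a given session.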

Beyond visual methods, AI also examines session metadata - such as timing patterns and bursts of activity - to verify that the interaction originates from a real person.
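
One simple way to look at timing patterns is to measure how regular the gaps between user events are, since scripted input is often far more uniform than human input. The sketch below uses the coefficient of variation of those gaps. The `min_cv` threshold of 0.1 and the function names are illustrative assumptions, and a production system would combine many more signals than this.

```python
from statistics import mean, pstdev


def interval_regularity(timestamps):
    """Return the coefficient of variation of the gaps between events.

    Returns None when there are too few events to judge.
    """
    gaps = [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]
    if len(gaps) < 2:
        return None
    average_gap = mean(gaps)
    if average_gap <= 0:
        return None
    return pstdev(gaps) / average_gap


def looks_scripted(timestamps, min_cv=0.1):
    """Flag sessions whose event timing is suspiciously uniform.

    min_cv is an assumed threshold chosen for illustration only.
    """
    cv = interval_regularity(timestamps)
    return cv is not None and cv < min_cv
```

For example, events arriving exactly every half second would be flagged, while irregular gaps such as 0.8 s, 0.3 s, 1.8 s, and 0.5 s would not.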

Facial Matching and Document Forensics

Facial matching involves comparing a live biometric scan with the photo on an official ID. AI systems use tools like Optical Character Recognition (OCR) and Machine Readable Zone (MRZ) parsing to cross-check information against established document formats and detect inconsistencies that might go unnoticed in manual reviews. Document forensics adds another layer of scrutiny, analyzing features like holograms, watermarks, and micro-printing to identify signs of tampering. These systems also ensure that biometric data is captured on a trusted device in real time, employing tools like Apple's DeviceCheck or Android's Play Integrity to confirm device authenticity.
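
As one concrete example of MRZ cross-checking, each key MRZ field carries a check digit defined in ICAO Doc 9303: characters are mapped to numbers, multiplied by the repeating weights 7, 3, 1, and summed modulo 10. The sketch below computes that digit. The function names are illustrative, and the sample values are for demonstration only; a real pipeline would first extract the fields via OCR.

```python
WEIGHTS = (7, 3, 1)  # repeating weights defined by ICAO Doc 9303


def mrz_char_value(ch: str) -> int:
    """Map one MRZ character to its numeric value."""
    if "0" <= ch <= "9":
        return int(ch)
    if "A" <= ch <= "Z":
        return ord(ch) - ord("A") + 10  # A=10 ... Z=35
    if ch == "<":
        return 0  # filler character
    raise ValueError(f"invalid MRZ character: {ch!r}")


def mrz_check_digit(field: str) -> int:
    """Compute the check digit for one MRZ field."""
    total = sum(mrz_char_value(ch) * WEIGHTS[i % 3] for i, ch in enumerate(field))
    return total % 10


def field_is_consistent(field: str, check_char: str) -> bool:
    """True when the printed check digit matches the computed one."""
    if len(check_char) != 1 or not ("0" <= check_char <= "9"):
        return False
    return mrz_check_digit(field) == int(check_char)


# Sample document number and date fields with their check digits.
print(field_is_consistent("L898902C3", "6"))  # True
print(field_is_consistent("740812", "2"))     # True
print(field_is_consistent("740813", "2"))     # False: one digit altered
```

A mismatch does not prove fraud on its own (OCR errors cause it too), but it is a cheap signal that the printed data was altered or misread.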

Once documents and live captures are validated, the focus shifts to countering advanced threats like deepfakes.

Deepfake Detection and Prevention

Deepfake detection is now a critical part of verification systems. Neural networks are trained to identify presentation attacks, such as screen replays, 3D masks, and AI-generated avatars, by spotting digital artifacts that humans can't easily detect. Techniques like 3D depth analysis and infrared scanning further help distinguish a live person from high-resolution fakes. With over 2,000 deepfake tools available online and platforms like OnlyFake offering realistic ID photos for as little as $15, the stakes are high. To counter this, advanced systems use cryptographic binding and hardware-backed keys to ensure that even the most convincing deepfake cannot provide the necessary proof of identity.
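
The idea of cryptographic binding can be illustrated as follows: the server issues a fresh nonce, and the device returns a tag computed over that nonce and a hash of the captured frame, so the capture cannot be swapped or replayed. This is a simplified sketch under stated assumptions. In particular, HMAC with a shared key stands in here for a signature from a hardware-backed asymmetric key, which needs platform APIs outside the Python standard library, and the function names are hypothetical.

```python
import hashlib
import hmac
import secrets

NONCE_BYTES = 16  # fixed length so nonce and digest cannot be confused


def sign_capture(device_key: bytes, nonce: bytes, capture: bytes) -> bytes:
    """Bind a captured frame to a server nonce.

    Assumption: HMAC-SHA256 stands in for a hardware-backed signature.
    """
    digest = hashlib.sha256(capture).digest()
    return hmac.new(device_key, nonce + digest, hashlib.sha256).digest()


def verify_capture(device_key: bytes, nonce: bytes, capture: bytes, tag: bytes) -> bool:
    """Check that the tag matches this exact capture and this exact nonce."""
    expected = sign_capture(device_key, nonce, capture)
    return hmac.compare_digest(expected, tag)


# Usage: a fresh nonce per session defeats replay of an earlier capture.
key = secrets.token_bytes(32)
nonce = secrets.token_bytes(NONCE_BYTES)
tag = sign_capture(key, nonce, b"camera-frame-bytes")
print(verify_capture(key, nonce, b"camera-frame-bytes", tag))    # True
print(verify_capture(key, nonce, b"injected-deepfake", tag))     # False
```

An injected deepfake stream that never passes through the trusted device has no access to the key, so it cannot produce a valid tag however realistic it looks.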

As Phillip Shoemaker from Identity.com aptly states:

"The challenge is no longer just confirming a document or a face - it's proving that a real person is behind the interaction".

With predictions that up to 90% of online content could be synthetic or AI-generated by 2027, organizations are ramping up defenses. Many now conduct red-team testing, simulating deepfake attacks to uncover and address potential vulnerabilities.

These AI-driven solutions are crucial for combating fraud and ensuring authenticity. Platforms like TwinTone are already leveraging these tools to protect creator identities, enabling secure interactions that support on-demand content and social commerce.

Benefits of AI Verification for Creator Platforms

AI-powered verification systems are transforming the way creator platforms operate, offering speed, security, and scalability. These systems can verify identities in as little as 60 seconds, a stark contrast to manual methods that depend on staff availability during business hours. Plus, AI works 24/7, allowing creators to complete verification from anywhere, anytime. This not only cuts costs but also strengthens platform integrity, paving the way for long-term growth.

Faster Verification at Lower Cost

AI simplifies complex processes like document forensics, facial matching, and liveness detection, making onboarding faster and more cost-effective. With AI, platforms can handle growth without needing to scale up staff or increase operational expenses. Additionally, companies can avoid hefty penalties - on average, businesses spend $14.82 million on Anti-Money Laundering (AML) fines due to weak verification systems. By implementing strong AI-driven Know Your Customer (KYC) protocols, platforms not only meet regulatory requirements but also make the process smoother for legitimate creators.

Improved Trust and Security

AI verification doesn't just speed things up - it also builds trust by ensuring every creator's authenticity. For example, YouTube partnered with Creative Artists Agency (CAA) to pilot a likeness detection tool that helps identify and manage unauthorized deepfake content. In October 2025, this tool became available to YouTube Partner Program creators, enabling them to report deepfakes through the YouTube Studio Content Detection tab. This development matters because identity attacks have surged dramatically: between 2023 and 2024, face swap deepfake attacks increased by 300%, image and video injection attacks by 783%, and virtual camera attacks by 2,665%.

Amjad Hanif, Vice President of Creator Products at YouTube, highlighted the platform's dedication:

"As AI evolves, we believe it should enhance human creativity, not replace it. We're committed to working with our partners to ensure future advancements amplify their voices".

Integration with Creator Tools

Platforms like TwinTone are taking AI verification a step further by integrating it directly into their onboarding processes. This ensures digital replicas are authorized and protects creators' intellectual property while boosting engagement. TwinTone requires creators to upload a "consent video" and link their social media profiles before creating an AI Twin, guaranteeing authenticity. By late 2025, TwinTone had scaled to support over 20,000 creators and 1,000+ brands, with AI-powered live shopping streams achieving up to 12x higher engagement rates compared to traditional human-led streams.

James Rowdy, Founder and CEO of TwinTone, shared the platform's vision:

"The future of social commerce isn't bots, it's AI versions of real creators driving engagement, storytelling, and shopping".

Conclusion: AI and the Future of Secure Creator Interactions

The creator economy stands at a pivotal moment. Traditional verification methods, once reliable, are now struggling to keep up with the sheer scale and sophistication of modern threats. With deepfake tools widely accessible and AI-generated IDs available for as little as $15, these outdated approaches simply can't meet today's challenges. The need for faster, stronger solutions has never been more urgent.

AI-powered verification offers a way forward. By using real-time biometric liveness detection, document forensics, and behavioral risk assessment, these tools can detect fraud with up to 40% greater accuracy. What’s more, they complete verifications in seconds rather than days and can scale to handle millions of users globally - all without compromising security or consistency.

But the progress doesn’t stop there. The move toward Identity 3.0 signifies a deeper transformation. It’s not just about faster checks; it’s about creating systems that establish trust at the infrastructure level. Frameworks like Proof of Humanity and Content Credentials (C2PA) are setting new standards by cryptographically verifying the origin of digital content. As researcher Sebastian Barros puts it:

"The network layer is strategically positioned within the internet stack and equipped via Telco infrastructure to anchor certification of human-originated content".

This shift is timely, especially as synthetic content is expected to make up 90% of all internet data by 2030. Platforms adopting tools like TwinTone’s consent video system and social media linking aren’t just addressing today’s security concerns - they’re laying the groundwork for genuine, scalable connections between creators and their audiences in a world increasingly shaped by AI.

AI has already reshaped identity verification. The pressing question now is whether platforms will embrace these advancements quickly enough to outpace evolving threats. Those that do will lead the way in fostering secure, authentic interactions that will define the future of the creator economy.

FAQs

How does AI verification enhance security for creators?

AI verification steps up security by incorporating cutting-edge tools like biometric analysis, document verification, and real-time risk assessment. These technologies excel at detecting fraud, deepfake impersonations, and synthetic identities, offering a level of precision that traditional methods simply can't match.

Through cryptographic credentials and automated processes, AI can swiftly and securely verify a creator's identity. This not only shields creators from identity theft but also fosters trust within their communities, ensuring safer and more reliable interactions between creators and their audiences.

What challenges do traditional methods face in verifying creator identities?

Traditional identity verification methods - like photo IDs or basic facial recognition - are increasingly falling short against modern threats such as AI-generated forgeries, deepfakes, and synthetic identities. These advanced tactics can open the door to impersonation, data breaches, and other security risks.

As fraudsters get more sophisticated, sticking to static or outdated verification methods leaves critical vulnerabilities. This makes it much tougher to maintain secure interactions between creators and their audiences. To close these gaps, adopting advanced AI-driven solutions is becoming essential for protecting identity verification processes.

How does deepfake technology pose a risk to identity verification?

Deepfake technology has reached a point where it can produce incredibly lifelike fake videos, audio, and images that convincingly imitate real people. This capability gives fraudsters a powerful tool to impersonate individuals, making it possible to slip past traditional identity verification methods, like photo or selfie-based checks.

With these synthetic media tools in hand, bad actors pose a serious risk to the integrity of Know Your Customer (KYC) and eKYC processes. To counter these threats, adopting advanced solutions such as AI-driven verification tools is becoming more critical than ever.