How AI Detects Harmful Content in Livestreams


Livestreaming happens in real-time, which means harmful content can spread to thousands of viewers in seconds. AI solves this by moderating video, audio, and chat data instantly, flagging or removing violations like offensive comments, explicit visuals, or violent speech. Platforms use AI because human moderators can’t keep up with the sheer scale of livestreams.

Here’s how it works:

Video Analysis: AI detects weapons, explicit imagery, or banned faces using computer vision.

Audio Processing: Speech is transcribed and scanned for threats or abuse.

Chat Moderation: AI reviews messages before they’re seen, filtering toxic language or spam.

AI assigns confidence scores to potential violations, acting immediately or escalating unclear cases to human moderators. This approach helps platforms comply with laws like the EU Digital Services Act and avoid fines, while also keeping livestreams safe and engaging for viewers.


How AI Moderation Works in Real Time

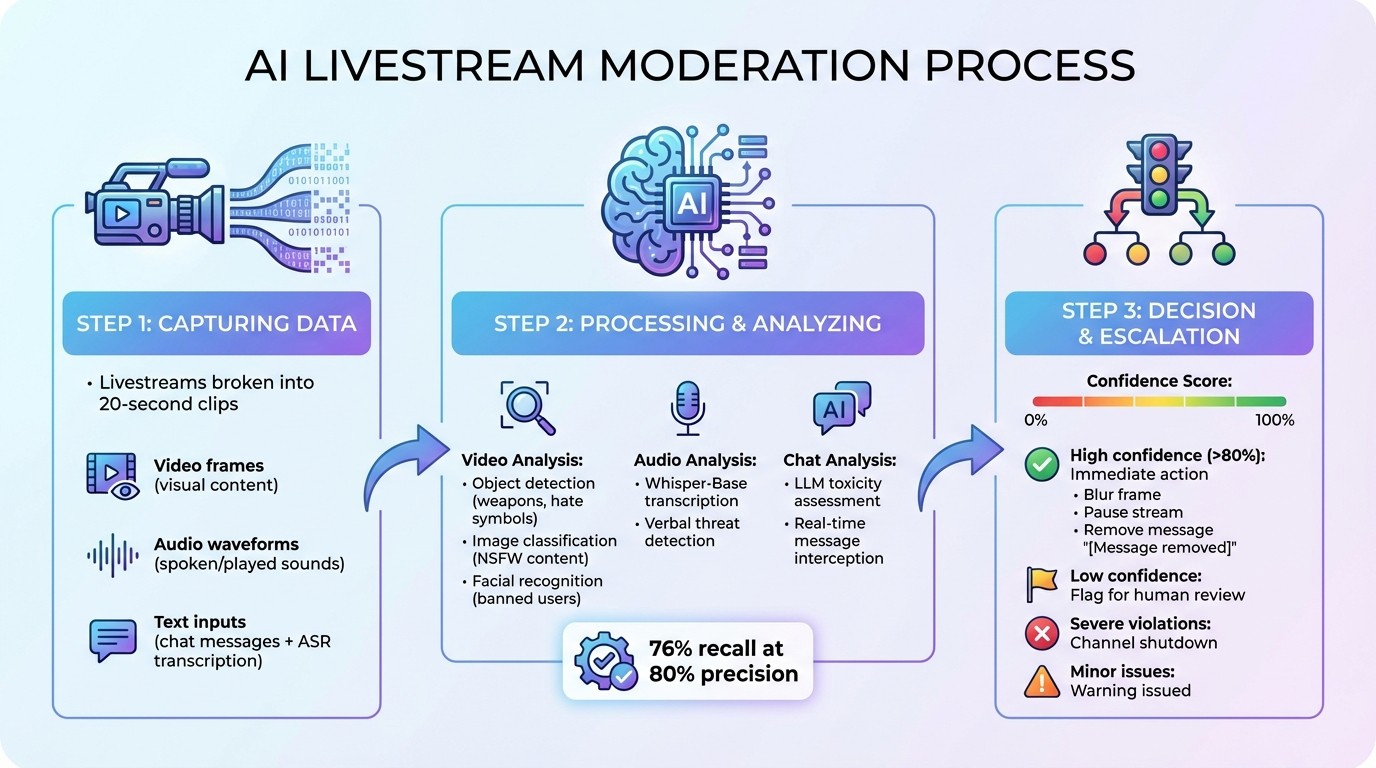

How AI Moderates Livestream Content in Real-Time: 3-Step Process

Livestreaming is unpredictable, which makes fast, accurate content moderation essential, including for live shopping streams. AI moderation unfolds in three main steps: capturing raw data, processing it through specialized models, and deciding quickly which action to take. All of this happens in real time.

Capturing Video, Audio, and Text Data

AI systems break down livestreams into 20-second clips to keep the analysis efficient and manageable. Video frames are extracted at regular intervals, gathering enough data for analysis without overloading the system.

The moderation system works with three data streams simultaneously: video frames for visual content, audio waveforms to capture spoken or played sounds, and text inputs that include live chat messages and speech converted to text using Automatic Speech Recognition (ASR). By analyzing these multiple formats together, the system can catch violations that might go unnoticed if only one data type were monitored.

Once the data is segmented, it moves to specialized AI models for rapid processing.
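The segmentation step can be sketched as follows. This is a minimal illustration, not any platform's actual pipeline: the 20-second clip length comes from the text above, while the 2-second sampling interval and the names `Clip` and `segment_stream` are assumptions made for the example.

```python
from dataclasses import dataclass

CLIP_SECONDS = 20.0   # clip length mentioned in the article
SAMPLE_EVERY = 2.0    # assumed frame-sampling interval, in seconds


@dataclass(frozen=True)
class Clip:
    start: float                     # clip start time in seconds
    end: float                       # clip end time in seconds
    frame_times: tuple[float, ...]   # timestamps of the frames to extract


def segment_stream(duration: float,
                   clip_seconds: float = CLIP_SECONDS,
                   sample_every: float = SAMPLE_EVERY) -> list[Clip]:
    """Split a stream of `duration` seconds into clips and pick frame timestamps."""
    if clip_seconds <= 0 or sample_every <= 0:
        raise ValueError("clip_seconds and sample_every must be positive")
    clips: list[Clip] = []
    start = 0.0
    while start < duration:
        end = min(start + clip_seconds, duration)
        times = []
        i = 0
        # Sample frames at regular intervals inside [start, end).
        while start + i * sample_every < end:
            times.append(start + i * sample_every)
            i += 1
        clips.append(Clip(start, end, tuple(times)))
        start = end
    return clips


clips = segment_stream(50.0)
print(len(clips), len(clips[0].frame_times), len(clips[-1].frame_times))
```

A shorter sampling interval catches brief violations at the cost of more frames per clip, so the interval is a tuning knob rather than a fixed value.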

Processing and Analyzing the Data

Each type of data is routed to AI models specifically designed for that format. For video, computer vision algorithms handle tasks like object detection to identify weapons or hate symbols, image classification to flag NSFW content, and facial recognition to spot banned users. On the audio side, tools like Whisper-Base transcribe speech, enabling the system to quickly detect verbal threats.

Meanwhile, chat messages are intercepted before they appear to viewers. Large Language Models (LLMs) analyze these messages in real time to assess toxicity. Advanced systems also use fusion modules, such as METER, to combine visual and audio data into a single representation. This approach significantly enhances the system's ability to detect violations that span multiple formats.
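To see why combining formats helps, consider a simplified sketch. It uses a noisy-OR combination of per-modality scores, which is a deliberately simple stand-in and not how METER or any specific fusion module works (those operate on learned representations, not final scores). It also assumes the modalities are independent, which real streams rarely are. The function name `fuse_scores` is hypothetical.

```python
from math import prod


def fuse_scores(scores: dict[str, float]) -> float:
    """Combine per-modality violation probabilities with a noisy-OR.

    Assumes each value is a probability in [0, 1] and that the
    modalities are independent (a simplification for illustration).
    """
    for name, p in scores.items():
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"score for {name!r} must be in [0, 1]")
    # Probability that at least one modality signals a real violation.
    return 1.0 - prod(1.0 - p for p in scores.values())


segment = {"video": 0.5, "audio": 0.5, "text": 0.5}
print(max(segment.values()), fuse_scores(segment))
```

In this example no single modality would cross an 80% threshold on its own, but the combined evidence does.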

For instance, the similarity-matching pipeline of one hybrid framework reached 76% recall at 80% precision for real-time violation detection, which shows how effective these models can be.
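"Recall at a given precision" means: among all thresholds where at least that share of flagged items are true violations, report the largest share of true violations caught. The sketch below computes it from scored, labeled examples. The function name and the toy data are assumptions for illustration and have no connection to the cited figures.

```python
def recall_at_precision(scores: list[float],
                        labels: list[int],
                        min_precision: float) -> float:
    """Best recall over all score thresholds whose precision >= min_precision."""
    pairs = sorted(zip(scores, labels), key=lambda p: p[0], reverse=True)
    total_positive = sum(labels)
    if total_positive == 0:
        return 0.0
    best = 0.0
    tp = fp = 0
    i = 0
    while i < len(pairs):
        score = pairs[i][0]
        # Items with the same score fall on the same side of any threshold.
        while i < len(pairs) and pairs[i][0] == score:
            if pairs[i][1]:
                tp += 1
            else:
                fp += 1
            i += 1
        precision = tp / (tp + fp)
        if precision >= min_precision:
            best = max(best, tp / total_positive)
    return best


# Toy data: 1 = real violation, 0 = benign.
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4]
labels = [1, 1, 0, 1, 0, 1]
print(recall_at_precision(scores, labels, 0.8))
```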

Making Decisions and Escalating Issues

After detecting a potential violation, the AI assigns a confidence score (ranging from 0–100%) to indicate how likely it is that the content breaches platform rules. If the score surpasses a set threshold - such as 80% for violent content - the system acts immediately, possibly by blurring the frame or pausing the stream. Cases with lower confidence scores are flagged for human moderators to review.

What happens next depends on the type and severity of the violation. Minor issues might result in a warning, while severe infractions, like explicit content, can lead to the channel being shut down instantly. For chat moderation, LLMs replace offending messages with "[Message removed]".
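The decision logic described here can be sketched as a small threshold table. Only the 80% threshold for violent content comes from the text. The other thresholds, the review floor, the category names, and the mapping from category to action are assumptions chosen for the example.

```python
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    HUMAN_REVIEW = "human_review"
    BLUR_FRAME = "blur_frame"
    STOP_STREAM = "stop_stream"


# 0.80 for violence is from the article; every other number is assumed.
AUTO_THRESHOLDS = {"violence": 0.80, "explicit": 0.90}
AUTO_ACTIONS = {"violence": Action.BLUR_FRAME, "explicit": Action.STOP_STREAM}
DEFAULT_THRESHOLD = 0.95   # assumed threshold for categories not listed above
REVIEW_FLOOR = 0.40        # assumed: below this, nothing is flagged


def decide(category: str, confidence: float) -> Action:
    """Map a category and a confidence score in [0, 1] to a moderation action."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    threshold = AUTO_THRESHOLDS.get(category, DEFAULT_THRESHOLD)
    if confidence > threshold:
        # High confidence: act immediately, defaulting to review for unknown categories.
        return AUTO_ACTIONS.get(category, Action.HUMAN_REVIEW)
    if confidence >= REVIEW_FLOOR:
        # Unclear case: escalate to a human moderator.
        return Action.HUMAN_REVIEW
    return Action.ALLOW


print(decide("violence", 0.85), decide("violence", 0.60), decide("violence", 0.10))
```

Keeping thresholds in a table per category makes it easy to be strict on severe categories while tolerating more uncertainty on minor ones.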

Interestingly, cost studies show that real-time moderation remains financially viable, even when systems operate at high capture rates.

This split-second decision-making process highlights how AI is transforming content moderation, making it faster and more efficient than ever before.

AI Technologies Used to Detect Harmful Content

AI technologies play a crucial role in identifying and addressing harmful content in real time. These tools are designed to analyze and moderate content across various formats, ensuring safer online environments.

Computer Vision for Video Content

Computer vision systems excel at analyzing livestream videos frame by frame. They use object detection to identify prohibited items like weapons, drugs, or hate symbols, image classification to flag explicit imagery or violent scenes, and face recognition to spot banned users or blur faces to comply with privacy regulations.

To enhance performance, modern systems combine supervised classification with similarity matching. For example, TikTok Singapore's research team, including Wei Chee Yew and Hailun Xu, introduced a hybrid framework in December 2025. This system achieved 76% recall at 80% precision for similarity-based detection, reducing user views of unwanted livestreams by 6%–8%.

"Computer vision is not just a tool - it's a necessity for the future of livestream moderation." - Oleg Tagobitsky, api4.ai

Audio Analysis for Speech and Sound

Audio moderation works by analyzing both spoken language and raw audio waveforms. Automatic Speech Recognition (ASR) converts spoken words into text, enabling NLP algorithms to scan for hate speech or abusive language. Beyond transcripts, AI examines audio waveforms for tone and inflection, integrating these insights with visual and text data. By comparing audio embeddings to violation databases, the system can detect subtle or emerging harmful content.

When flagged, the system can take immediate action - muting the audio, pausing the stream, or alerting human moderators. While visual analysis handles inappropriate imagery, audio processing focuses on harmful verbal content.
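Comparing an audio embedding to a violation database usually comes down to a nearest-neighbor search. The sketch below uses cosine similarity over plain Python lists. The three-dimensional vectors, the labels, and the 0.9 cutoff are made up for illustration; real embeddings come from an audio model and have hundreds of dimensions.

```python
import math
from typing import Optional


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def nearest_violation(embedding: list[float],
                      database: dict[str, list[float]],
                      min_similarity: float = 0.9) -> Optional[str]:
    """Return the label of the most similar known violation, or None."""
    best_label, best_sim = None, min_similarity
    for label, reference in database.items():
        sim = cosine_similarity(embedding, reference)
        if sim >= best_sim:
            best_label, best_sim = label, sim
    return best_label


# Hypothetical reference embeddings for two known violation types.
violation_db = {"threat": [1.0, 0.0, 0.0], "harassment": [0.0, 1.0, 0.0]}
print(nearest_violation([0.9, 0.1, 0.0], violation_db))
print(nearest_violation([1.0, 1.0, 1.0], violation_db))
```

Because new reference embeddings can be added without retraining a model, this style of matching is one way to cover emerging content quickly.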

Natural Language Processing for Chat Messages

NLP systems are used to screen chat messages before they appear on livestreams. These platforms rely on large language models to understand the intent and context of messages. Using sentiment analysis and intent recognition, NLP can distinguish between casual slang and actual abuse. Hybrid moderation pipelines combine supervised classification for known violations with similarity matching to address new or less obvious cases.

When harmful messages are detected, the system may replace them with placeholders like "[Message removed]" before they reach viewers. NLP also works in tandem with ASR to transcribe livestream audio into text, ensuring harmful spoken words are moderated alongside chat messages.
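A hybrid chat filter of this kind can be sketched in a few lines. Here an exact lookup stands in for the supervised classifier, and `difflib` string similarity stands in for embedding-based similarity matching. Both are simplifications. The example phrase, the 0.85 threshold, and all function names are assumptions.

```python
import difflib

PLACEHOLDER = "[Message removed]"
KNOWN_VIOLATIONS = {"buy followers now"}   # hypothetical known spam phrase
SIMILARITY_THRESHOLD = 0.85                # assumed cutoff


def normalize(message: str) -> str:
    """Lowercase and collapse whitespace so trivial variations match."""
    return " ".join(message.lower().split())


def is_known_violation(message: str) -> bool:
    # Stand-in for a trained classifier: exact match against known phrases.
    return normalize(message) in KNOWN_VIOLATIONS


def is_similar_to_violation(message: str) -> bool:
    # Stand-in for similarity matching: catches lightly obfuscated variants.
    text = normalize(message)
    return any(
        difflib.SequenceMatcher(None, text, known).ratio() >= SIMILARITY_THRESHOLD
        for known in KNOWN_VIOLATIONS
    )


def screen_message(message: str) -> str:
    """Return the text viewers should see: the message or a placeholder."""
    if is_known_violation(message) or is_similar_to_violation(message):
        return PLACEHOLDER
    return message


for msg in ["Buy  followers NOW", "buy f0llowers now", "great stream today"]:
    print(screen_message(msg))
```

The screening function runs before a message is broadcast, so viewers only ever see its return value.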

Together, these AI technologies create a safer and more engaging livestream experience for users.

How AI Responds to Harmful Content

AI doesn’t just detect harmful content quickly - it also takes immediate action to block, filter, or escalate it, ensuring it doesn’t reach viewers.

Blocking and Filtering Content

AI systems act as the first line of defense, intercepting harmful content before it reaches the audience. For example, toxic chat messages might be replaced with "[Message removed]" instantly, while objectionable visuals in video streams are blurred in real time. This ensures viewers experience uninterrupted content without delays that could disrupt the stream.

In cases of severe violations - such as explicit violence or illegal activity - AI can take stronger actions. For instance, it might trigger a "StopChannel" command (via services like AWS Elemental MediaLive) to immediately halt the broadcast.
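As a rough sketch, halting a broadcast on a severe violation might look like the following. The function takes the stop call as a parameter so it can be exercised without AWS access. With boto3 you would pass `boto3.client("medialive").stop_channel`, which accepts a `ChannelId` keyword argument. The category names and the function name `halt_if_severe` are assumptions.

```python
from typing import Any, Callable

# Assumed set of categories considered severe enough to halt a broadcast.
SEVERE_CATEGORIES = frozenset({"explicit_violence", "illegal_activity"})


def halt_if_severe(stop_channel: Callable[..., Any],
                   channel_id: str,
                   category: str) -> bool:
    """Stop the channel for severe categories; return True if a stop was issued."""
    if category not in SEVERE_CATEGORIES:
        return False
    # Mirrors the MediaLive StopChannel request, which identifies the channel by ID.
    stop_channel(ChannelId=channel_id)
    return True


# Dry run with a recorder in place of the real AWS call.
issued = []
halt_if_severe(lambda **kwargs: issued.append(kwargs), "1234567", "explicit_violence")
print(issued)
```

Because stopping a channel is disruptive, a production system would likely also log the decision and notify a human, even when the stop is automatic.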

"AI is an ideal moderator in these scenarios. It can quickly react to any incoming message in any language from thousands of users, removing it from the chat, and taking appropriate action." - Raymond F, Developer

When the situation isn’t clear-cut, AI escalates the issue for human review.

Escalating to Human Moderators

For ambiguous cases, AI flags the content and sends it to human moderators for further evaluation. The flagged material - whether a video frame, audio clip, or chat log - is automatically stored in cloud storage (e.g., Amazon S3), ensuring moderators have access to the necessary context.

Moderation teams are alerted in real time through notifications sent via webhooks or messaging services like Amazon SNS. These alerts include metadata, such as the channel ID and the reason for the flag, enabling moderators to quickly assess the situation. Using specialized dashboards, moderators can review the flagged content, confirm violations, or override AI decisions. This process also helps refine the AI system, as flagged edge cases are fed back into the training data to improve accuracy over time.
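The alert itself is typically a small JSON document. The sketch below builds one and hands it to a publish function. The field names, subject line, and storage key are assumptions. With boto3 the publish function could be `boto3.client("sns").publish`, which accepts `TopicArn`, `Message`, and `Subject`.

```python
import json
from typing import Any, Callable


def build_alert(channel_id: str, reason: str,
                confidence: float, evidence_key: str) -> str:
    """Serialize the metadata a moderator needs to triage a flag."""
    return json.dumps(
        {
            "channel_id": channel_id,
            "reason": reason,
            "confidence": confidence,
            # Key of the stored frame, audio clip, or chat log (e.g. in S3).
            "evidence_key": evidence_key,
        },
        sort_keys=True,
    )


def notify_moderators(publish: Callable[..., Any],
                      topic_arn: str, alert_json: str) -> None:
    """Send the alert through the supplied publish function."""
    publish(
        TopicArn=topic_arn,
        Message=alert_json,
        Subject="Livestream flagged for review",
    )


# Dry run: collect the outgoing request instead of calling a real service.
outbox = []
alert = build_alert("channel-42", "possible_weapon", 0.63,
                    "flags/channel-42/frame-001.jpg")
notify_moderators(lambda **kwargs: outbox.append(kwargs),
                  "arn:aws:sns:us-east-1:123456789012:moderation", alert)
print(outbox[0]["Message"])
```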

AI-Only vs. Hybrid Moderation

The choice between AI-only and hybrid moderation depends on the severity and complexity of the violations. Here's a comparison of the two approaches:

| Method | Latency | Accuracy | Scalability | Cost |
|---|---|---|---|---|
| AI-Only | Milliseconds | High for common cases | Unlimited | Low |
| Hybrid | Seconds | Higher for nuanced cases | Dependent on human moderators | Moderate to High |

AI-only moderation is perfect for high-volume environments, where immediate action is needed to remove explicit violations like profanity or nudity. It can handle thousands of streams at once with near-instant response times. However, it sometimes struggles with nuance. For instance, an analysis of Twitch’s AutoMod between December 2024 and January 2025 revealed it missed up to 94% of hateful content in specific datasets due to over-reliance on slur-based detection.

Hybrid moderation, on the other hand, blends AI’s speed with human judgment. Layering also pays off inside the automated part of the pipeline: TikTok Singapore researchers, including Wei Chee Yew and Hailun Xu, implemented a hybrid framework in December 2025 that combined supervised classification with similarity matching. In large-scale A/B tests, the approach reduced user views of unwanted livestreams by 6% to 8%. The classification pipeline achieved 67% recall at 80% precision, while the similarity pipeline reached 76% recall at the same precision level.

"The best systems combine automation with clear review workflows, configurable thresholds, and human input." - Gcore Team

AI Moderation in Social Commerce and Livestream Shopping

AI has stepped up as a crucial tool for protecting brand reputation during high-stakes social commerce events. Livestream shopping, in particular, presents unique challenges. With thousands of viewers watching in real time, even a single inappropriate comment or visual can cause significant harm to a brand's image. Following best practices for AI moderation acts as a safeguard, ensuring brands can maintain their reputation while keeping audiences engaged.

AI-Powered Livestreams for Brands

Livestream shopping events generate an immense volume of interactions. During major product launches or peak shopping periods, traffic surges dramatically, and AI steps in to manage this scale. It monitors thousands of streams simultaneously, identifying violations that would otherwise overwhelm human moderation teams. Just as with broader livestream platforms, social commerce demands swift action to protect a brand’s image.

Brand safety is a top priority. AI uses computer vision to detect unauthorized logos, counterfeit items, and copyrighted material in real time, ensuring compliance with legal standards and brand agreements. When unauthorized content is identified, the system flags or blocks it immediately. Meanwhile, audio analysis and natural language processing work together to evaluate spoken content and filter out toxic chat messages on the spot.

For instance, in 2025, Decathlon implemented the Utopia AI Moderator across multiple markets and reduced its reliance on human moderators by 92.8%, saving the workload equivalent to four full-time employees. Alma Media Corporation also saw impressive results, cutting its average publishing delay in half and achieving a 30% boost in daily comments after switching to automated AI moderation.

These advancements in moderation pave the way for platforms that seamlessly integrate automation with live commerce.

TwinTone and AI Livestream Automation

Platforms like TwinTone are pushing the boundaries of AI moderation by blending safety with advanced automation. TwinTone turns real creators into AI-powered Twins, capable of hosting 24/7 interactive livestreams on platforms like TikTok, Amazon, YouTube, and Shopify. These streams not only engage audiences but also generate shoppable videos and on-demand user-generated content, helping brands scale their social commerce efforts effectively.

The platform’s built-in moderation ensures a secure and engaging shopping environment. AI monitors chat activity in over 40 languages, removing toxic messages before they can disrupt the stream. Visual analysis ensures product presentations meet compliance standards, while audio processing keeps the AI Twin’s speech aligned with the brand’s tone and messaging. This comprehensive system creates a trustworthy and enjoyable experience for viewers, safeguarding both the brand’s image and the audience's trust.

Conclusion

AI plays a crucial role in keeping livestreams safe while ensuring viewers stay engaged. With real-time processing, AI can analyze thousands of streams and flag harmful content in just milliseconds. This swift action helps prevent inappropriate material from ever reaching audiences, protecting both brands and communities.

Modern AI has evolved to understand not just content but also its context, tone, and even subtle cultural differences, significantly reducing false positives. Instead of shutting down entire streams, AI now pinpoints and addresses specific problematic segments as they occur. By combining AI's efficiency in handling high volumes with human moderators' ability to tackle more complex cases, platforms achieve a balanced and highly effective moderation system. This hybrid approach has been validated through extensive testing.

Advances like multimodal analysis, knowledge distillation, and temporal consistency algorithms allow AI to monitor video, audio, and text for violations simultaneously. As livestream shopping and social commerce continue to expand, with techniques such as AI grouping for live shopping events used to boost engagement, the importance of AI moderation will only grow. Platforms that integrate these safety measures can provide round-the-clock engagement while maintaining brand safety and audience trust. AI also evolves continually, learning from new challenges and emerging threats.

"Computer vision is not just a tool - it's a necessity for the future of livestream moderation."

– Oleg Tagobitsky, CEO, API4AI

These advancements make AI the backbone of safe and engaging livestream experiences, ensuring platforms can thrive in an ever-changing digital landscape.

FAQs

How does AI identify harmful content during livestreams?

AI monitors livestreams for harmful content by analyzing video, audio, and chat data through multimodal models. These models integrate information from various sources to detect violations like nudity, violence, or hate speech. Pretrained classifiers handle known issues, while large language models (LLMs) identify more subtle or emerging forms of abuse.

The system evaluates each segment against preset thresholds. If harmful content is found, it can act immediately - removing the content or sending alerts. This real-time approach helps keep livestreams safe and within guidelines.

How do human moderators support AI in livestream content moderation?

Human moderators are essential for keeping livestream content moderation accurate and effective. They step in to review content flagged by AI, resolve tricky or context-dependent cases, and handle instances where AI might overlook or mislabel harmful material.

When the speed and reach of AI are paired with the discernment of human judgment, livestream platforms can tackle complex situations more effectively and create a safer, more enjoyable space for their audiences.

How does AI protect brands during livestream shopping events?

AI plays a crucial role in maintaining brand safety during livestream shopping events by monitoring video, audio, and chat in real time. With the help of computer vision and natural language processing (NLP) models, it can spot potentially harmful content, including profanity, inappropriate imagery, hate speech, or unapproved mentions of competing brands.

Once such content is detected, the AI acts immediately. It can mute audio, block visuals, or remove flagged material altogether. This real-time intervention creates a secure and professional environment, ensuring that both brands and viewers enjoy a seamless and positive shopping experience.