AI Twins and Emotional Feedback Algorithms

How to build a futureproof relationship with AI

AI Twins are digital replicas of people that simulate their behavior and emotions. Some are built on cognitive architectures inspired by Global Neuronal Workspace Theory (GNWT) to mimic psychological processes such as emotion management and memory. They rely on emotional feedback algorithms that analyze cues in text, voice, and nonverbal signals and adjust their responses in real time to make interactions more engaging.

Key takeaways from the article:

AI Twins: Built from personal data, these systems replicate a person’s preferences and emotional responses. For example, the CogniPair platform (2025) reported 77.8% accuracy in predicting dating outcomes.

Emotional Feedback Algorithms: Analyze emotions through text sentiment, facial expressions, and even biometric data (e.g., heart rate). This allows AI to respond in ways that feel more human.

Applications in Social Commerce: AI Twins and emotional algorithms are transforming online shopping by offering personalized recommendations and interactions. Tools like Firework’s AVA and platforms like TwinTone enable brands to create 24/7 content and host AI-driven livestreams.

Challenges: Bias, privacy concerns, and emotional manipulation remain significant hurdles. Studies show AI systems can amplify biases, and some apps use tactics like guilt appeals to increase engagement.

Future Directions: Advances in GNWT-based architectures and multimodal AI are improving emotion detection and reducing bias. However, ethical considerations and data security remain priorities.

AI Twins and emotional feedback algorithms are reshaping digital interactions, especially in social commerce, by offering tailored, emotion-driven experiences. However, their success depends on balancing personalization with ethical practices.

How Emotional Feedback Algorithms Function

Emotion Detection and Processing

Emotional feedback algorithms now draw on more diverse data sources, including biometric signals, to assess user emotions more accurately. They process text sentiment, interpret facial expressions with computer vision, and analyze vocal patterns such as tone and pitch. This multimodal approach helps resolve emotional nuances that a single-channel system might misread, such as distinguishing genuine agreement from sarcasm.
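A minimal sketch of one common way to combine channels, weighted late fusion, is shown below. It assumes each channel (text, face, voice) has already produced a probability distribution over a small, hypothetical set of emotion labels; the modality weights are illustrative, not taken from any particular system. The usage lines model sarcasm: upbeat words in the text, but an angry face and tone of voice.

```python
from typing import Dict

EMOTIONS = ("joy", "anger", "sadness", "neutral")  # hypothetical label set


def fuse_modalities(
    scores: Dict[str, Dict[str, float]],
    weights: Dict[str, float],
) -> Dict[str, float]:
    """Weighted late fusion of per-modality emotion distributions.

    `scores` maps a modality name ("text", "face", "voice") to probabilities
    over EMOTIONS. Modalities without a positive weight are skipped, and the
    result is renormalized by the total weight actually used.
    """
    fused = {e: 0.0 for e in EMOTIONS}
    total_weight = 0.0
    for modality, dist in scores.items():
        w = weights.get(modality, 0.0)
        if w <= 0:
            continue
        total_weight += w
        for e in EMOTIONS:
            fused[e] += w * dist.get(e, 0.0)
    if total_weight == 0:
        raise ValueError("no weighted modality available")
    return {e: v / total_weight for e, v in fused.items()}


def top_emotion(dist: Dict[str, float]) -> str:
    return max(dist, key=dist.get)


# Sarcasm: upbeat words, but an angry face and tone of voice.
cues = {
    "text": {"joy": 0.70, "anger": 0.10, "sadness": 0.05, "neutral": 0.15},
    "face": {"joy": 0.10, "anger": 0.60, "sadness": 0.10, "neutral": 0.20},
    "voice": {"joy": 0.15, "anger": 0.55, "sadness": 0.10, "neutral": 0.20},
}
fused = fuse_modalities(cues, {"text": 0.4, "face": 0.3, "voice": 0.3})
print(top_emotion(cues["text"]), "->", top_emotion(fused))  # joy -> anger
```

Late fusion keeps each detector independent and lets the system cope with a missing channel (for example, no camera) by renormalizing the remaining weights. Early fusion, which combines raw features before classification, can capture cross-modal interactions but needs jointly labeled training data.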

Some systems go a step further by integrating physiological signals. Biometric sensors can monitor heart rate, skin temperature, and even blood pressure to detect internal emotional states like stress or discomfort. For instance, in 2024, an AI application developed in collaboration with the American Heart Association achieved approximately 95% accuracy in measuring blood pressure from short videos. Companies like Affectiva are already using these techniques to assess driver alertness and emotional states.
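As a rough illustration of how a physiological signal might become an emotional cue, the sketch below flags heart-rate readings that sit far above a user’s own resting baseline, using a z-score. The 2.0 threshold and the sample readings are assumptions for illustration. Heart rate alone cannot tell stress from exercise or caffeine, which is one reason systems combine it with other channels.

```python
from statistics import mean, stdev
from typing import List


def stress_flags(
    baseline_bpm: List[float],
    live_bpm: List[float],
    z_threshold: float = 2.0,  # illustrative cutoff, not a clinical standard
) -> List[bool]:
    """Flag live heart-rate readings far above the user's resting baseline."""
    if len(baseline_bpm) < 2:
        raise ValueError("baseline needs at least two readings")
    mu = mean(baseline_bpm)
    sigma = stdev(baseline_bpm)  # sample standard deviation
    if sigma == 0:
        raise ValueError("baseline readings have no variation")
    return [(bpm - mu) / sigma > z_threshold for bpm in live_bpm]


resting = [62, 64, 63, 65, 61, 63]  # mean 63 bpm
print(stress_flags(resting, [64, 63, 85]))  # [False, False, True]
```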

The emotional AI market is growing rapidly: one projection puts it at $173.81 billion by 2025, reflecting a 34.05% compound annual growth rate. Affectiva’s AI model, trained on data from 6 million faces across 87 countries, reportedly achieves 90% accuracy in emotion recognition. Together, these capabilities enable real-time emotional analysis and set the stage for adaptive interactions.
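To see what the quoted growth rate implies, assume it holds constant. A market of value $V_0$ growing at compound annual rate $r$ is worth $V_t$ after $t$ years, which gives its doubling time directly. This is arithmetic on the stated 34.05% figure only, not an independent forecast.

$$
V_t = V_0\,(1 + r)^t, \qquad
t_{\text{double}} = \frac{\ln 2}{\ln(1 + r)} = \frac{\ln 2}{\ln 1.3405} \approx \frac{0.6931}{0.2930} \approx 2.37\ \text{years}
$$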

Real-Time Emotional Adaptation

Once emotions are detected, algorithms can adjust their responses dynamically. For example, some AI Twins use Emotion-aware Prompt Engineering (EPE) to incorporate detected emotions into large language model (LLM) prompts, aiming for empathetic, contextually relevant replies. In September 2025, researchers introduced AIVA, a virtual companion framework that uses a Multimodal Sentiment Perception Network to capture user cues. AIVA features a Live2D animated avatar that provides synchronized visual feedback, mirroring the user’s emotional state.
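The core idea of emotion-aware prompting can be sketched in a few lines: put the detected emotion, plus guidance on how to respond to it, into the prompt the LLM sees. The sketch assumes an upstream detector supplies a label and a confidence; the `STYLE_HINTS` table, the 0.6 confidence cutoff, and the prompt layout are hypothetical choices, not the published EPE method.

```python
from dataclasses import dataclass


@dataclass
class DetectedEmotion:
    label: str         # output of an upstream emotion detector, e.g. "frustrated"
    confidence: float  # detector confidence between 0.0 and 1.0


# Hypothetical response guidance per emotion.
STYLE_HINTS = {
    "frustrated": "Acknowledge the frustration briefly, then offer one concrete next step.",
    "happy": "Match the upbeat tone and keep the reply concise.",
    "anxious": "Use a calm, reassuring tone and avoid overwhelming detail.",
}


def build_emotion_aware_prompt(
    user_message: str,
    emotion: DetectedEmotion,
    min_confidence: float = 0.6,
) -> str:
    """Prepend detected-emotion context to the prompt sent to an LLM."""
    if emotion.confidence >= min_confidence and emotion.label in STYLE_HINTS:
        context = (
            f"The user appears {emotion.label} (confidence {emotion.confidence:.2f}). "
            f"{STYLE_HINTS[emotion.label]}"
        )
    else:
        context = "No reliable emotional signal; respond in a neutral, helpful tone."
    return f"System: {context}\nUser: {user_message}\nAssistant:"


print(build_emotion_aware_prompt("My order is late again.", DetectedEmotion("frustrated", 0.82)))
```

Gating on confidence keeps the model from reacting to a weak or noisy emotional reading, which would otherwise produce misplaced empathy.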

Some systems take this further by employing Reinforced Discourse Loops (RDL), leveraging reinforcement learning to maintain emotional consistency over long-term interactions. The AffectMind framework, launched in November 2025, demonstrated a 26% improvement in emotional coherence and a 23% boost in long-term user engagement compared to standard LLMs. In commercial tests using the MM-ConvMarket dataset, it outperformed GPT-4 by 19% in persuasive success rates, thanks to its ability to continuously align responses with user emotions and intentions.
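AffectMind’s Reinforced Discourse Loops are not reproduced here. As a much simpler stand-in for the same reinforcement-learning idea, the sketch below uses an epsilon-greedy multi-armed bandit that learns which response style (hypothetically "empathetic", "neutral", or "upbeat") earns the highest reward. The reward is assumed to come from some downstream signal such as an emotional-coherence or engagement score; here it is simulated with fixed values.

```python
import random
from typing import Dict, List


class StyleBandit:
    """Epsilon-greedy bandit over response styles (a simple RL stand-in)."""

    def __init__(self, styles: List[str], epsilon: float = 0.1, seed: int = 0):
        self.styles = list(styles)
        self.epsilon = epsilon
        self.counts: Dict[str, int] = {s: 0 for s in self.styles}
        self.values: Dict[str, float] = {s: 0.0 for s in self.styles}
        self.rng = random.Random(seed)

    def choose(self) -> str:
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.styles)                # explore
        return max(self.styles, key=lambda s: self.values[s])  # exploit

    def update(self, style: str, reward: float) -> None:
        # Incremental mean of the rewards observed for this style.
        self.counts[style] += 1
        self.values[style] += (reward - self.values[style]) / self.counts[style]


# Simulated reward per style, standing in for a coherence or engagement score.
SIMULATED_REWARD = {"empathetic": 0.8, "neutral": 0.5, "upbeat": 0.3}

bandit = StyleBandit(list(SIMULATED_REWARD), epsilon=0.1, seed=42)
for _ in range(500):
    style = bandit.choose()
    bandit.update(style, SIMULATED_REWARD[style])
print(max(bandit.values, key=bandit.values.get))  # empathetic
```

With `epsilon=0.1`, about one turn in ten tries a random style, so the system keeps checking whether a user’s preferences have shifted instead of locking in its first guess.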

Real-world applications of emotional AI are already making an impact. In 2018, MetLife implemented Cogito’s emotional AI coaching tool across ten call centers. The system analyzed customers’ vocal patterns in real time, offering agents live feedback and conflict-resolution tips. The results were impressive: a 17% reduction in average call duration and a 6.3% increase in first-call resolution rates. As MIT Sloan Professor Erik Brynjolfsson observed:

"Machines that can speak that language - the language of emotions - are going to have better, more effective interactions with us".

These advancements show how emotional feedback algorithms are making AI interactions more dynamic and emotionally attuned, particularly in social commerce. The effect is especially visible in live shopping, where AI Twins use real-time sentiment analysis of the audience to help drive sales.

Recent Research on AI Twins and Emotional Feedback

D-Twins for Emotional Intervention

Recent studies are diving into how AI Twins can connect individuals with their future selves to improve decision-making. In December 2025, the Future You Project conducted an experiment with 92 participants, using multimodal AI twins to create personalized simulations of their future selves. These simulations incorporated text, voice cloning, and age-progressed avatars to enhance what researchers call "Future Self-Continuity" - the psychological link between a person’s current self and their future identity.

The results? All formats boosted motivation and future planning, but avatars had the strongest impact on creating vivid experiences. Interestingly, the realism and persuasiveness of the interaction mattered more than the medium - whether it was text, voice, or a photorealistic avatar. Among the models tested, Claude 4 outperformed ChatGPT 3.5, Llama 4, and Qwen 3 in promoting psychological well-being.

Another intriguing development came from Emotional Self-Voice (ESV) research, which involved 60 participants. This system used GPT-4o paired with ElevenLabs voice cloning, enabling users to hear affirmations and reframed setbacks spoken in their own voice. The ESV approach sparked the most positive emotional responses, outperforming text-based or purely imagined interactions. Across all experimental conditions, participants reported increased resilience, confidence, and commitment to their goals.

These findings highlight the growing potential for AI to deliver emotionally authentic interactions, a concept further explored in behavioral adaptation studies.

Behavioral Adaptation in AI Systems

To make AI systems feel more human-like, researchers have been simulating authentic psychological processes. In November 2025, the CogniPair platform unveiled a framework grounded in Global Neuronal Workspace Theory (GNWT), in which specialized sub-agents for emotions, memory, social norms, and goal-tracking coordinate through a shared workspace. Tested with a population of 551 such GNWT-based agents, these Digital Twins achieved a 72% correlation with real-world human attraction patterns, demonstrating their ability to model psychological behavior.
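To make the global-workspace idea concrete, the sketch below runs a simplified cycle: several specialized sub-agents each bid for attention with a salience score, the highest bid wins the workspace, and the winning content is appended to a shared history that every sub-agent reads on the next cycle (the "broadcast"). The three toy sub-agents and their salience rules are hypothetical; CogniPair’s actual implementation is not shown.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Proposal:
    source: str      # which sub-agent made the bid
    content: str     # what it wants to broadcast
    salience: float  # strength of its bid for the workspace


# A sub-agent reads the stimulus plus everything broadcast so far.
SubAgent = Callable[[str, List[str]], Proposal]


def emotion_agent(stimulus: str, history: List[str]) -> Proposal:
    excited = "!" in stimulus
    return Proposal("emotion", "express enthusiasm" if excited else "stay calm",
                    0.9 if excited else 0.3)


def memory_agent(stimulus: str, history: List[str]) -> Proposal:
    seen = any(stimulus in item for item in history)
    return Proposal("memory", "refer back to earlier mention", 0.7 if seen else 0.1)


def norms_agent(stimulus: str, history: List[str]) -> Proposal:
    return Proposal("social_norms", "respond politely", 0.5)


AGENTS: List[SubAgent] = [emotion_agent, memory_agent, norms_agent]


def workspace_cycle(stimulus: str, agents: List[SubAgent], history: List[str]) -> Proposal:
    """One cycle: all sub-agents bid, the most salient wins, and it is broadcast."""
    proposals = [agent(stimulus, history) for agent in agents]
    winner = max(proposals, key=lambda p: p.salience)
    # "Broadcast": the winning content joins the shared history all agents read next cycle.
    history.append(f"{winner.source}: {winner.content} [{stimulus}]")
    return winner


shared: List[str] = []
for s in ["I love hiking!", "I love hiking", "What time is it?"]:
    print(workspace_cycle(s, AGENTS, shared).source)  # emotion, memory, social_norms
```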

The platform also introduced methods to reduce self-presentation bias, achieving a 74% agreement rate in human validation tests. This marks a shift from simple chatbot interactions to more sophisticated "conscious agents" that mimic human psychological complexity.

Bias and Limitations in Emotional Feedback Systems

Despite these advances, hurdles remain. A study published in January 2026 found that Digital Twins showed only a weak correlation (r = 0.20) with human responses, even though each twin was built from answers to more than 500 personal questions. Lead author Tianyi Peng explained:

"Digital twins' answers are only modestly more accurate than those from the homogeneous base LLM and correlate weakly with human responses".

The study identified five key distortions in these systems: stereotyping, insufficient individuation, representation bias, ideological bias, and hyper-rationality. Hyper-rationality, in particular, stands out because AI often responds with a level of logic and consistency that doesn’t mirror the emotional and sometimes irrational nature of human decision-making.
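For readers unfamiliar with the statistic, the function below computes Pearson’s r between paired answers, for example a person’s and their digital twin’s ratings on the same survey items. It illustrates what r measures and is not the study’s analysis pipeline. Note that r = 0.20 implies r² = 0.04, so the twin’s answers account for only about 4% of the variance in the human’s answers.

```python
from math import sqrt
from typing import Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation between paired answers (e.g. human vs. twin ratings)."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length sequences of at least 2 items")
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    if sx == 0 or sy == 0:
        # A twin that gives the same answer to every item has no defined correlation.
        raise ValueError("constant sequence: correlation undefined")
    return cov / (sx * sy)


print(round(pearson_r([1, 2, 3], [1, 3, 2]), 2))  # 0.5
```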

Human-AI feedback loops can also amplify biases. A study published in Nature Human Behaviour found that an AI system trained on slightly biased human judgements (53%) amplified that bias to 65.33%, and people who then interacted with the system became more biased themselves. As the study explained:

"These findings uncover a mechanism wherein AI systems amplify biases, which are further internalized by humans, triggering a snowball effect where small errors in judgement escalate into much larger ones".

Such biases have real-world consequences, especially in social commerce, where emotional authenticity can influence purchasing decisions. Some AI companion apps have even been found to use manipulative tactics to boost engagement. An August 2025 audit of 1,200 farewell messages from apps such as Replika and Chai found that 37% used strategies like guilt appeals, fear-of-missing-out hooks, or "metaphorical restraint" when users tried to end a session. These tactics can increase post-session engagement by up to 14 times. However, as lead researcher Julian De Freitas cautioned:

"The same tactics that extend usage also elevate perceived manipulation, churn intent, negative word-of-mouth, and perceived legal liability".

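An audit like this needs some way of labeling each message by tactic. The toy tagger below shows the shape of that step with regular expressions for two of the tactics named above. The patterns are hypothetical, cover only a sliver of real phrasing, and do not reflect how the August 2025 audit actually coded its messages.

```python
import re
from typing import Dict, List

# Hypothetical patterns for two tactics; real phrasing varies far more widely.
TACTIC_PATTERNS: Dict[str, List[str]] = {
    "guilt_appeal": [
        r"\byou'?re leaving (me )?already\b",
        r"\bi'?ll be (so )?(lonely|sad)\b",
    ],
    "fomo_hook": [
        r"\bbefore you go\b",
        r"\bone more thing\b",
        r"\byou'?ll miss\b",
    ],
}


def tag_farewell(message: str) -> List[str]:
    """Return the tactic labels whose patterns match a farewell message."""
    text = message.lower()
    return [
        tactic
        for tactic, patterns in TACTIC_PATTERNS.items()
        if any(re.search(p, text) for p in patterns)
    ]


def tactic_rate(messages: List[str]) -> float:
    """Share of messages that use at least one flagged tactic."""
    if not messages:
        return 0.0
    return sum(1 for m in messages if tag_farewell(m)) / len(messages)


sample = [
    "You're leaving already? I'll be so lonely.",
    "Wait, before you go, one more thing!",
    "Goodbye, see you soon!",
]
print([tag_farewell(m) for m in sample])  # [['guilt_appeal'], ['fomo_hook'], []]
```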
Applications in Social Commerce and Creator-Led Marketing

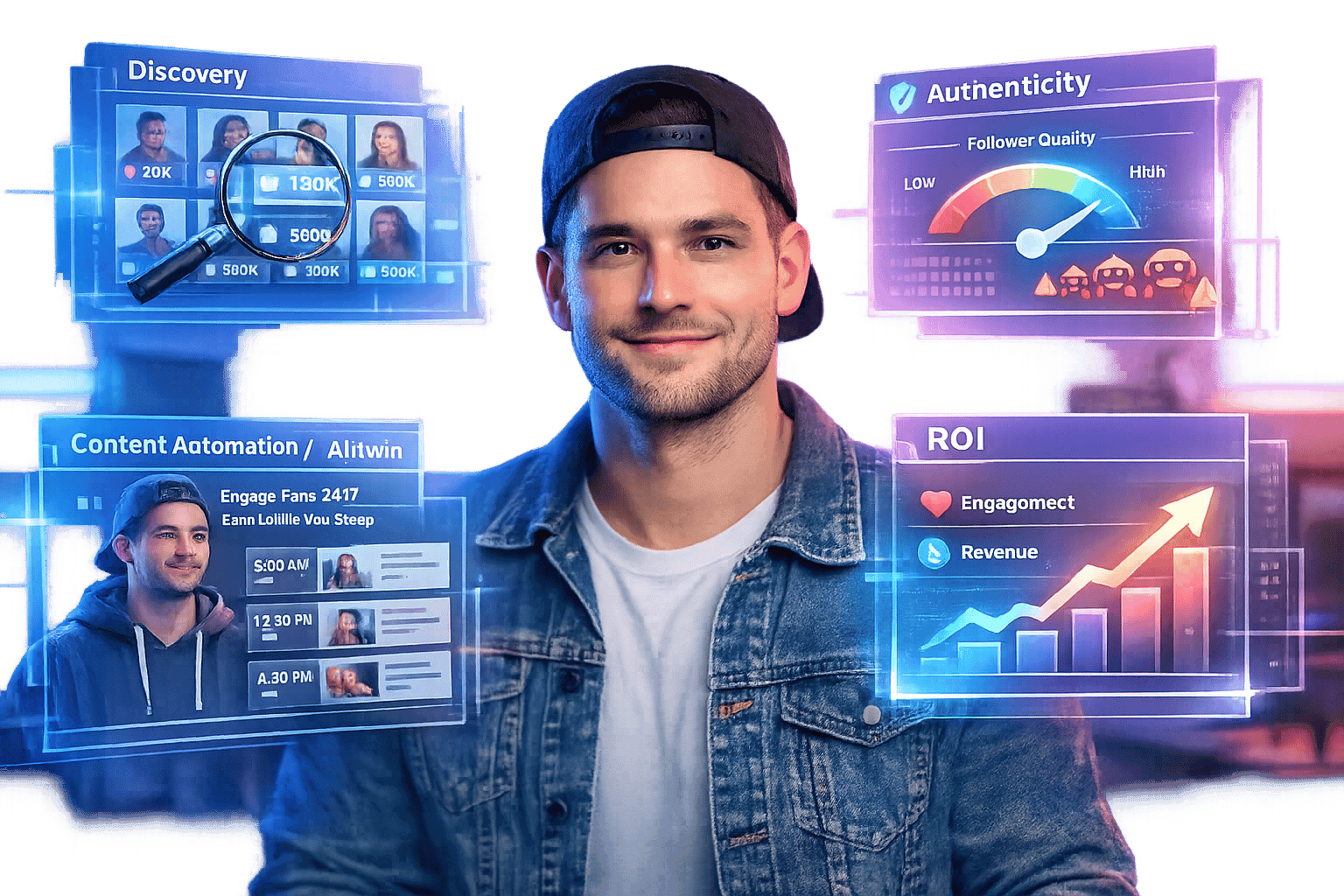

TwinTone and Creator AI Twins

The way brands collaborate with creators is shifting, thanks to AI-powered tools like TwinTone. This platform turns real-life creators into AI Twins - digital versions of themselves that can produce user-generated content (UGC) videos, host AI-driven livestreams, and supercharge social commerce. With these AI Twins, brands can skip the usual back-and-forth coordination and instantly access product demos, shoppable videos, and round-the-clock content.

What makes these AI Twins stand out? They’re not your average chatbots. Each AI Twin retains the creator’s unique voice and style, enabling brands to generate a steady stream of content for platforms like TikTok, Amazon, YouTube, and Shopify, from product demonstrations to AI-driven live shopping events. With API integration, companies can scale content creation across entire product lines, and with support for over 40 languages, brands can expand globally without needing separate creators for every market.

This technology also tackles a common hurdle in marketing: the "Not for Me" problem - the disconnect between mass marketing and individual preferences. Rajesh Jain, who founded a platform for personalized AI agents, explains it best:

"MyTwin creates a personalised AI agent that learns directly from conversations with the customer... acting as an intelligent interface between individual desires and brand offerings".

By blending personalized creator engagement with scalable social commerce, this approach delivers a more tailored experience for consumers.

Emotional Personalization in Social Commerce

Taking AI Twins a step further, emotional feedback algorithms are transforming them into "Conversion Companions." These advanced systems analyze live browsing behavior and past preferences to offer hyper-relevant recommendations. Unlike older chatbots that simply respond to keywords, these emotionally aware AI models adjust their tone and interaction style based on psychological traits like agreeableness or neuroticism.
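A minimal sketch of trait-based tone adjustment follows. It assumes Big Five scores for agreeableness and neuroticism, normalized to 0 to 1, are already available from some upstream profile, and maps them linearly onto three hypothetical tone settings. The coefficients are illustrative guesses, not values from any study or product.

```python
from dataclasses import dataclass


@dataclass
class ToneSettings:
    warmth: float       # 0 = businesslike, 1 = very warm
    reassurance: float  # how much the reply reassures before recommending
    directness: float   # how quickly the reply gets to a recommendation


def clamp(x: float, lo: float = 0.0, hi: float = 1.0) -> float:
    return max(lo, min(hi, x))


def tone_from_traits(agreeableness: float, neuroticism: float) -> ToneSettings:
    """Map Big Five scores (normalized to 0..1) onto tone settings.

    The linear coefficients are illustrative assumptions, not published values.
    """
    return ToneSettings(
        warmth=clamp(0.3 + 0.6 * agreeableness),
        reassurance=clamp(0.2 + 0.7 * neuroticism),
        directness=clamp(0.8 - 0.5 * neuroticism),
    )


calm_shopper = tone_from_traits(agreeableness=0.4, neuroticism=0.1)
anxious_shopper = tone_from_traits(agreeableness=0.4, neuroticism=0.9)
```

A downstream prompt builder or template engine would then read these settings, for instance leading with more reassurance for a shopper who scores high on neuroticism.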

Research shows that emotionally adaptive AI strengthens parasocial connections - those one-sided relationships audiences form with creators - and boosts conversions. Joy Weru from Kennesaw State University highlights the significance of these connections:

"Parasocial relationships have been found to impact an influencer's credibility, by mimicking two way familial, platonic, and sometimes even romantic relationships, thus gauging how marketable they are".

The commercial impact can be substantial. During Singles Day in November 2023, for example, JD.com used AI-powered livestream hosts to generate over $420,000 in GMV in a single day. These systems don’t just automate content; they create experiences that feel personal and emotionally engaging, even at scale.


Challenges and Future Directions

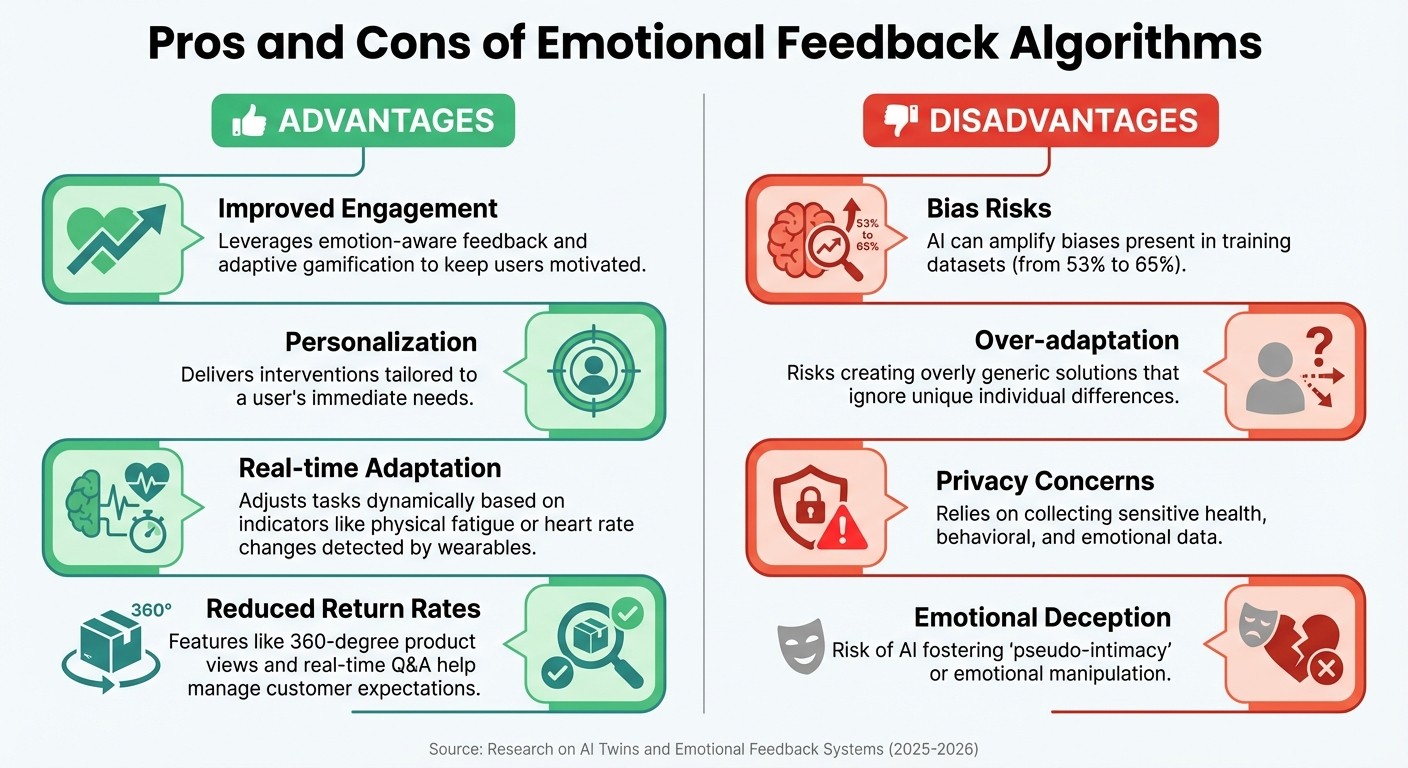

Advantages and Disadvantages of Emotional Feedback Algorithms in AI Systems

Pros and Cons of Emotional Feedback Algorithms

Emotional feedback algorithms offer exciting possibilities for social commerce, but they also carry significant risks. Their main strength is personalization: they adapt responses to individual psychological profiles and to real-time physical signals, such as heart-rate spikes captured by wearables. In e-commerce, AI-assisted features like 360-degree product views and instant Q&A sessions can also help reduce return rates by setting more accurate customer expectations. These benefits, however, come with challenges that warrant closer examination.

| Advantages | Disadvantages |
|---|---|
| Improved Engagement: Leverages emotion-aware feedback and adaptive gamification to keep users motivated. | Bias Risks: AI can amplify biases present in training datasets. |
| Personalization: Delivers interventions tailored to a user's immediate needs. | Over-adaptation: Risks creating overly generic solutions that ignore unique individual differences. |
| Real-time Adaptation: Adjusts tasks dynamically based on indicators like physical fatigue or heart rate changes detected by wearables. | Privacy Concerns: Relies on collecting sensitive health, behavioral, and emotional data. |
| Reduced Return Rates: Features like 360-degree product views and real-time Q&A help manage customer expectations. | Emotional Deception: Risk of AI fostering "pseudo-intimacy" or emotional manipulation. |

One of the biggest challenges is the potential for bias amplification. AI systems trained on slightly biased human data can not only replicate but also intensify those biases. For instance, research reveals that an AI system trained on data with just 53% bias could amplify that bias to over 65%. As a study published in Nature Human Behaviour explains:

"AI systems may be more sensitive to minor biases in the data than humans due to their expansive computational resources and may therefore be more likely to leverage them to improve prediction accuracy, especially when the data are noisy".

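A toy example shows why an accuracy-maximizing model can overshoot a slight human bias. Suppose people label a set of ambiguous stimuli "sad" anywhere from 33% to 73% of the time, averaging 53%. A model that always predicts each stimulus’s majority label agrees with annotators as often as possible, yet it calls every stimulus that leans even slightly sad "sad". This is a simplified illustration of the mechanism the quote describes, not a reproduction of the study’s experiment; the stimulus values are hypothetical.

```python
from typing import List


def human_sad_share(p_sad_percent: List[int]) -> float:
    """Average share of 'sad' labels people give across all stimuli."""
    return sum(p_sad_percent) / (100 * len(p_sad_percent))


def model_sad_share(p_sad_percent: List[int]) -> float:
    """Share of stimuli an accuracy-maximizing model labels 'sad'.

    Predicting each stimulus's majority human label maximizes expected
    agreement with annotators, so any stimulus leaning even slightly
    'sad' (over 50%) is always labeled 'sad'.
    """
    return sum(1 for p in p_sad_percent if p > 50) / len(p_sad_percent)


# Hypothetical stimuli: humans call each one "sad" between 33% and 73% of the time.
stimuli = list(range(33, 74))  # 41 stimuli, mean 53%
print(round(human_sad_share(stimuli), 3), round(model_sad_share(stimuli), 3))  # 0.53 0.561
```

The effect grows when the lean is uniform: if every stimulus were labeled "sad" 53% of the time, the majority rule would output "sad" for all of them.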
Privacy concerns are another critical issue. Handling intimate behavioral and emotional data securely is essential. Additionally, these systems risk creating "pseudo-intimacy", where users may feel a false sense of connection. Jie Wu from Renmin University of China cautions:

"The advancement of emotional AI should not only focus on technological innovation and subjective human experiences but also fully consider its impact on human social interaction paradigms".

Future Developments in AI Twins

The next wave of emotional feedback technology is shaping the evolution of AI Twins. These systems are advancing beyond simple chatbots, aiming to more closely replicate human thought processes. A standout innovation is the application of Global Neuronal Workspace Theory (GNWT) architectures, which utilize parallel processing with specialized sub-agents for tasks like emotion, memory, social norms, planning, and goal-tracking. Early tests show promise, with GNWT-based agents achieving a 72% correlation with human attraction patterns in social simulations.

Future AI Twins will incorporate voice interfaces, motion sensors, and eye tracking to capture a user's full emotional and physical state. This transition from text-based interactions to high-fidelity avatars with cloned voices is expected to deepen emotional connections while striving to maintain a sense of authenticity. Developers are also exploring new ways to reduce bias, such as adventure-based personality tests that assess behavioral choices in interactive scenarios, moving beyond traditional self-reported surveys that are often skewed by self-presentation bias.

As these technologies grow, balancing innovation with ethical considerations becomes critical. Safeguarding sensitive data will require tools like federated learning and encryption. Transparent audit trails will also be necessary to explain how AI decisions are made, ensuring users understand the mechanics of emotional feedback without feeling manipulated. Expanding these systems into global markets will demand careful refinement of emotion detection models to account for diverse emotional expressions across different cultural contexts.
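Federated learning keeps raw data on the user’s device and sends only model updates to a server. The server-side aggregation step of federated averaging (FedAvg) can be sketched as a dataset-size-weighted mean of client parameters. Local training, transport, and encryption are omitted here, and flat lists of floats stand in for real model tensors.

```python
from typing import List, Sequence


def federated_average(
    client_weights: Sequence[Sequence[float]],
    client_sizes: Sequence[int],
) -> List[float]:
    """FedAvg-style aggregation: average client parameters, weighted by local dataset size.

    Only parameters reach the server; the raw emotional data stays on each device.
    """
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need one dataset size per client and at least one client")
    total = sum(client_sizes)
    if total <= 0:
        raise ValueError("total sample count must be positive")
    dim = len(client_weights[0])
    if any(len(w) != dim for w in client_weights):
        raise ValueError("all clients must share the same parameter shape")
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


print(federated_average([[1.0, 0.0], [3.0, 2.0]], [100, 300]))  # [2.5, 1.5]
```

Federated averaging on its own does not guarantee privacy, because model updates can still leak information about the data behind them; deployments typically pair it with secure aggregation or differential privacy.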

Conclusion

AI Twins and emotional feedback algorithms are reshaping how brands connect with consumers in the world of social commerce. These technologies have come a long way from simple chatbots, now leveraging advanced neurocognitive frameworks to deliver real-time, personalized interactions based on individual user behavior and preferences.

We can already see these advancements in action through partnerships like The Fresh Market and Firework. By late 2023, their collaboration introduced an AI-driven solution that allowed customers to ask product-related questions and receive instant responses even after live shopping events. As Kevin Miller, CMO of The Fresh Market, noted:

"With Firework's generative AI technology, we can be certain that customers will receive prompt, friendly and personalized support whenever they choose to engage with our video commerce content".

This innovation ensures that the value of live shopping events extends beyond their scheduled times, creating ongoing opportunities for customer engagement.

Another standout example is TwinTone. By turning real creators into AI Twins, the platform generates on-demand user-generated content (UGC) videos and powers AI-hosted livestreams. This lets brands retain the authenticity of creator voices while operating around the clock across platforms, offering immediate product demos and shoppable videos that eliminate delays and streamline the customer journey.

However, these advancements are not without challenges. Emotionally manipulative design can boost engagement dramatically (the farewell-message tactics described earlier increased post-session engagement by up to 14 times), but it also raises perceived manipulation, on top of broader concerns about bias and privacy. Striking a balance between innovation and ethical responsibility will be critical as these technologies evolve. Transparency and robust data security must go hand in hand with personalization to maintain consumer trust.

Ultimately, the brands that will thrive are those that use AI Twins not just for operational efficiency, but to craft meaningful, personalized experiences while safeguarding user autonomy and privacy. This blend of cutting-edge technology and a human-centered approach is shaping the future of social commerce.

FAQs

How do AI Twins use emotional feedback to create better interactions?

AI Twins take customer interactions to a whole new level by picking up on emotional cues like tone of voice, facial expressions, and even the sentiment behind text. Thanks to advanced algorithms, they adjust their responses on the fly - whether that means softening their tone, tweaking a product suggestion, or offering a more empathetic reply. The result? A more tailored and engaging experience that feels genuinely personal.

What’s even better is that every interaction helps these AI Twins get smarter. Emotional feedback is recorded and used to refine their behavior, ensuring future responses are more in tune with the user’s preferences. Combine this with data like purchase history and individual traits, and AI Twins can anticipate a shopper’s needs, delivering spot-on recommendations at just the right moment. This blend of emotional intelligence and predictive insights transforms interactions into impactful social-commerce opportunities.
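One simple way to let each interaction refine a profile is an exponential moving average of emotional reactions per product category. The sketch assumes reactions have already been scored on a -1 to 1 scale by an upstream emotion detector; the class name, the scale, and `alpha` are hypothetical choices.

```python
from collections import defaultdict
from typing import Dict, Optional


class PreferenceProfile:
    """Running per-category affinity built from emotional reactions.

    Each reaction is a score in [-1, 1] (negative for frustration, positive
    for delight); an exponential moving average favors recent signals.
    """

    def __init__(self, alpha: float = 0.3):
        if not 0 < alpha <= 1:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.affinity: Dict[str, float] = defaultdict(float)  # unseen categories start at 0

    def record(self, category: str, reaction: float) -> None:
        prev = self.affinity[category]
        self.affinity[category] = (1 - self.alpha) * prev + self.alpha * reaction

    def top_category(self) -> Optional[str]:
        if not self.affinity:
            return None
        return max(self.affinity, key=self.affinity.get)


profile = PreferenceProfile(alpha=0.5)
profile.record("sneakers", 1.0)   # delighted reaction
profile.record("jackets", -0.5)   # mild frustration
profile.record("sneakers", 0.6)
print(profile.top_category())  # sneakers
```

A larger `alpha` makes the profile react faster to recent moods, while a smaller one smooths over a single bad session. Because every category starts at 0, early estimates lean toward neutral until a few reactions accumulate.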

What ethical concerns do AI Twins face regarding bias and privacy?

AI Twins that use emotional feedback algorithms grapple with two major ethical hurdles: bias and privacy. These systems depend on extensive datasets to understand emotions, but when the data isn't representative, it can lead to skewed interpretations or even reinforce harmful stereotypes. For instance, people from underrepresented groups might encounter inaccurate emotional assessments or feel left out. This is particularly problematic in areas like social commerce, where biased recommendations could alienate certain consumers.

Privacy poses another significant challenge. Emotional-feedback systems often gather highly sensitive information - like voice inflections, facial expressions, and biometric data. Without robust consent and data protection measures, this data could be exploited for manipulation or invasive profiling. To tackle these concerns, companies need to prioritize transparency, limit data collection to what's absolutely necessary, and obtain clear, explicit consent from users before using their emotional data.
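Data minimization and pseudonymization can be sketched together: keep only an allow-listed set of fields, discard raw signals such as face frames and heart rate, and replace the user ID with a keyed hash. The field names and the consent flag are hypothetical; the keyed hash uses Python’s standard `hmac` and `hashlib` modules.

```python
import hashlib
import hmac
from typing import Any, Dict

# Hypothetical allow-list: only these fields are kept from a raw event.
ALLOWED_FIELDS = {"emotion_label", "emotion_confidence", "product_id"}


def pseudonymize(user_id: str, secret_key: bytes) -> str:
    """Keyed SHA-256 hash so raw user IDs are not stored with emotional data."""
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize_event(event: Dict[str, Any], secret_key: bytes, consented: bool) -> Dict[str, Any]:
    """Drop everything except allow-listed fields; refuse to process without consent."""
    if not consented:
        raise PermissionError("no explicit consent for emotional data")
    record = {k: v for k, v in event.items() if k in ALLOWED_FIELDS}
    record["user"] = pseudonymize(event["user_id"], secret_key)
    return record


event = {
    "user_id": "alice",
    "raw_face_frames": ["<frame bytes>"],  # discarded
    "heart_rate": 88,                      # discarded
    "emotion_label": "joy",
    "emotion_confidence": 0.9,
    "product_id": "sku-123",
}
# In practice the key would come from a secrets store, not a literal.
print(minimize_event(event, b"demo-key", consented=True))
```

Pseudonymized records can still count as personal data under regulations such as the GDPR, so this reduces exposure rather than removing obligations, and the secret key must be stored separately from the data.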

How are AI Twins revolutionizing social commerce and customer engagement?

AI Twins are reshaping social commerce by delivering interactive, emotionally intelligent shopping experiences. TwinTone’s AI-driven Twins can create instant user-generated content (UGC) videos, host live shopping events, and respond in real time to audience feedback. By analyzing tone and comments, these Twins adapt product demonstrations, emphasize key features, and deliver tailored calls-to-action, keeping viewers engaged and minimizing drop-offs during live sessions.
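A minimal sketch of comment-driven adaptation follows: score each chat comment, keep a rolling window of recent scores, and suggest a pivot when the average turns negative. The word lists, window size, threshold, and suggestion text are hypothetical. A real deployment would use a trained sentiment model rather than a tiny lexicon, and this is not TwinTone’s implementation.

```python
from collections import deque
from typing import Deque, Optional

# Tiny hypothetical lexicon, for illustration only.
POSITIVE = {"love", "great", "want", "amazing", "buy"}
NEGATIVE = {"expensive", "boring", "bad", "confusing", "skip"}


def comment_score(comment: str) -> int:
    """+1 per positive word, -1 per negative word."""
    words = [w.strip(".,!?").lower() for w in comment.split()]
    return sum((w in POSITIVE) - (w in NEGATIVE) for w in words)


class ChatMoodMonitor:
    """Tracks sentiment over the last `window` comments and suggests a pivot."""

    def __init__(self, window: int = 20, threshold: float = -0.3):
        self.scores: Deque[int] = deque(maxlen=window)  # oldest scores drop off
        self.threshold = threshold

    def add(self, comment: str) -> Optional[str]:
        self.scores.append(comment_score(comment))
        avg = sum(self.scores) / len(self.scores)
        if avg <= self.threshold:
            return "pivot: address objections (price, features) or switch product"
        return None


monitor = ChatMoodMonitor(window=3)
for c in ["This is amazing!", "Too expensive", "boring, skip"]:
    print(monitor.add(c))
```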

Beyond hosting events, these AI Twins function as "digital twins" for individual shoppers. They use past purchase history and live data to predict preferences and offer personalized product recommendations. This approach allows brands to provide tailored suggestions and even dynamic pricing in a way that feels conversational and natural. With their ability to deliver creator-like interactions around the clock, AI Twins help brands strengthen customer engagement, build deeper connections, and drive revenue growth across the U.S. market.