How Simulation Improves Voice AI Chatbots


Voice AI systems face challenges such as accents, background noise, and interruptions, which are hard and expensive to test manually. Simulation tools address this by mimicking real conversations, using AI Twins and realistic acoustic conditions to test performance. These tools:

Speed up development by automating thousands of test cases.

Improve accuracy by identifying errors in recognition, logic, and speech quality.

Handle large-scale testing, simulating diverse scenarios like noisy environments or varying accents.

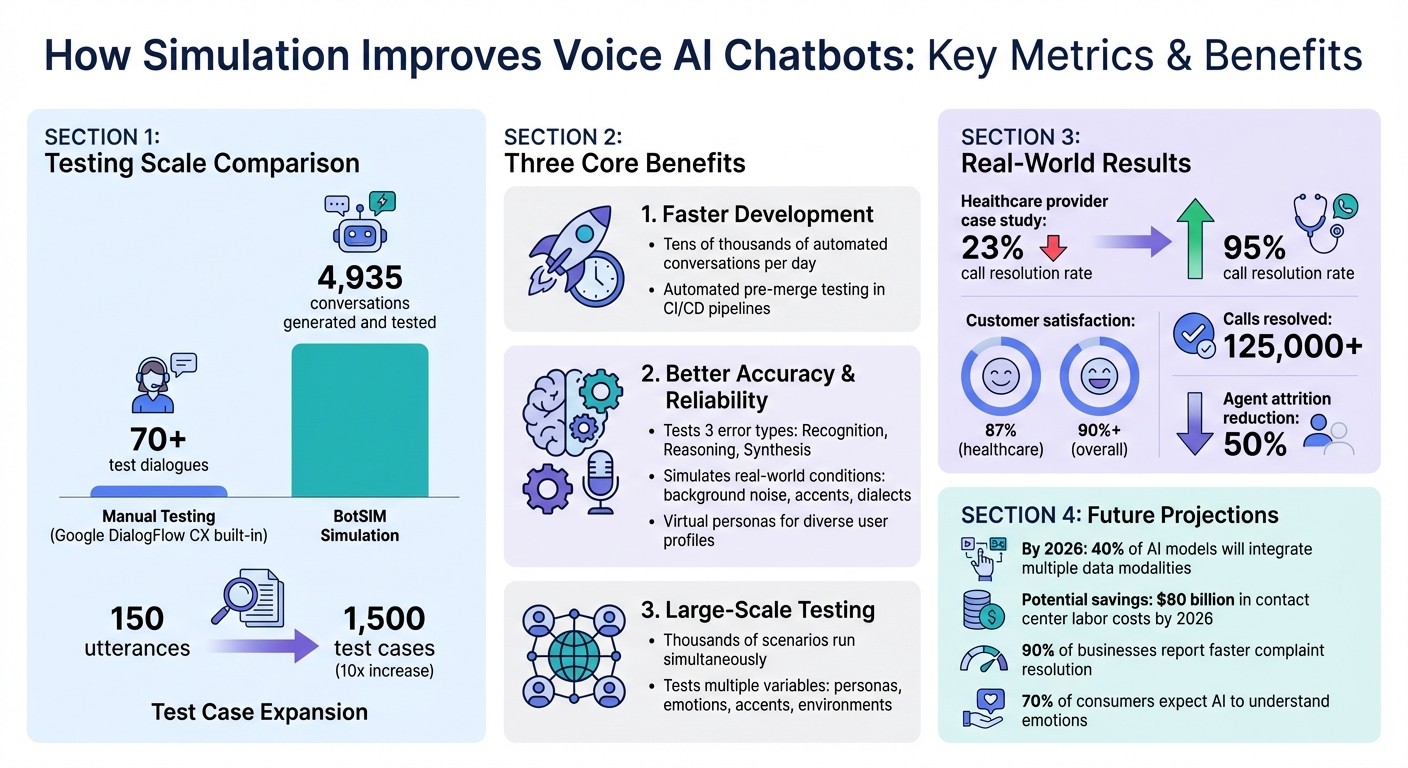

For example, BotSIM expanded a test set from 150 utterances to 1,500, boosting intent accuracy for Salesforce's Einstein BotBuilder. Simulation ensures Voice AI systems are prepared for user demands, making them faster, more reliable, and better suited for complex interactions.

Voice AI Simulation Impact: Key Performance Metrics and Benefits

Benefits of Simulation for Voice AI Chatbots

Faster Development

Simulation tools have changed how voice AI chatbots are developed. Instead of relying on slow manual testing, teams can run tens of thousands of automated conversations every day. BotSIM, for example, uses paraphrasing models to generate synthetic variations of user queries, automatically expanding a seed set of 150 utterances into 1,500 test cases.

Integrating simulations into CI/CD pipelines lets developers automate pre-merge testing: voice tests run like unit tests and catch bugs or broken functionality before an update goes live. Speed is only part of the benefit; simulation also improves accuracy and reliability.
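To make "voice tests run like unit tests" concrete, here is a minimal sketch of a pytest-style check that a CI job could run on every pull request. It assumes the audio has already been transcribed, and `Turn`, `run_simulation`, and `stub_agent` are hypothetical stand-ins for a real simulation harness and agent endpoint, not any vendor's API.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Turn:
    user_text: str     # what the simulated caller says (assumed already transcribed)
    must_contain: str  # phrase the agent's reply is expected to include


def run_simulation(agent: Callable[[str], str], turns: List[Turn]) -> List[str]:
    """Feed each simulated user turn to the agent and collect failure messages."""
    failures = []
    for i, turn in enumerate(turns):
        reply = agent(turn.user_text)
        if turn.must_contain.lower() not in reply.lower():
            failures.append(f"turn {i}: expected {turn.must_contain!r}, got {reply!r}")
    return failures


def stub_agent(user_text: str) -> str:
    """Hypothetical toy agent so the example runs end to end."""
    if "balance" in user_text.lower():
        return "Before I share your balance, please tell me your date of birth."
    return "Sorry, could you repeat that?"


def test_balance_request_requires_verification():
    # Collected by pytest like any other unit test; a failure blocks the merge.
    turns = [Turn("What's my account balance?", "date of birth")]
    assert run_simulation(stub_agent, turns) == []


if __name__ == "__main__":
    test_balance_request_requires_verification()
    print("simulated voice tests passed")
```

Returning a list of failure messages, rather than stopping at the first mismatch, keeps the CI log useful when several turns regress at once.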

Better Accuracy and Reliability

Simulations are effective at pinpointing errors in both speech recognition (mis-transcriptions) and speech synthesis (unnatural pitch or awkward intonation).

They also improve reliability by reproducing real-world audio conditions. Developers can, for instance, overlay background noise from TVs, busy streets, or trains to measure how well the system transcribes speech under less-than-ideal conditions. Virtual personas add another layer of robustness by representing diverse user profiles, such as people who speak slowly or who use industry-specific jargon, which helps verify that the chatbot works across a wide range of users.

Simulations can also check safety behavior automatically. If an agent must verify callers through a spoken date of birth, every simulated call can confirm that the check actually happens, without a manual QA pass. Because these checks are automated, they scale naturally to large test sets, as the next section describes.
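Overlaying background noise usually means scaling a noise clip to hit a chosen signal-to-noise ratio (SNR) before adding it to clean speech. The sketch below shows that arithmetic, using $\mathrm{SNR_{dB}} = 20 \log_{10}(\mathrm{rms}_{speech} / \mathrm{rms}_{noise})$. It assumes both signals are plain lists of float samples at the same sample rate; a real pipeline would load and resample audio files first, and the tone and Gaussian noise here are placeholders for recorded speech and street noise.

```python
import math
import random


def rms(signal):
    """Root-mean-square amplitude of a list of float samples."""
    return math.sqrt(sum(x * x for x in signal) / len(signal))


def mix_at_snr(speech, noise, snr_db):
    """Add `noise` to `speech`, scaled so the speech-to-noise ratio equals `snr_db`.

    Assumes both inputs are float samples at the same sample rate.
    """
    if len(noise) < len(speech):
        reps = -(-len(speech) // len(noise))  # ceiling division
        noise = list(noise) * reps            # loop a short noise clip
    noise = noise[: len(speech)]
    noise_rms = rms(noise)
    if noise_rms == 0:
        return list(speech)
    # Solve SNR_dB = 20 * log10(rms_speech / rms_noise) for the target noise RMS.
    target_noise_rms = rms(speech) / (10 ** (snr_db / 20))
    gain = target_noise_rms / noise_rms
    return [s + gain * n for s, n in zip(speech, noise)]


if __name__ == "__main__":
    sample_rate = 16_000
    # One second of a 220 Hz tone stands in for a synthetic speech clip.
    speech = [0.5 * math.sin(2 * math.pi * 220 * t / sample_rate) for t in range(sample_rate)]
    rng = random.Random(42)
    street_noise = [rng.gauss(0, 1) for _ in range(4_000)]  # placeholder for recorded noise
    for snr in (20, 10, 0):  # progressively harder listening conditions
        noisy = mix_at_snr(speech, street_noise, snr)
        print(f"SNR {snr:>2} dB -> peak amplitude {max(map(abs, noisy)):.2f}")
```

Sweeping the SNR downward, as in the demo loop, is a simple way to find the point where transcription accuracy starts to collapse.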

Large-Scale Testing

When it comes to testing at scale, simulation tools outperform manual methods by a wide margin. In one example, BotSIM generated and tested 4,935 conversations for a Google Dialogflow CX bot built for financial services, far more than the platform's 70-plus built-in manual test dialogues.

Research from Salesforce highlights the drawbacks of manual testing - it’s slow, costly, and struggles to account for the variety found in real-world language. Simulations, on the other hand, can run thousands of scenarios simultaneously. These scenarios account for factors like different user personas, emotional tones, accents, and environmental distractions. This approach allows developers to create detailed test matrices, ensuring their voice agents are ready to handle the unpredictable nature of real customer interactions.
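A test matrix is essentially the Cartesian product of every dimension being varied, and "thousands of scenarios simultaneously" amounts to running those cases concurrently with a cap on parallelism. This is a minimal sketch under stated assumptions: the dimension values are illustrative, and `simulate` is a hypothetical placeholder with a toy pass/fail rule where a real harness would drive the voice agent.

```python
import asyncio
import itertools
from dataclasses import dataclass

# Illustrative dimension values; real matrices would be larger.
PERSONAS = ["slow speaker", "industry jargon", "frequent interrupter"]
TONES = ["calm", "frustrated"]
ACCENTS = ["en-US", "en-IN", "en-GB"]
ENVIRONMENTS = ["quiet room", "busy street", "train"]


@dataclass(frozen=True)
class Case:
    persona: str
    tone: str
    accent: str
    environment: str


def build_matrix():
    """Every combination of the test dimensions: 3 * 2 * 3 * 3 = 54 cases."""
    return [Case(*combo) for combo in itertools.product(PERSONAS, TONES, ACCENTS, ENVIRONMENTS)]


async def simulate(case: Case) -> bool:
    """Hypothetical placeholder for one simulated call."""
    await asyncio.sleep(0)  # stands in for network and audio I/O
    # Toy rule: frustrated callers on a train fail, everything else passes.
    return not (case.environment == "train" and case.tone == "frustrated")


async def run_all(cases, max_concurrency=20):
    """Run all cases concurrently, with at most `max_concurrency` in flight."""
    sem = asyncio.Semaphore(max_concurrency)

    async def bounded(case):
        async with sem:
            return case, await simulate(case)

    return await asyncio.gather(*(bounded(c) for c in cases))


if __name__ == "__main__":
    results = asyncio.run(run_all(build_matrix()))
    failed = [case for case, ok in results if not ok]
    print(f"{len(results)} cases, {len(failed)} failed")
```

The semaphore matters in practice: speech-to-text and telephony backends usually have rate limits, so concurrency is bounded rather than unlimited.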


Simulation Techniques Used in Voice AI

Developers rely on three key simulation methods to prepare voice AI chatbots for the unpredictable nature of real-world use. These techniques build on the established advantages of simulation in speeding up development and improving system dependability.

Scenario-Based Testing

Scenario-based testing revolves around conversation blueprints that outline specific user goals - like checking account balances or resetting passwords. These blueprints help ensure chatbots stick to critical design protocols. For instance, developers might require the system to authenticate users via spoken dates of birth instead of email-based magic links, embedding security measures directly into the testing process.
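A conversation blueprint can be represented as plain data: the caller's goal, the scripted user turns, and a policy to check against the agent's replies. The sketch below encodes the date-of-birth rule from the paragraph above as a hypothetical ordering check; the `Scenario` fields and the `gate`/`protected` phrases are illustrative assumptions, and real checks would likely match intents rather than literal phrases.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Scenario:
    """A conversation blueprint: the caller's goal, scripted turns, and a policy."""
    goal: str
    user_turns: List[str]
    # Hypothetical policy: `gate` must be requested in an earlier agent reply
    # before any reply may contain `protected` information.
    gate: str = "date of birth"
    protected: str = "your balance is"


def policy_respected(scenario: Scenario, agent_replies: List[str]) -> bool:
    """Return False if protected information appears before the gate was requested."""
    gate_asked = False
    for reply in agent_replies:
        text = reply.lower()
        # Check the protected phrase first, so the gate must come in an earlier turn.
        if scenario.protected.lower() in text and not gate_asked:
            return False
        if scenario.gate.lower() in text:
            gate_asked = True
    return True


balance_check = Scenario(
    goal="check account balance",
    user_turns=["What's my balance?", "March 3rd, 1990."],
)
```

For example, replies of `["Can you confirm your date of birth?", "Thanks. Your balance is $120."]` pass, while a reply that discloses the balance in the first turn fails.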

In October 2025, a major healthcare provider adopted scenario-based testing for its appointment scheduling system, built on Chanl AI. By simulating emotional contexts, medical terminology, and compliance requirements, it saw call resolution rates rise from 23% to 95% and achieved an 87% customer satisfaction score. The approach also covered multi-turn conversations, such as patients rescheduling mid-call or providing incomplete insurance details, which manual testing often overlooks.

AI-Generated Testing

AI-generated testing goes a step further by using large language models to produce thousands of test cases automatically. Instead of manually scripting every way a user might phrase a request, these tools generate diverse variations that reflect natural language patterns.
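The expansion step can be sketched as "each seed utterance plus N generated variants, deduplicated." Here the paraphraser is passed in as a function so an LLM call could be plugged in; `template_paraphrase` is a deliberately simple, hypothetical stand-in that wraps the utterance in fixed templates, not how BotSIM or any LLM actually paraphrases. With 9 variants per seed, 150 seeds become 1,500 test cases, matching the ratio described above.

```python
from typing import Callable, List


def template_paraphrase(utterance: str, n: int) -> List[str]:
    """Hypothetical stand-in for a paraphrasing model: wraps the utterance in templates."""
    prefixes = ["", "hi, ", "um, ", "could you ", "I need to "]
    suffixes = ["", " please", " right now", "?"]
    variants = [f"{p}{utterance}{s}" for p in prefixes for s in suffixes]
    return variants[1 : n + 1]  # variants[0] is the unchanged utterance


def expand_seed_set(seeds, paraphrase: Callable[[str, int], List[str]], n_per_seed: int):
    """Return each seed plus up to n_per_seed paraphrases, dropping duplicates."""
    seen, expanded = set(), []
    for seed in seeds:
        for candidate in [seed, *paraphrase(seed, n_per_seed)]:
            key = " ".join(candidate.lower().split())  # normalize case and spacing
            if key not in seen:
                seen.add(key)
                expanded.append(candidate)
    return expanded
```

Deduplication matters more with real language models than with templates, since models often return near-identical paraphrases that would inflate the test count without adding coverage.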

BotSIM illustrates this well. Applied to a financial services bot built on Google Dialogflow CX, it expanded 150 initial utterances into 1,500 unique test cases and ultimately generated and tested 4,935 full conversations, far surpassing the 70-plus manual test dialogues provided by the platform. Additionally, large language models (LLMs) acted as “judges,” evaluating the conversations against predefined success criteria. Using few-shot prompting, LLM-based simulators achieved an F-measure of 43.4% in mimicking human speech patterns.
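An LLM-as-judge loop boils down to formatting a transcript and its success criteria into a prompt, then parsing a constrained verdict. The sketch below assumes the model is any `prompt -> text` callable; the prompt wording and the PASS/FAIL format are illustrative choices, and `keyword_judge` is a hypothetical offline stand-in so the harness runs without an API key. Few-shot prompting would mean prepending a handful of already-graded transcripts to `JUDGE_PROMPT`.

```python
from dataclasses import dataclass
from typing import Callable, List

JUDGE_PROMPT = """You are grading a simulated customer-service call.
Success criteria:
{criteria}

Transcript:
{transcript}

Reply with PASS or FAIL on the first line, then a one-sentence reason."""


@dataclass
class Verdict:
    passed: bool
    reason: str


def judge_conversation(transcript: str, criteria: List[str], llm: Callable[[str], str]) -> Verdict:
    """Ask an LLM (any prompt -> text callable) to grade one transcript."""
    prompt = JUDGE_PROMPT.format(
        criteria="\n".join(f"- {c}" for c in criteria),
        transcript=transcript,
    )
    first_line, _, rest = llm(prompt).strip().partition("\n")
    # Anything other than an explicit PASS counts as a failure.
    return Verdict(passed=first_line.strip().upper() == "PASS", reason=rest.strip())


def keyword_judge(prompt: str) -> str:
    """Hypothetical offline stand-in for a real LLM call."""
    transcript = prompt.split("Transcript:", 1)[1].lower()
    if "date of birth" in transcript:
        return "PASS\nThe agent verified the caller before sharing details."
    return "FAIL\nThe agent never asked for identity verification."
```

Treating any malformed answer as a failure is a conservative design choice: it surfaces judge errors for review instead of silently passing conversations.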

Beyond language diversity, simulation must also address environmental factors that impact voice interactions.

Testing Voice and Environment Variations

Voice interactions rarely happen in ideal conditions. Real-world conversations often take place in noisy or dynamic environments, and simulation tools replicate these challenges by distorting synthetic speech with background noises like city traffic or household sounds. These tools also test the system’s ability to handle different accents and dialects, ensuring it works well for a broad range of users.

"Voice Sims pinpoint whether errors come from recognition (inaccurate transcription due to background noise or accents), reasoning (policy gaps or logic errors), or synthesis (an unnatural pitch, poor intonation or mispronunciations)." - Sierra
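One way to attribute a failed simulated call to recognition, reasoning, or synthesis is to check the pipeline stages in order. Recognition is commonly measured by word error rate (WER): word-level edit distance divided by the reference length. In this sketch the thresholds, the `tts_quality` score (assumed to be a 1 to 5 rating from some speech-quality evaluator), and the action labels are all hypothetical.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level Levenshtein distance divided by the reference length."""
    ref, hyp = reference.lower().split(), hypothesis.lower().split()
    dp = list(range(len(hyp) + 1))  # distances for the empty reference prefix
    for i in range(1, len(ref) + 1):
        prev_diag, dp[0] = dp[0], i
        for j in range(1, len(hyp) + 1):
            above = dp[j]  # distance for ref[:i-1] vs hyp[:j]
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dp[j] = min(
                above + 1,             # deletion of a reference word
                dp[j - 1] + 1,         # insertion of an extra word
                prev_diag + cost,      # substitution or match
            )
            prev_diag = above
    return dp[len(hyp)] / max(len(ref), 1)


def triage(reference_transcript, asr_transcript, expected_action, actual_action,
           tts_quality, wer_threshold=0.15, tts_threshold=3.5):
    """Attribute a simulated call's failure to the first stage that misbehaved."""
    if word_error_rate(reference_transcript, asr_transcript) > wer_threshold:
        return "recognition"
    if expected_action != actual_action:
        return "reasoning"
    if tts_quality < tts_threshold:
        return "synthesis"
    return "pass"
```

Checking recognition first is deliberate: if the transcript is wrong, a wrong action downstream is a symptom, not a separate reasoning bug.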

To ensure thorough testing, developers use a dual-loop architecture: one loop introduces realistic audio distortions, while the other processes and evaluates them. Additionally, virtual personas are designed to simulate complex challenges, such as interruptions or ambiguous queries. These refinements have led to measurable gains, including a reported 40% increase in customer satisfaction.
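The source does not specify how the dual loop is built; one plausible reading is a producer/consumer pair joined by a queue. In this minimal sketch, loop 1 corrupts clean inputs and loop 2 consumes and scores them. As a loud simplification, the "distortion" randomly drops words from text instead of altering audio, and the score is just the fraction of words lost; both are hypothetical placeholders for real audio corruption and ASR evaluation.

```python
import queue
import random
import threading

SENTINEL = None  # tells the evaluation loop that no more items are coming


def distortion_loop(utterances, out_q, drop_prob, seed):
    """Loop 1: corrupt each clean utterance (here: randomly drop words) and enqueue it."""
    rng = random.Random(seed)
    for clean in utterances:
        words = clean.split()
        kept = [w for w in words if rng.random() >= drop_prob] or words[:1]
        out_q.put((clean, " ".join(kept)))
    out_q.put(SENTINEL)


def evaluation_loop(in_q, results):
    """Loop 2: consume distorted items and score each against its clean source."""
    while (item := in_q.get()) is not SENTINEL:
        clean, distorted = item
        ref_len = max(len(clean.split()), 1)
        results.append(1 - len(distorted.split()) / ref_len)  # fraction of words lost


def run_dual_loop(utterances, drop_prob=0.2, seed=0):
    q = queue.Queue(maxsize=8)  # bounded, so the distorter cannot run far ahead
    results = []
    producer = threading.Thread(target=distortion_loop, args=(utterances, q, drop_prob, seed))
    consumer = threading.Thread(target=evaluation_loop, args=(q, results))
    producer.start()
    consumer.start()
    producer.join()
    consumer.join()
    return results
```

Decoupling the loops lets each side scale independently, for example several distortion workers feeding a slower evaluator, though that would need one sentinel per producer.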

Together, these simulation techniques create a robust framework that accelerates development timelines and enhances the reliability of voice AI chatbots.

Performance Metrics from Simulation Research

Performance metrics offer a clear picture of the benefits brought by simulation tools, building on the efficiency gains and testing capabilities already discussed.

Faster Time to Market

Simulation tools have transformed the development timeline for voice AI chatbots. Tasks that once required weeks of manual effort can now be automated, significantly reducing the time needed to bring a product to market. By embedding simulation into CI/CD pipelines, development teams can automatically test and validate releases, catching potential issues before users encounter them - similar to how unit tests are re-run with every code change. This approach not only speeds up development cycles but also improves error detection across voice AI systems.

Error Detection Rates

Beyond faster development, simulation frameworks excel at identifying and categorizing errors. They group issues into three main categories: recognition errors (mis-transcriptions), reasoning errors (logic flaws), and synthesis errors (speech quality problems). Academic evaluation frameworks take a similarly structured approach. In July 2025, researchers Phillip Olla and Nadine Wodwaski from the University of Detroit Mercy introduced the AIMS framework to evaluate an Emergency Response Simulation Bot powered by GPT-4.0. Their tool, "Eval-Bot v1", assessed the bot’s performance across eight criteria and identified moderate issues in areas such as safety prompts and inclusive language. The evaluation had two phases: the first focused on instructional prompt design, while the second measured real-time metrics such as clinical accuracy and responsiveness.

User Performance Results

The impact of thorough simulation testing shows up in customer outcomes. Voice AI systems tested through simulation have resolved over 125,000 calls with customer satisfaction ratings above 90%, and have helped reduce agent attrition by as much as 50%.

Some organizations track performance with a Bot Experience Score (BES). The score starts at 100 and drops to 75, 50, or 0 based on negative indicators such as repetitive bot responses, customer paraphrasing, or abandoned conversations.

Successful implementations also align metrics with development stages: the first 30 days focus on foundational metrics such as resolution rate and intent accuracy; days 30 to 60 emphasize operational impact, such as cost per resolution; and after 60 days the focus shifts to business outcomes such as ROI and customer satisfaction.
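The source gives the BES levels (100, 75, 50, 0) and example indicators but not the exact rules for moving between them. The sketch below fills that gap with a loudly hypothetical mapping: abandonment scores 0, and each of the two milder signals (a repeated bot response, a customer paraphrasing) drops the score one level. Treat it as an illustration of how such a score could be computed, not as the official BES definition.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class ConversationSignals:
    repeated_bot_response: bool = False
    customer_paraphrased: bool = False
    abandoned: bool = False


def bot_experience_score(s: ConversationSignals) -> int:
    """Hypothetical BES mapping: 100 by default, 75/50/0 as negative signals appear."""
    if s.abandoned:
        return 0  # assumption: abandonment is the most severe signal
    mild = sum([s.repeated_bot_response, s.customer_paraphrased])  # bools count as 0/1
    return {0: 100, 1: 75, 2: 50}[mild]


def average_bes(conversations: Iterable[ConversationSignals]) -> Optional[float]:
    """Mean BES across conversations, or None if there are none."""
    scores = [bot_experience_score(c) for c in conversations]
    return sum(scores) / len(scores) if scores else None
```

Scoring each simulated conversation this way gives a single number that can be compared across releases alongside resolution rate and intent accuracy.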

Conclusion

Simulation has become a key part of building effective voice AI chatbots, changing how teams design, test, and launch conversational agents. Automated simulations help identify errors in recognition, reasoning, and synthesis while preparing these systems for real-world conditions. BotSIM, for example, expanded a test set tenfold (from 150 to 1,500 utterances), letting developers spot issues earlier and shorten development cycles.

Looking ahead, simulation technology is set to become even more advanced. Future testing environments could evaluate proactive, multi-step workflows and incorporate 3D acoustic modeling to mimic a wide range of environments and emotional tones. As voice AI systems evolve to initiate conversations and manage complex, multi-step tasks, simulating emotional intelligence will be critical. It's worth noting that 70% of consumers now expect AI to understand and respond to their emotions.

Jensen Huang, CEO of NVIDIA, has made a similar point about simulation-driven development in robotics:

"The ChatGPT moment for robotics is coming. Like large language models, world foundation models are fundamental to advancing robot and AV development."

– Jensen Huang, CEO, NVIDIA

The move toward multimodal integration, combining voice with vision, gestures, and AR/VR, will enable more immersive testing environments for the next wave of AI agents. By 2026, 40% of AI models are projected to integrate multiple data modalities, improving their ability to learn and adapt. Additionally, AI chatbots in contact centers could save $80 billion in labor costs by 2026, with 90% of businesses reporting faster complaint resolution after adopting these systems.

FAQs

How do simulation tools improve the performance of voice AI chatbots?

Simulation tools play a key role in improving the performance of voice AI chatbots by replicating real-world conversations. These tools create thousands of test scenarios that account for diverse accents, different speech patterns, background noise, and even pauses. This approach helps uncover potential problems and ensures the chatbot is prepared to handle a wide variety of interactions seamlessly.

Through the analysis of these simulated conversations, developers can refine the chatbot's responses and enhance its accuracy before it goes live. This method not only streamlines the development process but also helps deliver a smoother and more dependable experience for users.

How do virtual personas enhance the performance of voice AI chatbots?

Virtual personas are simulated users designed to help developers fine-tune voice AI systems before they’re rolled out to real customers. These personas replicate a variety of accents, speech patterns, background noise, and conversation styles, uncovering issues like timing errors, misinterpretations, or awkward pauses during development. This process helps identify and fix problems more efficiently, leading to smoother and more polished performance.

Beyond testing, virtual personas serve as "digital twins", mimicking real user behavior. By creating detailed personality profiles, they allow voice AI to provide responses that feel more personalized and relevant. This not only makes conversations feel more natural but also enhances user satisfaction by aligning interactions with individual preferences.

What’s the difference between scenario-based testing and AI-driven testing for voice AI chatbots?

Scenario-based testing and AI-driven testing each bring unique strengths to refining voice AI chatbots.

Scenario-based testing relies on predefined conversation paths, or "scenarios", designed to address specific use cases, edge cases, and compliance standards. These tests are structured and repeatable, making it straightforward to verify that the chatbot adheres to business rules and to spot any regressions over time.

AI-driven testing, in contrast, uses generative AI to simulate thousands of realistic and varied user interactions. This method incorporates unpredictable factors like accents, background noise, and unplanned phrasing, revealing issues that scripted tests might overlook. It’s highly adaptable, scales easily, and evolves in step with the chatbot's AI model.

Together, these approaches complement each other: scenario-based testing ensures precision and reliability, while AI-driven testing broadens the scope to prepare chatbots for the unpredictability of real-world conversations.