Ethical AI for Personalized Content

How to build a future-proof relationship with AI

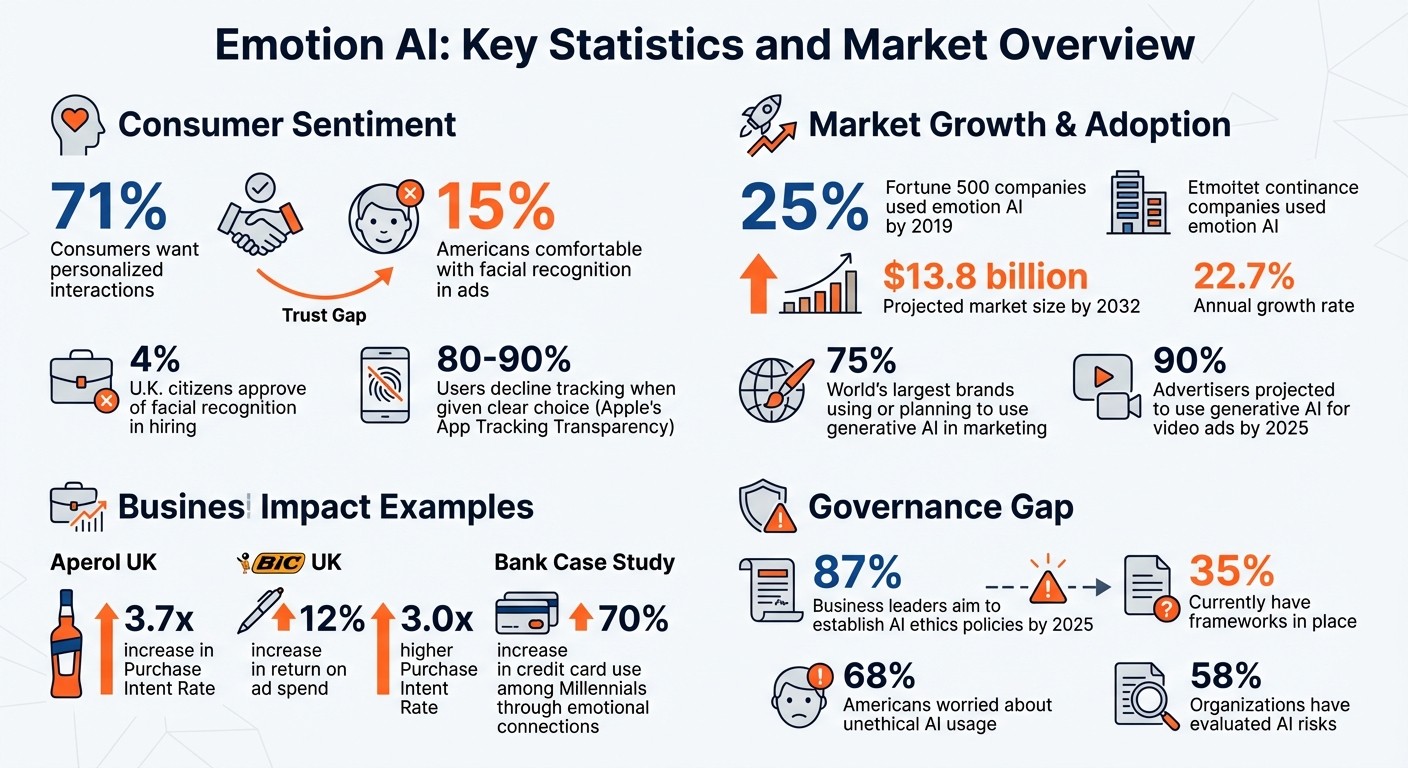

AI can personalize your experience, but at what cost? Emotion AI, which interprets human feelings through facial expressions, voice, and physiological signals, is reshaping industries like retail, advertising, and customer service. While 71% of consumers want personalized interactions, only 15% of Americans are comfortable with facial recognition in ads, highlighting a trust gap.

Businesses are leveraging emotion AI for mood-based recommendations, dynamic ads, and empathetic chatbots. However, ethical concerns - privacy risks, emotional manipulation, and bias - are significant. Recent regulations like the EU AI Act and U.S. state laws aim to address these challenges by enforcing transparency, consent, and data protection.

To use emotion AI responsibly:

Collect minimal data and ensure informed consent.

Be transparent about how AI analyzes emotions.

Test for and reduce bias in AI systems.

Give users control over their emotional data.

Emotion AI has potential, but its success depends on ethical implementation that prioritizes user autonomy and trust.

How Emotion AI Works in Personalized Content

Emotion AI works by interpreting human emotions through various detection methods. One of the most common techniques is facial recognition, which examines micro-expressions and subtle facial movements to identify feelings like happiness, frustration, or surprise. Another method, voice analysis, focuses on speech patterns - tone, rhythm, and pitch - to determine if someone sounds engaged, stressed, or joyful. Meanwhile, natural language processing (NLP) analyzes text-based interactions, such as messages or emoji use, to uncover the emotional context behind written communication.

Some advanced systems go even further by incorporating physiological signals like heart rate or typing patterns to create a more detailed emotional profile. A growing trend in the field is multimodal detection, which combines data from multiple sources - facial expressions, voice, and physiological signals - to improve accuracy and depth. This layered approach allows brands to move beyond basic personalization based on past behaviors, enabling them to deliver hyper-personalized experiences that respond to a user’s current emotional state.
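To make the idea of multimodal detection more concrete, here is a minimal sketch that fuses per-modality emotion scores with a weighted average (one simple form of what is often called late fusion). The modality names, weights, and emotion labels are hypothetical, and real systems may combine signals in quite different ways.

```python
from typing import Dict

# Hypothetical per-modality weights; a real system would tune or learn these.
MODALITY_WEIGHTS: Dict[str, float] = {"face": 0.5, "voice": 0.3, "text": 0.2}


def fuse_emotion_scores(scores: Dict[str, Dict[str, float]]) -> Dict[str, float]:
    """Weighted average of emotion scores across the modalities that are present."""
    present = {m: w for m, w in MODALITY_WEIGHTS.items() if m in scores}
    if not present:
        raise ValueError("no known modality in scores")
    total_weight = sum(present.values())
    fused: Dict[str, float] = {}
    for modality, weight in present.items():
        for emotion, score in scores[modality].items():
            # Renormalize so missing modalities do not shrink the result.
            fused[emotion] = fused.get(emotion, 0.0) + score * weight / total_weight
    return fused


def top_emotion(scores: Dict[str, Dict[str, float]]) -> str:
    """Label with the highest fused score."""
    fused = fuse_emotion_scores(scores)
    return max(fused, key=fused.get)
```

Because the weights are renormalized over whichever modalities are available, the sketch still produces a result when, for example, a user has declined camera access and only voice and text signals exist.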

The market for emotion AI is expanding rapidly. By 2019, at least 25% of Fortune 500 companies were already using these technologies, and the market is projected to hit $13.8 billion by 2032, with an annual growth rate of 22.7%. Additionally, three out of four of the world’s largest brands are either using or planning to implement generative AI in their marketing efforts. While the potential is vast, these advancements underscore the importance of ethical considerations.

Where Brands Use Emotion AI for Content

Emotion AI is finding applications across industries like retail, entertainment, advertising, and customer service. In e-commerce, retailers use emotion AI to tailor product recommendations based on a shopper's mood. For instance, if someone seems stressed, the system might suggest relaxing aromatherapy products. Streaming platforms like Netflix and Spotify also leverage this technology, curating content to match a user’s emotional state - offering comforting shows or upbeat playlists depending on how they feel.

In advertising, emotion AI helps craft personalized and emotionally engaging ad scripts by analyzing consumer reactions. Some systems even adjust ads dynamically to maximize impact. Shoppable content platforms take this concept further, turning emotional engagement into sales opportunities. For example, in 2024, Aperol UK launched a shoppable ad campaign across social media, video, and email channels. By collecting first-party data and optimizing in real time, they achieved a 3.7x increase in their Purchase Intent Rate, with in-feed ads generating twice as many purchase intent clicks as Reels. Similarly, BIC UK used first-party data to create targeted lookalike audiences, leading to a 12% increase in return on ad spend and a 3.0x higher Purchase Intent Rate on Meta platforms.

Customer service is another key area benefiting from emotion AI. Chatbots and virtual assistants powered by this technology can adapt their tone and responses based on whether a user seems frustrated or satisfied, making interactions feel more empathetic and human. This is particularly evident in AI messaging platforms for creator-fan interaction, where 24/7 engagement relies on maintaining a consistent emotional connection. These varied applications highlight the fine line between ethical personalization and manipulative practices.

Helpful Personalization vs. Emotional Manipulation

There’s a crucial distinction between personalization that helps users and tactics that exploit their emotions. Helpful personalization focuses on improving the user experience. For instance, a music app might play uplifting songs when it detects sadness, or an educational platform could adjust its pace based on a student’s engagement levels. These uses align with user goals without taking advantage of their vulnerabilities.

Manipulative practices, on the other hand, exploit emotional data to influence decisions in harmful ways. Examples include using eye-tracking technology to steer shoppers toward unnecessary, high-priced items, or targeting individuals with gambling addictions during moments of emotional vulnerability. The Federal Trade Commission (FTC) has addressed these concerns. Michael Atleson, an attorney at the FTC, has noted:

"The agency is targeting deceptive practices in AI tools, particularly chatbots designed to manipulate users' beliefs and emotions".

The European Union’s AI Act also tackles these issues by banning AI systems that employ "subliminal methods" or manipulative tactics that significantly alter behavior in harmful ways. It further restricts the use of emotion recognition in educational and workplace settings unless it serves clearly defined safety or healthcare purposes. Ethical emotion AI should empower users by enhancing their ability to make informed choices, not undermine their autonomy.

How to Implement Emotion AI Ethically

Ensuring that emotion AI operates ethically requires embedding safeguards throughout its entire lifecycle. According to the European Commission's Ethics Guidelines for Trustworthy AI, human agency and oversight must remain at the core of any AI system. This means incorporating human oversight mechanisms, whether through Human-in-the-Loop (HITL) or Human-on-the-Loop (HOTL) approaches, to maintain user autonomy. Additionally, conducting rights impact assessments and rigorous testing, like red teaming, can help identify potential risks before they escalate.

Data minimization plays a critical role in ethical implementation. Only collect and process emotional data that directly aligns with your stated objectives. Whenever possible, opt for anonymized, synthetic, or de-identified data instead of personal information. Access to sensitive emotional data should be restricted to qualified personnel with a legitimate need. Diversifying your development team with individuals from various backgrounds and disciplines can also help mitigate bias and improve the fairness of the system. These steps lay the groundwork for strong privacy measures.
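One way to picture data minimization in practice is a filter that keeps only the fields a stated purpose needs and swaps the raw user ID for a pseudonymous key. The sketch below assumes hypothetical purpose names and field names; it is an illustration, not a compliance tool.

```python
import hashlib
from typing import Any, Dict

# Hypothetical allowlist: which fields each stated purpose actually needs.
ALLOWED_FIELDS = {
    "support_tone_adaptation": {"voice_sentiment"},
    "mood_playlist": {"self_reported_mood"},
}


def pseudonymize(user_id: str, salt: str) -> str:
    """Replace the raw user ID with a salted SHA-256 digest."""
    return hashlib.sha256((salt + user_id).encode("utf-8")).hexdigest()


def minimize(record: Dict[str, Any], purpose: str, salt: str) -> Dict[str, Any]:
    """Keep only the fields allowlisted for the purpose, plus a pseudonymous key."""
    if purpose not in ALLOWED_FIELDS:
        raise ValueError(f"unknown purpose: {purpose}")
    kept = {k: v for k, v in record.items() if k in ALLOWED_FIELDS[purpose]}
    kept["subject"] = pseudonymize(record["user_id"], salt)
    return kept
```

Note that salted hashing is pseudonymization rather than anonymization, so the output may still count as personal data under GDPR and should be protected accordingly.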

Build Privacy Into Your System

Protecting sensitive emotional data starts with robust privacy practices. A cornerstone of this is informed consent. When gathering emotional data, ensure the consent process is clear, specific, and free of manipulative tactics, such as deceptive design patterns. Be upfront about what data you're collecting - whether it’s facial expressions, voice tone, or physiological signals - and how long you plan to retain it. Since emotion AI often deals with sensitive information like mental health indicators or biometric data, explicit opt-in consent is often required under laws like GDPR and various state regulations in the U.S.

Establish and publish clear retention schedules for securely deleting biometric and emotional data once it has served its purpose. For instance, Illinois's Biometric Information Privacy Act (BIPA) mandates written retention policies and informed consent. Transparency in data handling is crucial, especially as studies show that 80% to 90% of users decline tracking when given a clear choice, as seen with Apple’s App Tracking Transparency framework.
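A retention schedule can be enforced with a small scheduled job. The sketch below assumes hypothetical data categories and retention periods; the actual values would come from your published policy and applicable law.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List

# Hypothetical retention periods per data category; set these from your own policy.
RETENTION = {
    "facial_expression": timedelta(days=30),
    "voice_tone": timedelta(days=90),
}


@dataclass
class EmotionRecord:
    record_id: str
    category: str
    collected_at: datetime


def expired(records: List[EmotionRecord], now: datetime) -> List[str]:
    """IDs of records whose retention period has elapsed and should be deleted."""
    return [
        r.record_id
        for r in records
        if now - r.collected_at > RETENTION[r.category]
    ]
```

A record with a category that is missing from `RETENTION` raises a `KeyError` here, which is deliberate in this sketch: data with no defined retention period should be flagged rather than silently kept.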

Before processing personal data, conduct Data Protection Impact Assessments (DPIAs), particularly for systems involving automated decision-making or large-scale sensitive data. Assign Senior Responsible Owners (SROs) and data owners to oversee data quality and access controls. On the technical side, implement safeguards to prevent your AI from storing or outputting personally identifiable information (PII). Lastly, ensure users are well-informed about the AI’s role and limitations in their interactions.

Be Clear About How You Use AI

Transparency is key to building trust. The European Commission emphasizes:

"AI systems should not represent themselves as humans to users; humans have the right to be informed that they are interacting with an AI system".

Users should always know when emotion AI is analyzing their responses and how that data is being used to personalize their experience.

Provide detailed privacy notices that explain what data is being collected, how it’s processed, and its intended use. Avoid vague or overly technical language; instead, be specific about whether you’re analyzing facial expressions, voice patterns, or behavioral cues. Also, explain your automated decision-making processes in a way that aligns with your audience’s level of understanding.

Equally important is being upfront about the system’s limitations. Let users know the AI's accuracy level and its constraints in interpreting emotions. This openness prevents over-reliance on the technology and reduces the risk of misunderstandings about its capabilities. Regularly review your practices to ensure data isn’t misused for unauthorized purposes.

Test and Reduce Bias in Emotion AI

Bias in emotion AI can result in unfair or discriminatory outcomes. Past examples, such as biased hiring tools and facial recognition errors, highlight the risks.

To address this, implement a framework of "fairness explanations" that examines four key areas: dataset fairness (ensuring diverse representation in training data), design fairness (avoiding systemic favoritism), outcome fairness (ensuring equitable results), and implementation fairness (monitoring real-world performance). The Information Commissioner’s Office (ICO) states:

"Any processing of personal data using AI that leads to unjust discrimination between people, will violate the fairness principle".

Regular audits are essential to identify and address performance discrepancies across demographic groups. Where you process personal data such as demographic attributes for these audits, use it specifically to detect and mitigate bias, so that your system delivers accurate and equitable results. Treat DPIAs as ongoing evaluations rather than one-time tasks, and maintain meaningful human oversight to ensure the AI’s outcomes align with established ethical standards. These efforts reinforce a broader ethical framework, ensuring that emotion AI systems remain user-focused and fair.
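As a rough illustration of what an audit across demographic groups might compute, the sketch below measures classification accuracy per group and the gap between the best- and worst-served groups. The group names and labels are placeholders, and accuracy is only one of several fairness metrics an audit could use.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def accuracy_by_group(rows: Iterable[Tuple[str, str, str]]) -> Dict[str, float]:
    """rows are (group, true_label, predicted_label); returns accuracy per group."""
    correct: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for group, truth, predicted in rows:
        total[group] += 1
        correct[group] += int(truth == predicted)
    return {g: correct[g] / total[g] for g in total}


def max_accuracy_gap(per_group: Dict[str, float]) -> float:
    """Difference between the best- and worst-served groups."""
    return max(per_group.values()) - min(per_group.values())
```

A team could run this on a labeled evaluation set after every model update and block the release if the gap exceeds a threshold it has agreed on in advance.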

Setting Boundaries for Emotion AI Use

Even with strong privacy safeguards and bias testing in place, companies need to establish clear limits on how emotion AI is deployed. This prevents the technology from veering into manipulation or exploitation. The American Bar Association has cautioned that "Emotional AI, if not operated and supervised properly, can cause severe harm to individuals and subject companies to substantial legal risks".

Use Emotion AI Only for Its Intended Purpose

Emotion AI should be used exclusively to improve user experiences. For instance, when emotional data is leveraged to recommend calming content during stressful times, it fosters trust. However, using that same data to exploit emotions for profit can severely damage that trust.

The EU AI Act underscores this principle by banning emotion recognition in workplace and educational settings unless it's for specific safety or healthcare needs. This reflects a broader ethical stance: emotion AI must never be used to manipulate or control decisions, particularly in situations where there are power imbalances.

Another critical practice is updating privacy notices whenever emotional data is repurposed. For example, if voice tone data initially collected to enhance customer service is later used for churn prediction, this constitutes a new purpose. In such cases, updated privacy notices and renewed consent are required. The Federal Trade Commission (FTC) has been vigilant about these practices, as the statement from FTC attorney Michael Atleson quoted earlier makes clear.

Establishing these boundaries is essential for empowering users to maintain control over their data.
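The repurposing scenario above (voice tone data collected for customer service and later wanted for churn prediction) can be sketched as a purpose-bound consent check. The class and purpose names are hypothetical and stand in for whatever consent store a product actually uses.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class ConsentRecord:
    user_id: str
    # Purposes the user has explicitly opted in to (hypothetical purpose names).
    purposes: Set[str] = field(default_factory=set)

    def grant(self, purpose: str) -> None:
        self.purposes.add(purpose)

    def withdraw(self, purpose: str) -> None:
        self.purposes.discard(purpose)


def may_process(consent: ConsentRecord, purpose: str) -> bool:
    """Opt-in only: processing is allowed solely for purposes already granted."""
    return purpose in consent.purposes
```

Because the default is an empty set of purposes, a new use such as churn prediction is refused until the user has been shown an updated notice and has opted in.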

Give Users Control Over Their Emotional Data

Users need the ability to directly manage their emotional data through detailed settings that allow them to view, correct, or delete their information. Laws like GDPR and state regulations in Illinois, California, and Colorado classify emotional data as sensitive personal data (SPD). This means companies must obtain explicit opt-in consent rather than relying on passive opt-out mechanisms.

Make sure users can limit the use of their emotional data to what’s strictly necessary for the service they’re using. For instance, if someone uses an app to track their mood, they should have the option to prevent that data from being used for targeted advertising. Regulators are already enforcing this kind of accountability. In December 2023, the FTC settled with Rite Aid Corporation over discriminatory facial recognition practices. The settlement required Rite Aid to notify consumers, offer dispute options, and conduct rigorous bias testing - setting a precedent for fairness in algorithmic systems.

Additionally, users should have the right to challenge decisions made by emotion AI. For example, if an AI system determines someone is "unengaged" based on facial expressions and restricts their access to premium content, they should have the opportunity to appeal this decision with human oversight. This approach isn't just about legal compliance - it’s about honoring user autonomy and building trust that lasts.
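The view, correct, and delete controls described in this section might look like the following in code. This is a minimal in-memory sketch with hypothetical names; a real implementation would also have to cover backups, derived data, and copies held by third parties.

```python
from typing import Dict, List


class EmotionDataStore:
    """In-memory stand-in for wherever emotional data is actually stored."""

    def __init__(self) -> None:
        self._records: Dict[str, List[dict]] = {}

    def add(self, user_id: str, record: dict) -> None:
        self._records.setdefault(user_id, []).append(record)

    def view(self, user_id: str) -> List[dict]:
        """Return copies so callers cannot mutate stored data by accident."""
        return [dict(r) for r in self._records.get(user_id, [])]

    def correct(self, user_id: str, index: int, updates: dict) -> None:
        """Apply a user-supplied correction to one record."""
        self._records[user_id][index].update(updates)

    def delete_all(self, user_id: str) -> int:
        """Erase everything held for the user; returns how many records were removed."""
        return len(self._records.pop(user_id, []))
```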

Building Governance Systems for Emotion AI

Creating strong governance systems is essential for turning ethical data practices and bias reduction into actionable outcomes. Without formal governance, even companies with the best intentions may face unethical results. Consider this: while 87% of business leaders aim to establish AI ethics policies by 2025, only 35% currently have frameworks in place. This gap highlights the urgent need for both regulatory and internal solutions.

Current and Upcoming Emotion AI Regulations

The EU AI Act, which entered into force on August 1, 2024, introduces strict rules. Its ban on emotion recognition in workplaces and schools has applied since February 2, 2025, and it requires detailed risk assessments for high-risk applications in areas like law enforcement or migration. From August 2, 2026, the act will also require that users be informed when interacting with AI systems and that AI-generated content, such as deepfakes, is clearly labeled.

In the United States, regulation is more fragmented. For example, Colorado passed a comprehensive law in May 2024 targeting AI discrimination. It requires developers and deployers of high-risk systems to implement risk management programs and report discriminatory outcomes. Meanwhile, Illinois's Biometric Information Privacy Act (BIPA) demands written consent before processing biometric identifiers like facial geometry or voiceprints. On a federal level, the Federal Trade Commission (FTC) has intensified its focus on deceptive AI practices, including chatbots designed to manipulate users' beliefs and emotions.

Back in the EU, the European Commission introduced an AI Act whistleblower tool in November 2025, enabling individuals to report violations of the act.

How Brands Can Govern Emotion AI Internally

While external regulations set the baseline, companies should aim to exceed these standards through well-structured internal policies. A strong governance framework should prioritize AI security, data protection, and transparency from the start.

To ensure accountability, assign specific roles within your organization. For instance, a Senior Responsible Owner can oversee risk management, a Data Steward can ensure data quality, and an AI Asset Owner can maintain responsibility for AI systems. Many companies are also establishing cross-functional AI Ethics Committees that bring together experts from legal, IT, HR, and ethics to manage projects and resolve ethical challenges.

Before rolling out emotion AI systems, conduct Ethical Impact Assessments to identify risks such as emotional manipulation, bias, or privacy violations. Such assessments are still far from universal: only 58% of organizations have evaluated AI risks, even though 68% of Americans are worried about unethical AI usage.

Internal policies should require informed consent for collecting emotional data, with clear communication about its purpose and associated risks. Use bias mitigation protocols by employing diverse datasets and fairness metrics to assess outputs. For critical decisions, implement human-in-the-loop protocols to ensure human oversight of AI systems.
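A human-in-the-loop protocol for critical decisions can be as simple as a routing rule. The sketch below assumes a hypothetical confidence threshold and a hypothetical `high_impact` flag set by the calling application; both are illustrative choices rather than established standards.

```python
from dataclasses import dataclass

# Hypothetical threshold; choose it from your own validation data.
CONFIDENCE_THRESHOLD = 0.85


@dataclass
class Inference:
    label: str
    confidence: float
    high_impact: bool  # e.g. the result would restrict access or change pricing


def route(inference: Inference) -> str:
    """Send high-impact or low-confidence results to a human reviewer."""
    if inference.high_impact or inference.confidence < CONFIDENCE_THRESHOLD:
        return "human_review"
    return "automated"
```

Routing every high-impact result to a person, regardless of model confidence, reflects the idea that a confident model can still be wrong in ways that matter to the user.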

Building consumer trust is crucial - strong governance can increase trust by 30%. To achieve this, publish transparency reports that include Data Protection Impact Assessments (DPIAs) and Equality Impact Assessments (EIAs). Additionally, provide mandatory training on AI ethics and data privacy.

Integrate governance into everyday operations by embedding ethical checklists and fairness audits into your machine learning processes. Maintain detailed audit logs to track access to emotional data and content recommendations, such as AI sync for live shopping, and require third-party tools to provide comprehensive documentation on data sources, training set diversity, and any known biases in their models.
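For the audit logs mentioned above, a minimal sketch could record who accessed whose emotional data, when, and for what purpose. The field names are assumptions, and a production log would need durable, tamper-evident storage rather than an in-memory list.

```python
import json
from datetime import datetime, timezone
from typing import List


class AuditLog:
    """Append-only, in-memory log of access to emotional data."""

    def __init__(self) -> None:
        self._entries: List[str] = []

    def record(self, actor: str, action: str, subject: str, purpose: str) -> None:
        entry = {
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "subject": subject,
            "purpose": purpose,
        }
        # Serialize immediately so later code cannot mutate a logged entry.
        self._entries.append(json.dumps(entry, sort_keys=True))

    def entries_for(self, subject: str) -> List[dict]:
        """All logged events that touched one data subject."""
        return [e for e in map(json.loads, self._entries) if e["subject"] == subject]
```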

"AI governance will evolve as quickly as AI itself. The future will involve self-regulation, real-time auditing, and AI that explains its own decision-making processes".

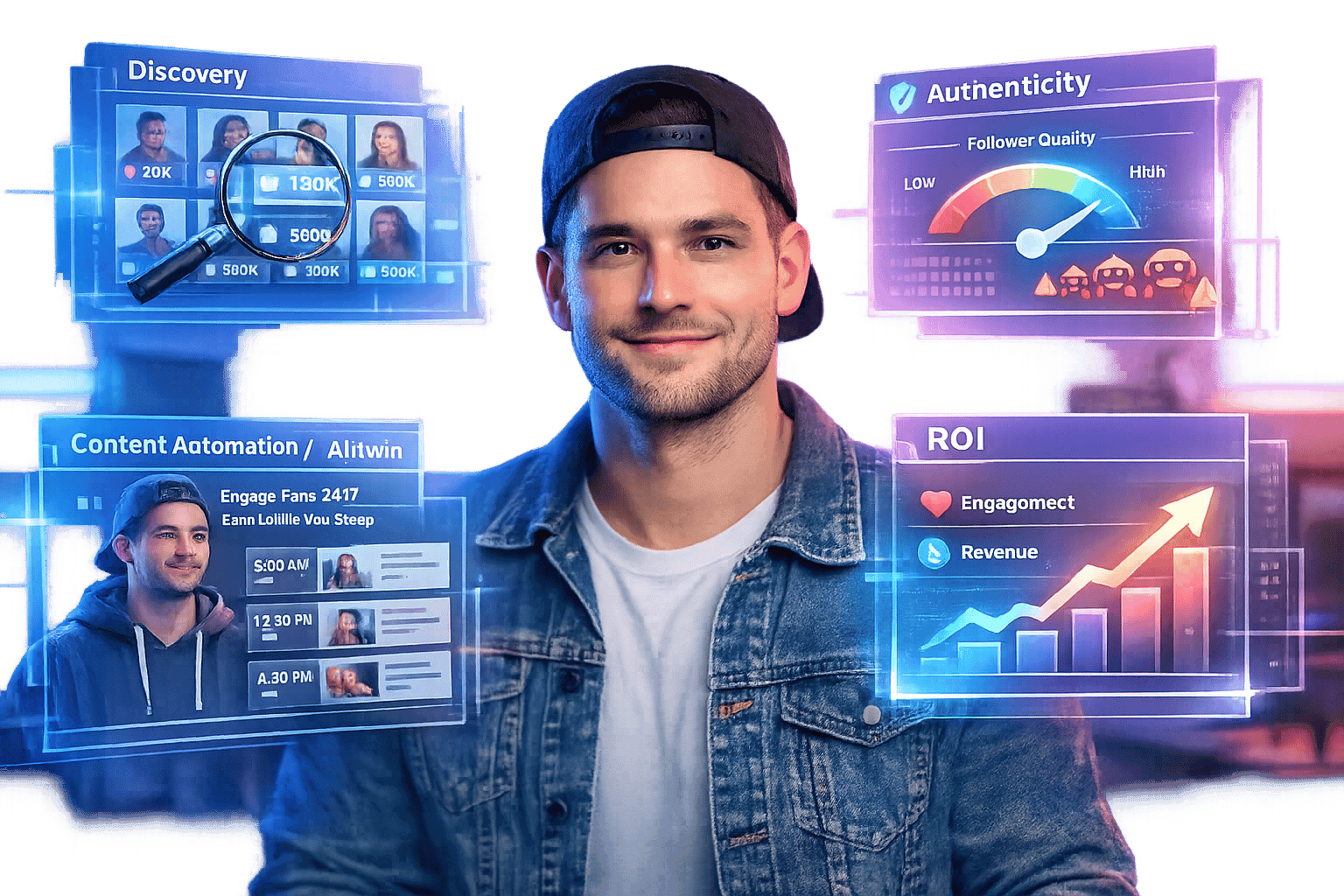

TwinTone: Ethical AI for Scalable Content Creation

TwinTone takes the principles of ethical AI and applies them to content creation, offering a scalable solution that ensures transparency and respects creator authenticity. By turning real creators into AI Twins, TwinTone enables on-demand UGC video production, AI-powered livestream hosting, and automated social commerce - all while adhering to ethical standards.

AI Twins That Respect Creator Identity

At the heart of TwinTone's approach is a commitment to informed consent and identity preservation. Creators must provide explicit permission before an AI Twin is developed, giving them control over how their likeness and personality are used. This addresses a major concern, as more than 50% of leading brands worry about generative AI's impact on intellectual property, copyright, and privacy.

TwinTone also amplifies creators' output without compromising their individuality. By integrating human oversight, the platform ensures that creators' unique voices remain intact, aligning with ethical guidelines for synthetic media. This approach enhances creativity rather than replacing it.

Transparency in Shoppable Videos and Livestreams

TwinTone prioritizes transparency by clearly labeling all AI-generated content, including shoppable videos and 24/7 livestreams on platforms like TikTok, Amazon, YouTube, and Shopify. This is especially critical as nearly 90% of advertisers are projected to use generative AI for video ads by 2025.

The platform also adheres to GDPR and CCPA standards by using anonymized data for targeting. With a real-time analytics dashboard, brands can track engagement and conversions while meeting data minimization requirements set by emerging regulations. This commitment to transparency reinforces a user-first, ethical framework for AI-driven content.

Key Takeaways

The governance and technical safeguards covered above point to a clear reward: ethical emotion AI not only fosters consumer trust but also drives business success. The strongest brands aren't just known - they're trusted, valued, and emotionally connected to their audience.

Consider this: only 15% of Americans are okay with advertisers using facial recognition in public ads, and a mere 4% of U.K. citizens approve of its use in hiring practices. This trust gap is both a challenge and an opportunity. Brands that prioritize opt-in consent, minimize data collection, and conduct bias audits can distinguish themselves in a market expected to hit $13.8 billion by 2032.

Legal cases underscore the importance of ethical practices. For example, in December 2023, the Federal Trade Commission reached a settlement with Rite Aid Corporation over discriminatory facial recognition practices. The settlement requires the company to implement a strict "algorithmic fairness program" and bans facial recognition for five years. This case illustrates that ethical AI isn't just a moral choice - it's a legal and financial safeguard against fines, investigations, and lawsuits.

Developing ethical emotion AI requires human oversight, diverse datasets, and strict data boundaries. Giving users control over their emotional data and being transparent about AI usage can transform doubt into trust. One bank demonstrated this by increasing credit card use among Millennials by 70% after building stronger emotional connections.

Brands that respect emotional data, communicate openly about their AI practices, and actively work to eliminate bias can forge lasting relationships. This commitment to ethical and transparent emotion AI is essential for sustainable growth in an increasingly AI-driven world.

FAQs

What ethical challenges does emotion AI pose in personalized content delivery?

Emotion AI, designed to analyze facial expressions, voice tones, or physiological signals, presents a range of ethical challenges when applied to personalized content. One of the biggest concerns is privacy. This technology often gathers and processes deeply personal emotional data, sometimes without explicit user consent. This could lead to constant monitoring, where even fleeting emotions are turned into data points that might be stored, shared, or misused.

Another key issue is accuracy and bias. Emotion AI often struggles to interpret emotions consistently across different cultural and individual contexts. This can result in misjudgments or even discriminatory outcomes. Moreover, the data these systems rely on can reinforce existing biases, unfairly impacting certain groups.

There’s also the risk of manipulation. Companies could use emotion AI to influence user behavior, steering individuals toward specific choices or exploiting their emotional states with highly targeted ads. To navigate these ethical pitfalls, businesses need to prioritize transparency, secure clear user consent, and establish strong safeguards to ensure this technology is used responsibly.

How can businesses ethically use emotion AI while building user trust?

To ensure ethical use of emotion AI and maintain user trust, businesses must focus on transparency, consent, and data privacy. Always seek explicit permission before analyzing emotional data, and provide a clear explanation of how this data will be used to tailor content. Clearly label AI-generated recommendations or videos to avoid misunderstandings, and limit data collection to only what is absolutely necessary to minimize privacy risks.

It’s also essential to adopt practices like conducting bias audits, enforcing robust security measures, and giving users a clear way to opt out or withdraw consent at any time. Adhering to established ethical guidelines and complying with U.S. privacy laws helps maintain accountability while safeguarding consumer trust.

By integrating these principles into its platform, TwinTone responsibly delivers AI-generated UGC videos and livestreams. With transparent labeling, creator consent, and ongoing fairness monitoring, TwinTone exemplifies how emotion AI can create personalized experiences without sacrificing privacy or trust.

What laws regulate privacy and bias in emotion AI technologies?

In the United States, privacy and bias in emotion AI are addressed through a combination of federal and state laws. At the federal level, the Federal Trade Commission (FTC) plays a key role by treating deceptive or discriminatory AI practices as violations of consumer protection laws. The FTC also enforces regulations against AI-driven activities like surveillance, fraud, and bias. Additionally, companies that handle the personal data of EU residents must adhere to the General Data Protection Regulation (GDPR), which places strict requirements on consent, data usage, and biometric information.

On the state level, more targeted laws are being introduced to tackle specific issues. For instance, Arkansas bans the commercial use of AI-generated replicas of a person’s voice, photograph, or likeness without their consent. In California, AI providers are required to disclose their systems' capabilities, include detection tools, and limit contracts involving unauthorized digital replicas. These measures aim to protect individual privacy and address bias in emotion AI, promoting greater transparency and ethical standards in its application.