Building AI Identity Risk Models for Brands

How to build a futureproof relationship with AI

AI identity risk models are transforming how brands detect and prevent fraud, safeguard their reputation, and ensure secure interactions. With professional criminals responsible for 71% of fraud cases and generative AI enabling $15 fake IDs, the stakes have never been higher. These systems monitor onboarding, logins, and high-risk transactions in real-time, flagging issues like deepfakes, synthetic identities, and biometric spoofing.

Key Takeaways:

Why It Matters: Generative AI has made fraud easier and more accessible, amplifying risks for brands in areas like social commerce and user-generated content.

How It Works: AI models use tools like biometric scans, liveness detection, and risk scoring frameworks to verify identities and prevent fraud.

Steps to Build Models:

Identify where verification is critical (e.g., onboarding, creator contracts).

Build a risk scoring framework based on likelihood and impact.

Use secure AI tools with monitoring and governance protocols.

Test and refine models with adversarial scenarios and feedback loops.

Benefits: Protects financial assets, maintains reputation, and ensures compliance with regulations like GDPR and the EU AI Act.

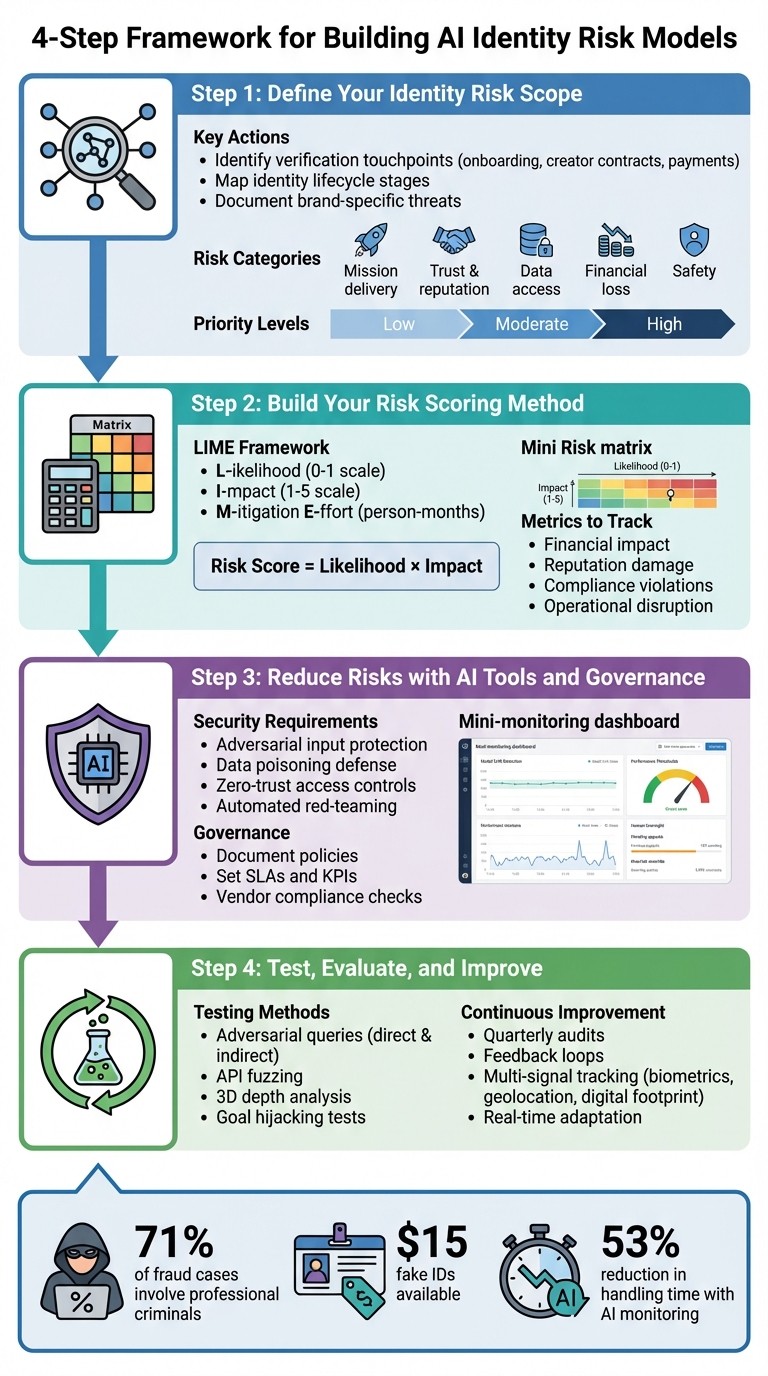

4-Step Framework for Building AI Identity Risk Models

The Smartest Way to Use AI for Fraud Prevention

Step 1: Define Your Identity Risk Scope

Before diving into building a risk model, it’s crucial to map out where identity threats are most likely to occur within your ecosystem. This step helps pinpoint the critical areas where verification plays a key role. Think about every touchpoint where identity matters - whether it’s a creator signing up or a customer using AI-powered tools. This mapping process sets the stage for creating a solid risk scoring framework in the next step.

Identify Where Identity Verification Matters

Start by identifying the key stages in your identity lifecycle where verification is essential. For instance, during onboarding and registration, you need to confirm that a real person is entering your system. This step acts as your first line of defense.

When it comes to creators and influencers, extra care is needed. Verifying that they are who they claim to be is vital before signing contracts or processing payments. With high-quality fake IDs costing as little as $15, relying solely on traditional document checks won’t suffice. Incorporate biometric liveness detection to confirm physical presence and guard against injection attacks.

For added security, require biometric re-verification during sensitive actions like password resets, payment updates, and account recovery. This helps counter social engineering attempts.

If your brand uses customer-facing AI interactions, such as AI-powered livestreams or chatbots, it’s important to ensure transparency. Make it clear that the AI agents are identified as such, and verify that users engaging with them are real people, not bots. This level of authenticity protects your brand's reputation. Similarly, in social commerce workflows, verifying the originators of user-generated content helps distinguish genuine fans from bots while ensuring copyright compliance.

"Generative AI has significantly lowered the technical barrier for committing fraud, making it alarmingly accessible to novices while also empowering organized criminal networks to exploit human and digital vulnerabilities with unprecedented speed and sophistication." – Mitek Systems

List Your Brand-Specific Risk Scenarios

After mapping out your verification points, document the specific threats your brand may face. Different industries and business models attract unique attack vectors. For example, if you run user-generated content (UGC) campaigns, you might encounter challenges like fan impersonations, unauthorized content repurposing, or AI-generated deepfakes that could harm your reputation.

Evaluate potential risks across five key areas:

Mission delivery: Can your operations continue effectively?

Trust and reputation: Could customer confidence take a hit?

Unauthorized information access: Is sensitive data at risk of exposure?

Financial loss: What’s the potential monetary impact?

Safety concerns: Could someone be physically harmed?

Assign each risk scenario a priority level - Low (minimal impact), Moderate (serious impact), or High (severe or catastrophic impact) - to determine which issues need immediate attention.

If your brand uses AI Twins or automated content generation, consider risks like unauthorized AI impersonation, where synthetic content mimics real individuals, or template attacks that replicate official ID designs to bypass verification. Additionally, physical-to-digital connections, such as retail stores or pop-up events, require consistent identity standards. Fraudsters are increasingly targeting these physical touchpoints with social engineering tactics and forged documents.

Compile these scenarios into a straightforward risk register for your team to reference. Focus on vulnerabilities that could affect brand trust, revenue, or operations. This documentation will help your team prioritize risks and streamline the process of building a scoring framework.

Step 2: Build Your Risk Scoring Method

Once you've established your risk register, the next step is turning the identified threats into actionable priorities. By using your defined risk scenarios, you can translate qualitative concerns into measurable, ranked risks. A risk scoring framework helps you figure out which threats need immediate attention and which can be addressed later. This process revolves around evaluating each risk based on two key factors: likelihood (how likely it is to happen) and impact (the damage it could cause if it does).

Create a Risk Scoring Framework

To build an effective scoring matrix, start by defining clear categories. A practical tool for this is the LIME framework, which evaluates risks across three dimensions: Likelihood (probability on a 0–1 scale), Impact (severity on a 1–5 scale), and Mitigation Effort (estimated person-months required to address the issue).

For instance, if you're running user-generated content (UGC) campaigns, a deepfake impersonating a brand ambassador might score high on both likelihood (0.7) and impact (5). For brands using platforms like TwinTone, applying this framework can help protect their reputation and maintain trust. On the other hand, an account takeover attempt at a physical retail location might have a lower likelihood (0.3) but still pose a significant financial risk (impact score of 4). By multiplying likelihood and impact scores, you can rank threats and prioritize your responses.

Your framework should also include intrinsic risks (like model bias or data leakage) and extrinsic risks (such as financial loss or reputational harm). Some systems, particularly those affecting health, safety, employment, education, or biometrics, are automatically labeled as high-risk under standards like the EU AI Act. These classifications can guide you in setting thresholds - if a risk surpasses a certain level, it should trigger automated alerts or even prompt you to take a model offline.

"High-risk AI models require continuous oversight, as unmanaged risks, such as bias, privacy breaches, or system failures, can lead to significant legal, financial, and reputational damage." – Fergal Glynn, Mindgard

This scoring approach lays the groundwork for precise risk measurement and ongoing evaluation.

Use Metrics to Measure Risk Severity

To gauge the severity of risks, focus on four metrics: financial impact, reputation, compliance, and operations. Financial losses can be calculated directly, while shifts in reputation may be tracked using sentiment analysis. Compliance risks, like potential fines under GDPR or the EU AI Act, should also be monitored, as these penalties can reach millions of dollars - especially in cases involving unauthorized biometric data use. Operational disruptions, such as system downtime or recovery efforts, should be assessed as well.

For example, account takeover incidents can lead to stolen funds and costly recovery efforts. Customer trust can be tracked through brand health scores, which is increasingly important as 71% of fraud incidents now involve professional criminals or organized crime rings. Compliance risks require special attention, as regulatory fines can be steep. Operational metrics should capture downtime, recovery time, and the effort needed to address issues like injection attacks that bypass authentication measures. One major brand, for example, cut handling time by 53% by using AI to monitor social media, showing how effective metrics can drive improvement.

To ensure consistency across your organization, create internal rubrics to assess impacts on users, data, and operations. Present risk estimates as ranges to reflect potential variability. Finally, use these metrics to implement "good friction." For example, allow low-risk users to proceed smoothly while requiring additional biometric verification for high-risk actions, such as password resets from new devices. This approach balances security with user convenience, ensuring that high-risk actions are closely monitored without slowing down everyday operations.

Step 3: Reduce Risks with AI Tools and Governance

Once you've assessed and scored potential risks, the next step is to implement safeguards. This involves selecting secure AI tools, keeping a close eye on their performance, and putting governance protocols in place to address any identity-related issues quickly and effectively.

Choose AI Models with Built-In Security

When selecting AI tools, prioritize those designed to withstand adversarial inputs, data poisoning, and other vulnerabilities like jailbreaking or prompt injections that could disrupt identity verification processes. Statistics show that a significant number of organizations have experienced attacks on their AI systems.

Look for tools that protect against data leakage and model inversion, while also offering transparency and auditability to meet regulations like GDPR’s right-to-explanation. Vendors should provide features like automated red-teaming and constant artifact scanning to identify weaknesses. Additionally, ensure that these tools support zero-trust access controls, employing role-based permissions for sensitive areas such as model code, training data, and production environments. This step builds on your earlier risk scoring efforts and strengthens your overall risk management strategy. For AI systems handling biometrics, education, employment, or critical infrastructure, note that these are classified as high-risk under the EU AI Act and demand the highest level of security measures.

Once you've deployed secure tools, the focus shifts to continuous monitoring to catch any performance issues before they escalate.

Set Up Monitoring and Drift Detection

Ongoing monitoring is essential to ensure your AI systems remain reliable. Pay special attention to model drift, which occurs when an AI's performance starts to deviate from its original baseline due to changes in real-world data patterns. This can significantly impact accuracy and effectiveness.

Define clear thresholds for model drift that trigger immediate action. Document these thresholds and establish formal policies outlining how often monitoring should occur. Keep a close watch on both model drift and operational metrics to catch any anomalies early.

"AI systems are dynamic and may perform in unexpected ways once deployed or after deployment." – NIST AI RMF Playbook

Introduce human oversight mechanisms, such as "appeal and override" processes, to allow real-time review of flagged incidents and problematic outcomes. Maintain an inventory for each model, noting when it was last updated, its purpose, and the number of users it affects. Feedback loops are equally critical - affected individuals can often spot issues that automated systems might overlook. Regularly review business impacts, fraud rates, and unintended consequences on privacy to ensure your AI systems are functioning as intended. These monitoring efforts should seamlessly integrate with your governance protocols.

Document Governance Policies and Compliance

A solid governance framework is key to managing AI risks effectively. Start by documenting policies that define critical terms, scope, and usage guidelines to ensure compliance and operational safety. These policies should align with your overall data governance practices, especially when dealing with sensitive identity data.

Adopt a structured Digital Identity Risk Management (DIRM) process. This involves defining your online service, conducting impact assessments, determining assurance levels (such as Identity Assurance Level or Authenticator Assurance Level), and continuously evaluating system performance. Use standardized formats like "Model Cards" or "FactSheets" to document the methodology and training data behind your algorithms.

When working with third-party vendors, ask detailed questions about their governance practices.

"I would want to know what kind of governance policies they have. I would want to know: how do you monitor? How do you check the drift?" – Naveen Balakrishnan, TD Securities

Set clear Service Level Agreements (SLAs) and Key Performance Indicators (KPIs) for any third-party models you use. Keep in mind that 53% of organizations cite weak identity management as a major security concern, and nearly half of cloud-related attacks stem from identity vulnerabilities.

Finally, establish protocols for safely decommissioning AI systems. This ensures compliance with data retention laws and prevents security gaps during transitions.

"You can never outsource your accountability. So if you decide to place reliance on these AI models... and something goes terribly wrong, the accountability is still going to fall on the organization." – David Cass, CISO at GSR

Step 4: Test, Evaluate, and Improve Risk Models

Thorough testing and ongoing refinement are crucial to ensure your AI identity risk models can handle real-world threats and adapt to new attack methods as they emerge.

Run Adversarial Tests on Identity Models

Once you've established risk scores and safeguards, it's time to test your models under adversarial conditions. Start by defining prohibited actions, like identity spoofing or prompt injection, and create test datasets with both direct and indirect adversarial queries. This could include subtle language variations designed to challenge your model's decision-making. Begin small, using "seed" datasets with a few dozen examples per category, and then expand them with data synthesis tools to increase diversity - both in wording and in the range of identity-based scenarios covered.

Focus on AI-specific vulnerabilities such as "Goal Hijacking" and "Chain-of-Thought Attacks." Use techniques like API fuzzing to uncover data leaks, adversarial input generation to test model boundaries, and 3D depth analysis to distinguish live faces from 2D spoofing attempts.

"Adversarial testing involves proactively trying to 'break' an application by providing it with data most likely to elicit problematic output." – Google for Developers

Integrate adversarial testing into your CI/CD pipeline during the staging phase to ensure no model goes live without passing stringent security checks. Leverage safety classifiers to flag violations, but don't rely solely on automation - human raters are better at spotting subtle adversarial failures that machines might miss. This type of testing helps uncover rare edge cases and out-of-distribution examples that standard evaluations often overlook.

Check Risk Treatments Against Brand Goals

Your risk reduction strategies should reflect your brand’s specific goals and tolerance for risk. Use an orchestration layer to dynamically adjust friction based on threat levels, validate treatments against custom rules tailored to your brand’s needs, and track metrics like conversion rates, average order value (AOV), and customer acquisition cost (CAC) to measure their effectiveness.

Apply "good friction" thoughtfully. For instance, biometric security measures like face scans with passive liveness detection are less intrusive than manual forms and can enhance both security and user experience. Schedule quarterly audits to identify potential risks and ensure compliance with regulations like GDPR and CCPA.

"Treat privacy not as a box to tick but as a core brand value." – Lior Torenberg, Northbeam

Use tools that provide visual evidence, such as screenshots or placement trails, to verify that your risk treatments are enforcing brand safety and compliance effectively. With professional criminals now responsible for 71% of fraud incidents, it’s essential to recalibrate your models regularly to keep up with evolving threats.

Use Feedback Loops for Continuous Improvement

The monitoring data from Step 3 plays a key role in refining your models. Follow a structured Digital Identity Risk Management (DIRM) process, which includes continuous evaluation, documented redress mechanisms, and iterative improvements based on new threat patterns. Modern identity systems should have an intelligence layer that learns and adapts from signals across various channels.

Combine multiple signals - such as biometric face matching, document liveness, geolocation, and digital footprint analysis - to adjust security measures in real time. Keep an eye on specific attack methods like template attacks (replicating official ID layouts), deepfakes (AI-generated images with subtle flaws), and injection attacks (using synthetic media via virtual cameras).

Track key performance indicators like face velocity (detecting repeated use of the same face across applications), fraud rates (successful versus blocked fraud attempts), and usability barriers (legitimate users failing due to system friction). Consult with a representative sample of your users to shape design decisions and prevent unintended harms to privacy or access.

"Generative AI and automation have changed the game for fraud and the way we defend against it. As tactics grow more sophisticated and collaborative, businesses must respond with strategies that are equally dynamic." – Kim Martin, VP Global Growth Marketing, Mitek

Finally, establish clear and transparent processes to address cases where the system fails or causes unintended harm. Staying ahead of increasingly complex fraud tactics requires constant adaptation and improvement.

Conclusion

Developing AI identity risk models is an ongoing effort to safeguard both your brand and your customers. Start by identifying the key areas in your workflows where identity verification is critical. From there, build a risk scoring framework tailored to your business needs, evaluating potential threats in context. Integrate AI tools equipped with security features, establish real-time monitoring to detect anomalies, and rigorously test your defenses using adversarial scenarios that mimic actual fraud strategies. This comprehensive approach not only fortifies your defenses but also enables swift, real-time responses to evolving threats.

The stakes are higher than ever, with fraud tactics growing more sophisticated and costly. Traditional defenses are no match for advanced threats like GenAI fraud kits, which utilize template-based attacks, deepfakes, and injection techniques. To counter these, your strategy must be just as advanced - incorporating document verification, biometric face matching, and liveness detection into a multi-layered defense system.

"Risk Management in AI is different both conceptually and in implementation... there is an urgent need to create a culture of 'risk tolerance' instead of 'risk avoidance' or 'risk denial'." – Somil Gupta, AI Strategy and Monetization Advisor

This perspective highlights the importance of fostering a proactive, risk-tolerant mindset as you refine your models.

As you scale your identity risk solutions, think about how AI-driven platforms can help you strike the right balance between security, speed, and user experience. Tools like TwinTone can strengthen the layered defenses mentioned earlier. TwinTone supports secure, on-demand content creation, reinforcing trust while maintaining operational efficiency. Clear governance policies and a well-defined "social contract" further enhance your brand's safety and fuel sustainable growth.

Finally, ensure your models continuously evolve by incorporating feedback loops to track emerging threats and adjust your defenses dynamically. With a robust framework in place, your AI identity risk models can become a powerful asset - protecting your revenue, maintaining customer trust, and ensuring compliance at every interaction.

FAQs

How do AI-powered identity risk models help brands prevent fraud?

AI-powered identity risk models offer brands a smart way to tackle fraud before it even occurs. These models sift through massive amounts of data, analyzing transaction patterns and behavioral cues to uncover subtle warning signs. For instance, they can flag mismatched device usage, odd combinations of addresses and phone numbers, or inconsistencies in public records. This makes it easier to identify synthetic identities - those fake personas fraudsters create to carry out scams - early in the game.

As fraud tactics become more sophisticated, these AI models keep learning and evolving, staying one step ahead of emerging threats. When a high-risk profile is flagged, the system can automatically take action. This could mean initiating biometric checks, verifying documents, or even temporarily restricting accounts to prevent fraud from escalating. By pairing advanced risk scoring with real-time responses, brands can minimize financial losses, safeguard their revenue, and strengthen customer trust.

What makes a strong identity risk scoring model?

A solid identity risk scoring model depends on four interconnected components working together seamlessly.

First, thorough data collection pulls in reliable inputs like document verification, behavioral patterns (such as device or IP irregularities), business information, and external intelligence like watchlists or legal records. This step ensures the model has a well-rounded and accurate understanding of each identity.

Next, AI-powered analysis leverages machine learning to spot patterns, flag anomalies, and stay ahead of emerging threats by analyzing both historical and real-time data. Then, the scoring engine takes these insights and converts them into a flexible, easy-to-understand risk score tailored to specific use cases, such as lending decisions or customer onboarding. Finally, smooth integration and governance allow the model to fit effortlessly into existing workflows, maintain transparency, and ensure compliance through monitoring and auditing.

When these components come together, businesses can create a dependable system to tackle shifting identity risks and safeguard their operations effectively.

How can brands ensure their AI identity risk models comply with regulations like GDPR?

To align with GDPR requirements, brands should approach the creation of AI identity risk models with a strong focus on data protection. The first step is conducting a Data Protection Impact Assessment (DPIA). This helps identify the personal data being used, how it will be processed, and potential privacy risks to individuals. From the outset, integrate privacy-by-design principles like data minimization, pseudonymization, and stringent security protocols.

It's also essential to categorize sensitive data, anonymize or de-identify it wherever feasible, and establish controls to restrict access and enforce specific usage purposes. Regular audits are a must to identify and address biases or inequitable outcomes in the model. Transparency is equally important - offer clear explanations of how the model operates and provide users with straightforward options to opt out or correct their data.

To ensure continued compliance, maintain thorough documentation of all compliance measures, keep risk assessments up-to-date, and monitor changes in privacy laws. This proactive strategy not only supports GDPR alignment but also fosters trust with your audience.