AI Twins and Licensing Risks for Brands


AI twins - digital replicas of real people - are transforming branding by enabling 24/7 content creation, livestream hosting, and product demos. While the technology offers efficiency, it introduces critical legal and ethical challenges. Brands must navigate risks such as:

Publicity rights violations: Using someone’s likeness without proper consent can lead to lawsuits, especially with new laws in states like California, New York, and Tennessee.

Copyright disputes: Ownership of AI-generated content and the datasets used to train these models is often unclear.

Misuse of likeness: Without strict controls, AI twins can appear in unapproved contexts, causing reputational damage.

FTC compliance: Undisclosed AI-generated endorsements may result in regulatory penalties.

To mitigate these risks, brands need robust licensing agreements, clear consent protocols, and adherence to state-specific laws. Transparency in AI usage is no longer optional - it’s essential for maintaining trust and avoiding legal pitfalls.

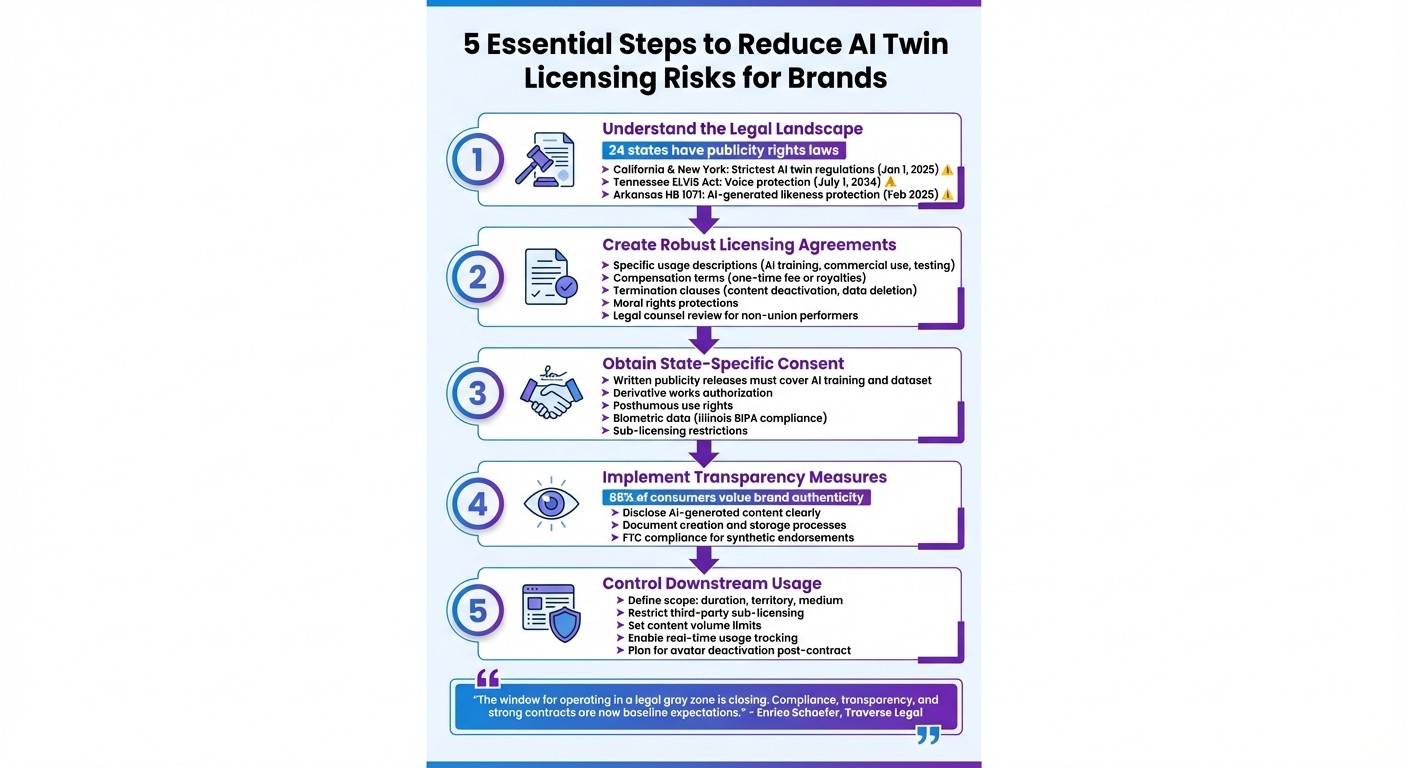

5 Essential Steps to Reduce AI Twin Licensing Risks for Brands


Main Licensing Risks When Using AI Twins

Using AI twins comes with a host of legal challenges that can lead to lawsuits, regulatory fines, and damage to a brand's reputation. For companies already navigating the delicate balance between operational efficiency and legal compliance, these risks are not to be taken lightly. Below, we break down some of the key legal concerns, from issues around personal identity to intellectual property conflicts.

Right of Publicity Violations

Right of publicity laws safeguard individuals from having their likeness, voice, or identity used commercially without consent. The tricky part? There's no federal standard - only a patchwork of state laws across about 24 states. California and New York have particularly strict rules for AI twin contracts. For performances fixed on or after January 1, 2025, New York's SB 7676B and California's AB 2602 make a digital replica provision unenforceable when it lets the replica replace work the individual would otherwise have done in person, lacks a "reasonably specific description" of the intended use, and was agreed to without the individual being represented by legal counsel or a union. Other states, like Tennessee with its ELVIS Act (effective July 1, 2024) and Arkansas with HB 1071, have extended these protections to include voice - whether real recordings or AI-generated simulations.

Courts don’t take these violations lightly. A notable example is White v. Samsung Electronics America (1992), in which the Ninth Circuit held that an ad featuring a robot in a blonde wig and gown could infringe Vanna White’s right of publicity under California law. Even though the robot wasn’t an exact photographic likeness, the court found it could appropriate her distinct identity. The lesson for brands: if an AI twin evokes someone's identity, the legal risks can be substantial.

Copyright Ownership Disputes

Copyright issues around AI twins are thorny, raising questions about who owns the AI-generated content and the training data used to create it. This creates a clash between copyright law, which protects the creator of a digital work, and the right of publicity, which protects the individual being represented.

The U.S. Copyright Office has been working through these challenges. By December 2023, it had received over 10,000 public comments on AI, highlighting just how contentious these issues are. Its multi-part report on AI began with Part 1 in July 2024, focusing on digital replicas; Part 2, released January 29, 2025, addressed the copyrightability of AI-generated outputs. One major debate is whether AI-generated content meets the threshold of "human creativity" needed for copyright protection.

Things get even murkier when AI twins are built using large datasets of copyrighted material. If a brand hires a third party to create an AI twin without clear "work-for-hire" or assignment agreements, the brand might not own the intellectual property rights to the model. Jennifer E. Rothman, a professor at the University of Pennsylvania Law School, warns:

"Copyright law can protect personality rights and privacy, but if not properly circumscribed it can also be a mechanism for owning a person's attributes and controlling and silencing that person."

Additionally, brands could find themselves in hot water if their AI twin is too similar to an existing copyrighted character or digital model, potentially violating U.S. copyright law. Without proper clearance, these disputes can lead to costly lawsuits and further complicate intellectual property management.

Misuse and Sub-licensing of Likeness

Even with a valid licensing agreement, brands can face significant risks if they don’t tightly control how an AI twin is used downstream. Sub-licensing clauses, for example, can allow a creator’s likeness or voice to be used in ways they never approved, causing both legal troubles and reputational harm. Imagine an AI twin endorsing a product, cause, or viewpoint that clashes with the creator’s personal values or professional image - it’s a recipe for public backlash and a potential PR disaster.

The Federal Trade Commission (FTC) has also flagged undisclosed use of synthetic voices or likenesses as potentially deceptive marketing. If an AI twin implies a real endorsement that doesn’t exist, brands could face regulatory penalties for failing to disclose the AI-generated nature of the content.

Enrico Schaefer, Founding Partner at Traverse Legal, puts it bluntly:

"Control over downstream use is one of the most overlooked clauses in AI likeness deals."

Without clear protocols for deactivating avatars or removing AI-generated content after a deal ends, a creator’s digital likeness could continue to appear in campaigns, prolonging the legal risks. Consider the example of CGI influencer Lil Miquela, who has amassed over 1.5 million followers and taken over major brand accounts like Prada. If a real creator’s AI twin achieved similar reach without proper safeguards, the risks would multiply dramatically.

Examples of AI Twin Licensing Challenges

Consent and Compensation Disputes

In May 2024, professional voice actors Paul Lehrman and Linnea Sage filed a class-action lawsuit in the U.S. District Court for the Southern District of New York against Lovo Inc., an AI startup. Lehrman and Sage claimed they were hired through Fiverr for what they believed was academic research. However, their recordings were later used by Lovo to create AI voice clones, marketed to subscribers under the names "Kyle Snow" and "Sally Coleman." In July 2025, Judge J. Paul Oetken allowed the case to proceed on claims that Lovo violated New York publicity rights and engaged in deceptive consumer practices.

This case highlights a growing concern: companies misusing voice or likeness data obtained under misleading circumstances. What starts as a one-time gig can be transformed into a commercial AI twin capable of generating endless content - without further consent or compensation for the original creator.

The issue extends beyond voice actors. In December 2023, SAG-AFTRA approved an agreement requiring explicit consent and fair compensation whenever a digital replica substitutes for a human performance.

Backlash Over Job Displacement and Authenticity

In 2023, Levi Strauss & Co. announced its intention to use AI-generated models to enhance diversity in its digital shopping platform. The announcement was met with intense criticism online, as many viewed it as replacing real, diverse models with synthetic ones. Facing the backlash, the company abandoned the initiative to safeguard its reputation.

The fashion industry remains particularly sensitive to these concerns. In March 2025, H&M Group launched a project to create digital twins for 30 models. Spearheaded by Chief Creative Officer Jörgen Andersson and business developer Louise Lundquist, this approach differed from Levi Strauss & Co.'s. H&M allowed the models to retain ownership of their digital replicas, giving them the freedom to license their AI twins to H&M or even to competing brands. While innovative, this move sparked debates about authenticity and the potential for job displacement.

At the same time, Shanghai-based marketing agency PLTFRM reported a 30% boost in livestream sales when using AI-generated virtual sales avatars instead of human representatives. While the efficiency gains are clear, this practice raises questions about the broader impact on professionals - such as photographers, stylists, and makeup artists - whose work traditionally supports such productions. Beyond the legal implications, the real challenge lies in whether brands can maintain consumer trust when prioritizing efficiency over genuine human interaction.

These examples highlight the pressing need for clear licensing agreements and ethical standards, which will be explored further in the next section.

How to Reduce Licensing and Ethical Risks

When working with AI twins, it's crucial to address potential licensing and ethical risks. Here’s how you can navigate these challenges effectively.

Create Clear Licensing Agreements

A well-defined licensing agreement is a must for deploying AI twins. This contract should clearly outline how the digital replica will be used - whether for AI training, internal testing, or commercial purposes - and include termination details, like content deactivation and data deletion. State-specific laws, such as those in New York and California, require precise usage descriptions to ensure agreements remain valid.

"The specificity requirement in California and New York's laws deters the use of broad, catch-all provisions and likely requires more project-specific language in the license." - Jesse B. Levin, Partner, Glaser Weil

Compensation terms should be explicit, whether it’s a one-time fee or ongoing royalties. A moral rights clause can protect creators from uses that go against their values. Additionally, contracts with non-union performers should involve legal review to avoid compliance issues.

Use State-Specific Publicity Releases

Publicity laws in the U.S. differ by state, with 24 states currently having laws that regulate the use of likenesses. Some of these laws now specifically address AI-generated likenesses. For instance, Tennessee’s ELVIS Act (effective July 1, 2024) was the first to protect voices from unauthorized AI simulations, while Arkansas’s HB 1071 (effective February 2025) expanded the definition of "likeness" to include AI-generated images and voices.

To stay compliant, secure written publicity releases that cover AI training, dataset inclusion, derivative works, and posthumous use. In states like Illinois, the Biometric Information Privacy Act (BIPA) mandates explicit consent before collecting or using biometric identifiers, such as voiceprints or facial scans.

"Consent isn't a checkbox. It's a shield. Companies that rely on AI likenesses must treat publicity rights as core infrastructure." - Enrico Schaefer, Founding Partner, Traverse Legal

Post-mortem rights also need attention. For example, California’s AB 1836 (effective January 1, 2025) imposes civil liability for unauthorized digital replicas of deceased personalities. These protections can extend for decades after someone’s death, making clearance for historical figures just as important. Beyond legal obligations, respecting these rights helps maintain public trust, which is explored further in the next section.

Follow Ethical Deployment Practices

Legal compliance is just the baseline - ethical practices are essential for building consumer trust. Transparency is key: disclose synthetic elements clearly and document the creation and storage of digital assets to prevent unauthorized use. In February 2024, the FTC finalized a rule allowing civil penalties for entities that impersonate businesses or government organizations, a rule that reaches AI-enabled impersonation as well. Undisclosed synthetic voices in ads could also be flagged as deceptive under Section 5 of the FTC Act.

Contracts should explicitly address sub-licensing, ensuring a creator’s likeness isn’t used in third-party products without additional approval. Define the scope of use, including duration, territory, and medium (e.g., internal tools vs. public ads), to avoid misuse or "scope creep."
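One way to make those contract terms enforceable in day-to-day work is to record them as data and check each planned use against them before anything is published. The sketch below is illustrative only: `LicenseScope`, `ProposedUse`, and `violations` are hypothetical names, and the field values are made up for the example rather than taken from any real agreement.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class LicenseScope:
    """Hypothetical record of what a likeness license allows."""
    creator: str
    start: date
    end: date
    territories: frozenset      # e.g. {"US"}
    media: frozenset            # e.g. {"internal_tool", "livestream"}
    sublicensing_allowed: bool = False


@dataclass(frozen=True)
class ProposedUse:
    """A single planned use of the AI twin."""
    on: date
    territory: str
    medium: str
    third_party: bool = False   # True if another company would publish it


def violations(scope: LicenseScope, use: ProposedUse) -> list:
    """Return every reason the proposed use falls outside the license."""
    problems = []
    if not (scope.start <= use.on <= scope.end):
        problems.append("outside licensed duration")
    if use.territory not in scope.territories:
        problems.append("territory not licensed")
    if use.medium not in scope.media:
        problems.append("medium not licensed")
    if use.third_party and not scope.sublicensing_allowed:
        problems.append("sub-licensing requires additional approval")
    return problems


scope = LicenseScope(
    creator="creator-001",
    start=date(2025, 1, 1),
    end=date(2025, 12, 31),
    territories=frozenset({"US"}),
    media=frozenset({"livestream"}),
)
late_partner_use = ProposedUse(
    on=date(2026, 2, 1), territory="US", medium="livestream", third_party=True
)
print(violations(scope, late_partner_use))
# ['outside licensed duration', 'sub-licensing requires additional approval']
```

Returning every violation, rather than stopping at the first, gives legal and marketing teams the full list of terms that would need renegotiating.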

In December 2023, SAG-AFTRA reached an agreement with Hollywood studios (effective through June 30, 2026) requiring informed consent for AI-generated likenesses. The deal also mandates biannual meetings between the union and studios to discuss AI deployment plans. This proactive approach not only sets industry standards but also fosters trust between creators and organizations.

"The window for operating in a legal gray zone is closing. Compliance, transparency, and strong contracts aren't just best practices anymore." - Traverse Legal

How TwinTone Addresses Licensing Risks

TwinTone weaves licensing protections directly into its platform, offering brands a way to navigate AI content legal risks while respecting the rights of creators.

Explicit Consent and Staying True to Creators

TwinTone ensures every creator provides written consent, clearly outlining how their content will be used. This approach aligns with legal standards in states like New York and California. Beyond legal compliance, the platform is designed to preserve each creator's unique tone, style, and personality, making sure digital replicas reflect their authentic brand. For creators who are part of unions, existing collective protections apply, while non-union creators benefit from agreements reviewed by legal counsel. These measures create a strong foundation, complemented by advanced tools for brands.

API Tools to Define and Control Usage

TwinTone's API tools give brands the power to manage how AI-generated content is used. Brands can set clear limits on the volume of content produced and specify its use - whether for product demonstrations, livestreams, or social media posts. This helps prevent unauthorized use or "scope creep," where AI content approved for one purpose might unexpectedly show up in another campaign without additional consent.

The API also allows brands to integrate usage tracking into their workflows. This ensures every piece of content adheres to the original licensing terms, offering a safeguard against misuse. Alongside these controls, TwinTone provides valuable performance insights.

Real-Time Analytics for Compliance and Business Insights

TwinTone equips brands with real-time analytics to track both compliance and performance. These tools maintain a detailed audit trail of AI twin creation and consent records. They also flag potential risks, such as misattribution, ensuring that all content aligns with agreed-upon licensing terms. With these analytics, brands can confidently scale their content strategies without sacrificing legal protections or oversight.

Conclusion: Balancing Innovation with Responsibility

The Future of AI Twins in Branding

AI twins can bring incredible opportunities - but only when backed by strong legal and ethical safeguards. When used responsibly, they allow brands to create engaging content around the clock and connect with audiences worldwide. The real challenge isn't the technology itself - it's how brands choose to use it. Companies that prioritize consent and licensing as fundamental elements rather than afterthoughts will be better equipped to treat AI twins as enduring brand assets. The days of operating without clear legal guidelines are numbered, especially as states like Tennessee and California continue to enact laws protecting voice, likeness, and digital replicas.

The brands that succeed in this space will be those that blend innovation with transparency. This means openly acknowledging when content is AI-generated, keeping accurate records of all processes, and ensuring creators provide informed, written consent. As Enrico Schaefer from Traverse Legal aptly explains:

"The window for operating in a legal gray zone is closing. Compliance, transparency, and strong contracts aren't just best practices anymore. They're fast becoming baseline expectations."

The push for innovation must go hand-in-hand with accountability. The following takeaways outline how brands can navigate these responsibilities effectively.

Key Takeaways for Reducing Risks

To use AI twins responsibly, brands must adopt strategies that prioritize both legal compliance and ethical standards. Here are the key areas to focus on:

Obtain state-specific publicity releases: Clearly define the scope, duration, and territory of usage - especially in states like California and New York, where protections are stricter. Be explicit about whether the AI twin can appear in political content or be licensed to third parties.

Maintain thorough audit trails: Keep detailed records of creation and consent processes. These serve as critical evidence if disputes arise.

Ensure mandatory disclosures: Avoid FTC penalties for deceptive practices by being transparent about AI-generated content. Honesty isn’t just a legal requirement - it’s a way to build trust. With 88% of consumers valuing authenticity when choosing brands, being upfront about synthetic content safeguards both your legal standing and your reputation.
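For the audit-trail takeaway above, records are more useful as evidence if later edits to them can be detected. A minimal sketch, assuming an in-memory log and using only Python's standard library: each entry stores a SHA-256 hash that covers its own contents plus the previous entry's hash, so altering or reordering any entry breaks verification. `AuditTrail` and the event names are hypothetical, not part of any platform's API.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(body: dict) -> str:
    """Hash an entry body using its canonical (sorted-key) JSON form."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class AuditTrail:
    """Append-only log where each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries = []

    def record(self, event: str, details: dict) -> dict:
        """Append an event; `details` must be JSON-serializable."""
        prev_hash = self.entries[-1]["hash"] if self.entries else ""
        body = {
            "event": event,
            "details": details,
            "at": datetime.now(timezone.utc).isoformat(),
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; False means an entry was altered or reordered."""
        prev_hash = ""
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.record("consent_signed", {"creator": "creator-001", "release": "REL-1"})
trail.record("twin_created", {"creator": "creator-001", "model": "v1"})
print(trail.verify())  # True
```

A production system would also need durable storage and access controls; the hash chain only makes tampering detectable, it does not prevent it.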

FAQs

What legal risks do brands face when using AI twins without proper consent?

Using AI twins without proper consent can land companies in hot water legally. In many U.S. states, individuals are protected by laws that safeguard their likeness and voice. For example, using an AI twin for marketing or sales without explicit permission could violate right of publicity and privacy laws, particularly in states like California, New York, and Tennessee. The consequences? Brands may face hefty fines, lawsuits, or even court orders halting their campaigns.

On top of that, copyright and trademark laws come into play if the AI twin is modeled on copyrighted performances or images. Without securing the right licenses, companies risk infringement claims, which can lead to expensive legal battles. To steer clear of these pitfalls, obtaining clear, written consent and locking down all necessary licensing agreements is a must.

Platforms such as TwinTone offer solutions by helping brands create AI twins with all the required permissions in place. This ensures the responsible use of AI-generated content for marketing and social commerce, keeping brands both creative and compliant.

How can brands comply with state-specific publicity laws when using AI-generated content?

To navigate the complexities of state-specific publicity laws, brands need to treat each state's regulations as distinct. For instance, New York requires a clear explanation of how a digital replica will be used and legal representation for such agreements. Meanwhile, California demands explicit written consent before a person’s likeness or voice can be used commercially. In states like Tennessee, there are additional expectations, such as clear licensing terms and the ability to stop unauthorized use.

A smart way to handle this is by creating a state-aware licensing process that includes these key steps:

Secure a written release that outlines the media, platforms, duration, and geographic scope for using the AI twin.

Have the release reviewed by a legal expert who understands the specific requirements of the relevant state.

Incorporate the release into a comprehensive contract that aligns with state laws.

Keep a centralized registry to track consents and usage logs for compliance.

Stay updated on legislative changes and revise agreements as necessary.
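The steps above can be sketched as a small registry that reports what is still outstanding for each release. This is an illustration under stated assumptions: `ReleaseRecord`, its fields, and the 180-day recheck interval are hypothetical choices, and the code tracks whether each step was completed rather than encoding any state's actual legal requirements.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass
class ReleaseRecord:
    """Hypothetical registry entry for one creator's publicity release."""
    creator: str
    state: str                                  # governing state, e.g. "CA"
    signed_on: Optional[date] = None            # step 1: written release
    reviewed_for_state: Optional[str] = None    # step 2: state-specific legal review
    contract_id: Optional[str] = None           # step 3: incorporated into a contract
    last_law_check: Optional[date] = None       # step 5: legislative updates

    def open_items(self, today: date, recheck_days: int = 180) -> list:
        """List the steps that still block use of this release."""
        items = []
        if self.signed_on is None:
            items.append("written release not signed")
        if self.reviewed_for_state != self.state:
            items.append(f"no legal review for {self.state}")
        if self.contract_id is None:
            items.append("release not incorporated into a contract")
        stale_after = timedelta(days=recheck_days)
        if self.last_law_check is None or today - self.last_law_check > stale_after:
            items.append("legislative check is stale")
        return items


# Step 4: a centralized registry, here just a dict keyed by creator.
registry = {
    "creator-001": ReleaseRecord(
        creator="creator-001",
        state="CA",
        signed_on=date(2025, 3, 1),
        reviewed_for_state="NY",   # reviewed, but for the wrong state
        contract_id="C-42",
        last_law_check=date(2025, 5, 1),
    )
}
print(registry["creator-001"].open_items(today=date(2025, 6, 1)))
# ['no legal review for CA']
```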

If you're working with TwinTone’s AI-generated creators, following these steps will help ensure proper permissions are in place. This not only safeguards your brand but also respects and protects the rights of the creators involved.

How can brands protect against the misuse of AI-generated likenesses?

To prevent the misuse of AI-generated likenesses, brands must prioritize clear, written consent from individuals whose likenesses are being replicated. This consent should be part of a comprehensive licensing agreement that outlines key details: how the likeness will be used, the duration of use, geographic scope, and a clause allowing for revocation. Such agreements are particularly important in states like California, New York, and Tennessee, where laws specifically address the use of digital replicas.

Beyond legal measures, brands should employ technical safeguards to protect AI-generated content. Embedding watermarks or digital signatures can help track usage and deter unauthorized distribution. Regularly monitoring content and acting quickly to enforce takedown requests are equally important steps. Additionally, ensuring that training data comes exclusively from licensed or consented sources reduces the risk of infringement. By combining legal protections, intellectual property registration, and technical tools, brands can establish a strong framework to guard against misuse.
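As a rough illustration of the digital-signature idea, the sketch below tags each generated asset with an HMAC-SHA256 code that binds the file's bytes to a license identifier. Note the substitution: an HMAC is a shared-secret authentication code, not a public-key signature or a visible watermark, so only parties holding the key can verify it. The function names, the `LIC-2025-001` identifier, and the key are hypothetical.

```python
import hashlib
import hmac


def sign_asset(asset: bytes, license_id: str, key: bytes) -> str:
    """Return a hex tag binding the asset bytes to a license identifier."""
    message = license_id.encode("utf-8") + b"\x00" + hashlib.sha256(asset).digest()
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def verify_asset(asset: bytes, license_id: str, key: bytes, tag: str) -> bool:
    """Check a tag in constant time; False if the asset or license was changed."""
    expected = sign_asset(asset, license_id, key)
    return hmac.compare_digest(expected, tag)


key = b"replace-with-a-secret-key"      # placeholder; keep real keys out of source code
video = b"...rendered video bytes..."   # stand-in for a generated file's contents
tag = sign_asset(video, "LIC-2025-001", key)

print(verify_asset(video, "LIC-2025-001", key, tag))         # True
print(verify_asset(video + b"x", "LIC-2025-001", key, tag))  # False
```

Storing the tag alongside the consent record lets a brand later show which license a given piece of content was produced under.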