AI Stress Detection in Social Media Behavior


AI is now analyzing social media posts to detect stress by examining language, behavior, and visuals. It identifies patterns like negative words, posting habits, and even emojis or facial expressions. This technology helps businesses adjust their messaging, supports mental health studies, and improves social platforms' user care features. However, challenges like privacy concerns, biases, and ethical use remain critical.

Key Points:

How It Works: AI uses text analysis (e.g., word choice, sentiment), behavioral trends (e.g., posting frequency), and visual cues (e.g., emojis, photos) to detect stress.

Applications: Businesses can create stress-sensitive campaigns, platforms can offer mental health resources, and researchers can track community well-being.

Challenges: Privacy risks, misinterpretation of data, and biases in models require careful handling.

AI stress detection has potential but must balance technical accuracy with ethical responsibility.


Stress Indicators in Social Media Behavior

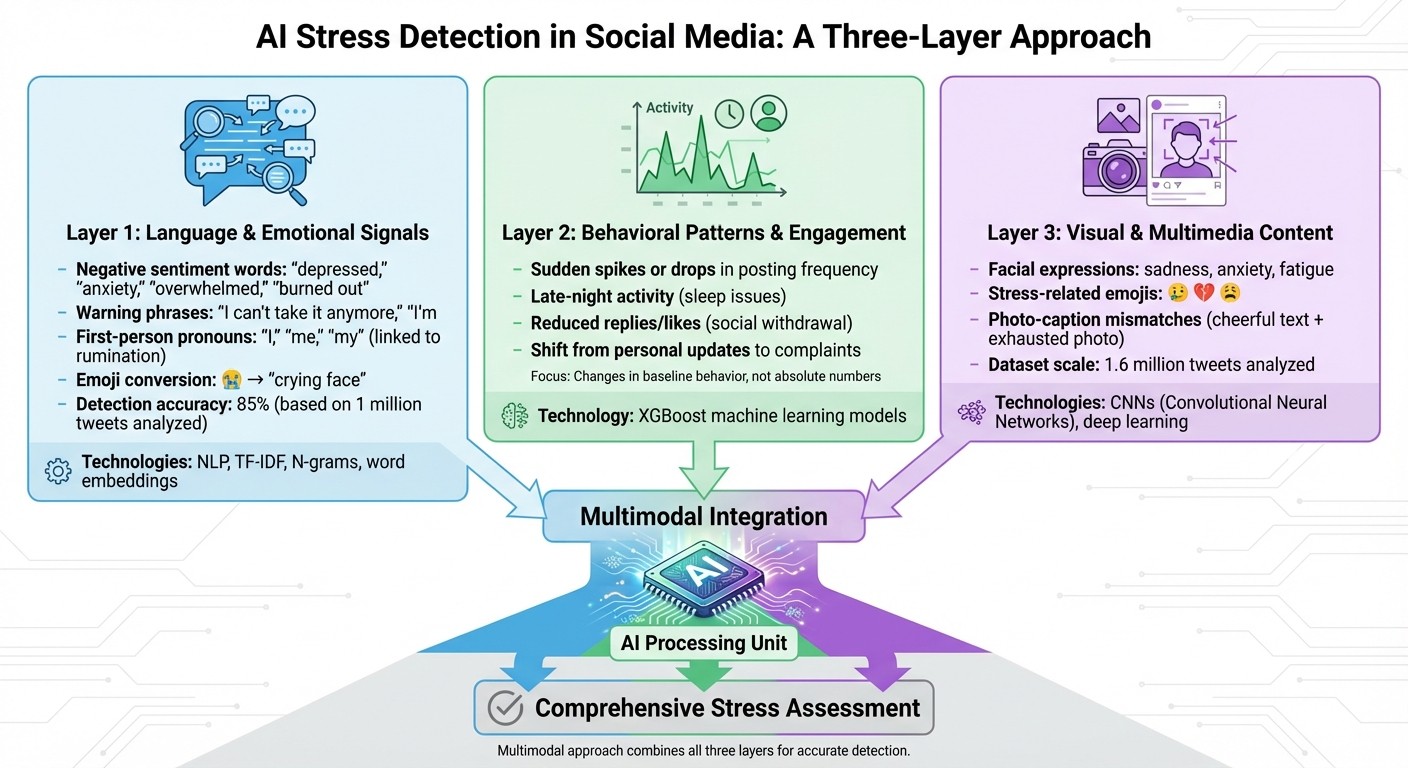

How AI Detects Stress in Social Media: Three-Layer Analysis System

AI systems are increasingly adept at identifying stress by analyzing language, behavior, and visual content. By combining these elements, they achieve a more accurate understanding of emotional states.

Language and Emotional Signals

The words people use online can reveal a lot about their mental health. AI models focus on negative sentiment words like "depressed", "anxiety", "overwhelmed", and "burned out." Phrases such as "I can't take it anymore" or "I'm so alone" often signal distress. For instance, a 2024 study analyzing 1 million tweets found that phrases like these were linked to high stress, with an 85% detection accuracy.

To go beyond simple keyword spotting, AI employs natural language processing techniques like TF-IDF, N-grams, and word embeddings. These methods help the system grasp the context of a message. Particular attention is given to first-person pronouns ("I", "me", "my"), which research connects to self-focused thinking and rumination - both common in stress. Slang and emojis, such as "im so done rn 😭", are converted into standard text during preprocessing to ensure accurate analysis.
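As a rough illustration of two of the signals above, the sketch below computes a first-person pronoun rate and TF-IDF weights over word n-grams using only the Python standard library. The pronoun list, the tokenizer, and the unsmoothed `tf * log(N / df)` weighting are assumptions chosen for brevity, not the method of any study cited here.

```python
import math
import re
from collections import Counter

# Assumed pronoun list for illustration; real studies may use a longer lexicon.
FIRST_PERSON = {"i", "me", "my", "mine", "myself", "i'm", "i've", "i'll", "i'd"}


def tokenize(text):
    """Lowercase the text and keep runs of letters and straight apostrophes."""
    return re.findall(r"[a-z']+", text.lower())


def ngrams(tokens, n):
    """Return word n-grams joined by spaces (n=1 gives plain words)."""
    return [" ".join(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def first_person_rate(text):
    """Share of tokens that are first-person pronouns."""
    tokens = tokenize(text)
    if not tokens:
        return 0.0
    return sum(t in FIRST_PERSON for t in tokens) / len(tokens)


def tfidf(docs, n=1):
    """Return one {term: weight} dict per document, using tf * log(N / df)."""
    term_lists = [ngrams(tokenize(d), n) for d in docs]
    # Document frequency: in how many documents each term appears.
    df = Counter(term for terms in term_lists for term in set(terms))
    total = len(docs)
    weights = []
    for terms in term_lists:
        counts = Counter(terms)
        weights.append({
            term: (count / len(terms)) * math.log(total / df[term])
            for term, count in counts.items()
        })
    return weights


posts = ["I can't take it anymore", "I am fine", "I am so alone"]
print(first_person_rate(posts[0]))
print(tfidf(posts)[0])
```

Terms that appear in every post (here, "i") get a weight of zero, which is why the pronoun rate is tracked as a separate feature rather than left to TF-IDF.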

Explainable AI tools have shown that the words flagged by these models align closely with established psychological research on depression and stress. This alignment reassures researchers that the technology isn't just identifying random patterns but is actually detecting meaningful emotional signals. These linguistic insights work hand-in-hand with behavioral and visual data to create a full picture of stress.

Behavioral Patterns and User Engagement

Stress often changes how people interact on social media. AI monitors posting frequency, noting sudden spikes or drops. Late-night activity can indicate sleep issues, while fewer replies or likes given to others may signal social withdrawal.

Stanford researcher Johannes Eichstaedt created an algorithm that uses machine learning on tweets to assess regional well-being. During the COVID-19 pandemic, his system tracked psychological states like anxiety by analyzing shifts in engagement and posting habits. For example, a user moving from sharing personal updates to posting complaints about work, health, or relationships can be a warning sign.

AI models, such as XGBoost, combine these behavioral metrics with text analysis for a more nuanced understanding of stress. Instead of focusing on absolute numbers, these systems look for changes in a user's baseline behavior. For instance, someone who typically posts twice a week but suddenly starts posting ten times a day raises different concerns than a naturally active user maintaining their usual pace.
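The baseline idea can be sketched as a z-score of the current posting count against the user's own history. This is a minimal sketch: the function name, the weekly counts, and the use of a plain z-score are illustrative assumptions, and a production system would likely feed a score like this into a model such as XGBoost rather than use it alone.

```python
from statistics import mean, stdev


def baseline_deviation(history, current):
    """Z-score of `current` relative to the user's own past counts.

    Returns 0.0 when there is too little history to estimate a baseline.
    """
    if len(history) < 2:
        return 0.0
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        # Perfectly constant history: any change is an infinite deviation.
        if current == mu:
            return 0.0
        return float("inf") if current > mu else float("-inf")
    return (current - mu) / sigma


# Hypothetical weekly post counts for two users.
occasional_poster = [2, 1, 3, 2, 2, 1, 3, 2]
heavy_poster = [68, 72, 70, 69, 71, 70, 73, 67]

# Both users post 70 times this week (about ten posts a day).
print(baseline_deviation(occasional_poster, 70))  # very large deviation
print(baseline_deviation(heavy_poster, 70))       # no deviation
```

The same absolute count produces a large score for the occasional poster and none for the heavy poster, which is the distinction the paragraph above describes.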

Visual and Multimedia Content Analysis

Images and videos shared on social media can also hint at stress. Computer vision models, particularly convolutional neural networks (CNNs), analyze facial expressions in photos and videos to detect sadness, anxiety, or fatigue.

On mobile-first platforms in the U.S., emoji usage has become a key focus. Emojis are converted into text during preprocessing (e.g., 😢 becomes "sad face") and analyzed for patterns. In posts by younger users, stress-related emojis such as crying faces, broken hearts, or exhausted expressions often appear more frequently than explicit stress-related words.

Research involving 1.6 million tweets filtered by mental health keywords incorporates multimedia analysis by examining both emoji usage and image-linked posts. Deep learning models combine these visual elements with text analysis in a multimodal approach. For example, someone might post a cheerful caption alongside a photo showing exhaustion, or vice versa. This integration of text and visuals allows AI to pick up on stress signals that might be missed when analyzing only one type of data.

Together, these language, behavioral, and visual cues form the foundation of the advanced AI technologies discussed in the next section.

AI Technologies for Stress Detection

Specialized AI systems analyze stress indicators by focusing on three key areas: natural language processing (NLP), deep learning architectures, and data pipelines. These technologies form the backbone of stress detection, paving the way for practical applications explored in later sections.

Natural Language Processing (NLP) Methods

NLP techniques convert raw language data into meaningful stress signals. Modern transformer models, like BERT, are at the forefront of stress detection on social media. These models are fine-tuned on mental health datasets to identify contextual patterns in language, recognizing phrases such as "overwhelmed", "burned out", or "panic attacks." Because they read each word in context, they handle negation far better than keyword matching and can pick up some sarcasm, although sarcasm remains difficult. For example, "I'm not stressed" is processed differently from "I'm stressed", a distinction older keyword-based methods often missed.

To make social media content usable for NLP, preprocessing pipelines handle tasks like converting emojis, expanding slang, and managing hashtags or mentions. For instance, a large-scale depression detection study on X/Twitter processed 1.6 million tweets by expanding abbreviations, converting emoticons, and applying specialized stopword lists before training its classifiers.
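A preprocessing step of this kind might look like the following minimal sketch. The emoji and slang tables are tiny hypothetical samples, and the choice to drop mentions and links while keeping hashtag words is an assumption; real pipelines use much larger lexicons.

```python
import re

# Hypothetical lookup tables for illustration only.
EMOJI_TO_TEXT = {
    "😭": "loudly crying face",
    "😢": "sad face",
    "💔": "broken heart",
}
SLANG = {
    "im": "i am",
    "rn": "right now",
    "idk": "i do not know",
    "tbh": "to be honest",
}


def preprocess(post):
    """Normalize a social media post into plain lowercase text."""
    text = post.lower()
    text = re.sub(r"https?://\S+", " ", text)   # drop links
    text = re.sub(r"@\w+", " ", text)           # drop @mentions
    text = re.sub(r"#(\w+)", r"\1", text)       # keep the hashtag word, drop '#'
    for emoji, label in EMOJI_TO_TEXT.items():
        text = text.replace(emoji, f" {label} ")
    # Expand slang word by word; unknown words pass through unchanged.
    words = [SLANG.get(word, word) for word in text.split()]
    return " ".join(words)


print(preprocess("im so done rn 😭"))
# i am so done right now loudly crying face
```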

NLP workflows combine multiple techniques for a comprehensive analysis. Sentiment analysis provides an overall positive, neutral, or negative tone, while emotion classifiers focus on specific feelings like anxiety or frustration. Topic modeling methods, such as LDA, group posts into themes like exams, layoffs, or health concerns - topics often linked to stress. These features are then fed into supervised classifiers to estimate stress levels, either for individual posts or users.

Deep Learning Models for Stress Analysis

Deep learning models play a central role in stress detection. CNNs are effective for short posts, capturing local patterns, whereas LSTMs and transformer models excel at understanding longer, more complex content. Transformers, in particular, use self-attention mechanisms to identify subtle contextual cues and long-range dependencies. In production systems, lightweight versions of transformers can triage high-risk posts, while CNNs or LSTMs handle large-scale, continuous monitoring.

Deep learning also extends beyond text to include visual and audio signals, enabling a multimodal approach. For example, CNNs such as ResNet and vision transformers analyze facial expressions, identifying stress-related micro-expressions such as furrowed brows or tightened lips. On the audio side, models extract features like pitch, speaking rate, and pauses, using these signals to classify stress levels. A 2024 review of wearable AI for stress detection reported impressive accuracy - 85.6% for distinguishing stress from no stress, and 94.4% for differentiating multiple stress levels.

Multimodal systems combine text, visual, and audio data through techniques like early fusion (merging all inputs before classification) or late fusion (integrating outputs from separate models). Late fusion is particularly useful for platforms like X, where data from certain modalities (e.g., muted videos) might be unavailable.
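Late fusion with a missing modality can be sketched as a weighted average over whichever per-modality scores are available. The modality names, weights, and scores below are hypothetical; in practice the weights would be tuned on validation data or replaced by a learned fusion model.

```python
def late_fusion(scores, weights):
    """Weighted average of per-modality stress probabilities.

    Modalities whose score is None (unavailable) are skipped and the
    remaining weights are renormalized. Returns None if nothing is available.
    """
    available = {m: s for m, s in scores.items() if s is not None}
    if not available:
        return None
    total_weight = sum(weights[m] for m in available)
    return sum(weights[m] * s for m, s in available.items()) / total_weight


# Hypothetical weights and model outputs.
weights = {"text": 0.5, "image": 0.3, "audio": 0.2}
muted_video_post = {"text": 0.8, "image": 0.6, "audio": None}

print(late_fusion(muted_video_post, weights))  # audio is ignored
```

Because each modality is scored by its own model, a missing input only removes one term from the average. With early fusion, the combined feature vector would need imputation or a separate model for each combination of inputs.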

Data Collection and Preparation

Creating accurate stress detection models starts with gathering large datasets from platforms like X and Reddit. Initial datasets often rely on weak supervision, using keywords like "stressed" or "anxious" to identify relevant posts. However, not every post with these terms reflects genuine stress, so reducing noise in the labels is a critical step.

To improve labeling accuracy, weakly supervised data is often supplemented with expert-annotated or crowd-sourced labels. Expert annotation, involving clinicians or trained professionals, provides high-quality labels for smaller datasets. Crowd-sourced annotation, while scalable, requires strict quality controls, such as inter-annotator agreement checks, to maintain reliability.
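One common inter-annotator agreement check for two annotators is Cohen's kappa, which corrects raw agreement for the agreement expected by chance. The sketch below is a plain-Python version; the label names and sample annotations are made up for illustration.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators labeling the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both annotators must label the same non-empty set")
    n = len(labels_a)
    # Observed agreement: share of items where the two labels match.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement from each annotator's label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used a single identical label throughout
    return (observed - expected) / (1 - expected)


annotator_1 = ["stress", "stress", "none", "none"]
annotator_2 = ["stress", "none", "none", "none"]
print(cohens_kappa(annotator_1, annotator_2))  # 0.5
```

A team might require kappa above some agreed threshold before accepting a batch of crowd-sourced labels; the threshold itself is a project decision, not something fixed by the statistic.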

After labeling, the data is cleaned and split for modeling. Stratified splits keep the ratio of stressed to non-stressed examples consistent across training and test sets, and cross-validation gives a more reliable estimate of how well a model generalizes, which helps expose overfitting to noisy labels. For U.S. English, it’s essential to account for variations in dialect, regional slang, and demographic language patterns to minimize bias. This is especially crucial when these models are used in applications like customer-facing analytics or automated systems. Properly prepared datasets are the foundation for accurate and ethical AI-driven stress detection.
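A stratified split can be sketched in a few lines of standard-library Python. The function name, the 80/20 ratio, and the toy label distribution are assumptions for illustration; libraries such as scikit-learn provide more complete versions of the same idea.

```python
import random
from collections import defaultdict


def stratified_split(labels, test_fraction=0.2, seed=0):
    """Split item indices into train/test while preserving label proportions."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for index, label in enumerate(labels):
        by_label[label].append(index)
    train, test = [], []
    for indices in by_label.values():
        rng.shuffle(indices)
        # Take the same fraction of every class for the test set.
        cut = round(len(indices) * test_fraction)
        test.extend(indices[:cut])
        train.extend(indices[cut:])
    return sorted(train), sorted(test)


# Imbalanced toy dataset: 10 stressed posts, 40 non-stressed posts.
labels = ["stress"] * 10 + ["none"] * 40
train_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(test_idx))  # 40 10
```

With a purely random split, a rare "stress" class could end up under- or over-represented in the test set, which would distort accuracy estimates.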

Applications of AI Stress Detection

Consumer Sentiment Analysis for Brands

Brands are tapping into AI stress detection to dig deeper into customer emotions, identifying signs of burnout, anxiety, and other stress-related markers in online conversations. Unlike traditional sentiment analysis, these models pick up on nuanced indicators like cognitive overload, rumination, threatening language, and exhaustion, often found in social media posts.

Take a consumer electronics brand, for example. By scanning platforms like X (formerly Twitter) and TikTok for terms such as "overwhelmed" or "burned out", they can tailor their messaging to be more supportive, using phrases like "simplify your day." This approach not only creates emotionally resonant campaigns but also enhances customer support for users who consistently exhibit stress signals.

Marketing teams in the U.S. can put these insights to work by segmenting audiences into groups like "high-stress service complainers" or "stressed students before exams." They can then fine-tune their strategies - adjusting the tone, frequency, and channels of their messaging. For instance, they might A/B test ads featuring calmer visuals or reassurance-based copy, tracking metrics like click-through rates, watch time, and unsubscribe rates. Alongside these, they can monitor emotional KPIs and commercial outcomes, such as better conversion rates, reduced churn, and increased revenue per user, ensuring that stress-aware campaigns not only foster retention but also strengthen brand reputation.

Social Media Platform Features

The insights from AI stress detection aren't just for brands - they’re also influencing how social media platforms prioritize user well-being.

Platforms can use these insights to introduce features like safety prompts, tweak feed algorithms to highlight calming content, and provide contextual nudges such as "Are you okay?" for users posting high-stress content. By identifying language tied to crises - like mentions of self-harm or severe anxiety - platforms can discreetly share mental health resources, such as hotlines or coping tips, through in-app messages or directly in users' feeds.

When it comes to content moderation, AI stress detection helps platforms take a more nuanced approach. Instead of outright removing posts, the technology can flag those that combine high-stress language with harassment or threats for urgent human review. For users repeatedly posting stressed content in heated discussions, platforms might limit the reach of such posts to prevent pile-ons while still allowing direct access. This tiered system - signal, prioritize, and support - enables platforms to uphold community guidelines thoughtfully, balancing safety and free expression.
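The tiered logic above could be expressed as a small routing function. This is a hypothetical sketch: the action names, the 0.7 threshold, and the boolean inputs are assumptions, and the stress score and flags would come from upstream classifiers that are not shown here.

```python
def triage(stress_score, has_threat, has_crisis_language, threshold=0.7):
    """Map detection signals to a list of platform actions.

    stress_score: model probability in [0, 1] that the post shows high stress.
    has_threat: True if a separate classifier flagged harassment or threats.
    has_crisis_language: True if the post mentions self-harm or severe anxiety.
    """
    actions = []
    high_stress = stress_score >= threshold
    if high_stress and has_threat:
        # Prioritize: send to a human reviewer instead of auto-removing.
        actions.append("urgent_human_review")
    if has_crisis_language:
        # Support: discreetly surface hotlines or coping resources.
        actions.append("share_support_resources")
    if high_stress and not actions:
        # Signal only: a light contextual nudge such as "Are you okay?"
        actions.append("gentle_check_in")
    return actions


print(triage(0.9, has_threat=True, has_crisis_language=False))
print(triage(0.8, has_threat=False, has_crisis_language=False))
```

Returning a list rather than a single label lets one post trigger both human review and support resources when both conditions apply.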

These interventions also pave the way for more emotionally responsive social commerce experiences.

Integration with Social Commerce Automation

AI-powered tools in social commerce, like TwinTone, are leveraging stress detection to create more empathetic interactions. TwinTone, for instance, uses AI to transform real creators into AI Twins for live shopping streams and on-demand videos. It can analyze customer comments for stress indicators like "shipping delays" or "can't afford this right now", and adjust its messaging to offer reassurance, flexible payment options, or clear shipping updates.

If customer feedback signals rising frustration - such as an increase in complaints or repeated messages - the AI host can slow down product pitches, acknowledge concerns, and extend Q&A sessions. For pre-recorded content, stress-aware models might opt for softer visuals, slower pacing, and supportive messaging to ensure the tone remains sensitive, avoiding the risk of a pushy "hard sell" approach.

These systems rely on inputs like public posts, comments, reactions, and timestamps. They integrate with tools such as social listening platforms, which feed stress data into CRM profiles, ad platforms for targeting adjustments, and commerce systems like TwinTone to refine scripts and interaction strategies.

Challenges and Ethical Considerations

Privacy and Data Ethics

As AI stress detection systems delve into real user data, ethical concerns take center stage, especially regarding privacy. Social media platforms using AI for stress detection face intense scrutiny. For example, a study analyzing 1.6 million tweets with mental health-related keywords like "depressed" and "anxiety" revealed significant privacy risks, as much of the data was collected without explicit user consent. Even though these posts are public, users often don’t anticipate their emotional expressions being analyzed or cataloged by AI systems.

The use of weak labeling techniques, such as keyword filtering, compounds the problem. Casual remarks can be misinterpreted as genuine stress, potentially exposing sensitive psychological data. For instance, a simple comment about feeling "stressed" over daily tasks could be flagged inaccurately, leading to unnecessary privacy violations.

To address these issues, platforms must adopt strong consent mechanisms. Opt-in systems, transparent privacy policies detailing how stress indicators are used, and anonymization techniques like tokenization are critical. U.S.-based platforms also need to comply with regulations like the CCPA. Moreover, employing mental health–specific preprocessing methods - such as converting emoticons to text or relying on expert-driven annotation instead of basic keyword matching - can refine data analysis while safeguarding privacy. These measures are crucial to balancing user protection with the system’s functionality.
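One way to approach the tokenization step is to replace user identifiers with keyed hashes before any analysis. The sketch below uses HMAC-SHA256 from the Python standard library; the function name and key handling are illustrative assumptions. Note that this is pseudonymization rather than full anonymization: anyone holding the key can recompute the mapping, so the key must be stored separately and protected.

```python
import hashlib
import hmac


def pseudonymize(user_id, secret_key):
    """Replace a user ID with a stable, keyed SHA-256 token.

    The same ID and key always give the same token, so per-user baselines
    still work, but the raw handle never enters the analysis dataset.
    """
    return hmac.new(secret_key, user_id.encode("utf-8"), hashlib.sha256).hexdigest()


# Hypothetical key; in practice this would come from a secrets manager.
key = b"replace-with-a-long-random-secret"
token = pseudonymize("@example_user", key)
print(token)
```

A keyed hash is used instead of a plain hash because usernames are easy to guess, and an unkeyed hash of a guessable value can be reversed by brute force.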

Bias in AI Models

Fairness in AI models is another pressing challenge. The quality of training data plays a pivotal role in ensuring equitable outcomes. Research shows that models trained on larger datasets with subjective labels achieve only 76.9% accuracy, compared to 94.7% for smaller datasets with objective labels. This gap disproportionately affects underrepresented groups, including non-English speakers, minorities, and users with diverse linguistic styles, increasing the risk of misclassification.

Keyword filtering further exacerbates these biases. Words like "depressed" or "sad" may dominate certain demographics while failing to capture stress signals expressed through unique linguistic patterns. For instance, models trained primarily on English-language Twitter posts may overlook stress indicators embedded in regional slang or cultural expressions.

To reduce bias, training datasets need to be more inclusive and diverse. Fairness-aware algorithms and tools like LIME (Local Interpretable Model-Agnostic Explanations) can help validate predictions, ensuring that linguistic markers align with established psychological insights. These steps are essential to creating systems that work fairly across different populations.
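A basic bias audit can start by comparing accuracy across groups. The sketch below is a minimal example with made-up labels and group names; real audits would also look at false positive and false negative rates, since overall accuracy can hide which kind of error a group experiences.

```python
from collections import defaultdict


def accuracy_by_group(y_true, y_pred, groups):
    """Return {group: accuracy} for predictions split by a group attribute."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        total[group] += 1
        correct[group] += int(truth == pred)
    return {group: correct[group] / total[group] for group in total}


def max_accuracy_gap(per_group):
    """Largest difference in accuracy between any two groups."""
    values = list(per_group.values())
    return max(values) - min(values)


# Hypothetical labels: 1 = stressed, 0 = not stressed.
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 0, 1, 1, 0, 0]
groups = ["dialect_a"] * 4 + ["dialect_b"] * 4

per_group = accuracy_by_group(y_true, y_pred, groups)
print(per_group)                    # {'dialect_a': 1.0, 'dialect_b': 0.5}
print(max_accuracy_gap(per_group))  # 0.5
```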

Maintaining Empathy in Automation

Even with accurate detection and fairness, AI systems must be designed to respond empathetically. Missteps in automated responses can lead to unintended consequences. For example, a post like "sad movie" might be flagged as a sign of depression, while sarcasm or humor used to cope with real stress could go unnoticed. Such false positives and negatives can stigmatize users or result in insensitive interventions.

Platforms like TwinTone, which adjust messaging based on detected stress, must tread carefully to balance empathy with commercial goals. If an AI system ramps up promotional efforts in response to financial stress signals, it risks crossing ethical boundaries. During events like the COVID-19 pandemic, poorly calibrated stress detection algorithms have been shown to unintentionally amplify anxiety among users.

Human oversight remains vital in preserving empathy within these systems. While tools like LIME can trace AI predictions back to psychological cues, expert validation ensures that the system doesn’t worsen user concerns. Integrating sentiment analysis with transparent decision-making processes - so users understand why specific content or offers are shown - can help platforms build trust. By doing so, they can maintain the human touch that pure automation often lacks.

Conclusion

AI stress detection is changing the way we understand behavior on social media. By analyzing language, engagement patterns, visuals, and interactions, modern AI systems uncover stress signals that often go unnoticed with traditional sentiment analysis methods. Advanced models like CNNs, LSTMs, and transformers can now flag likely psychological distress in social media data in near real time.

These detection capabilities offer brands and platforms a chance to gain a deeper understanding of emotional states. Stress-aware AI systems can group audiences by their emotional state, adapt messaging during high-stress times, or flag critical cases for manual intervention. For instance, TwinTone’s platform can incorporate these insights into its social commerce workflows, using AI Twins to create user-generated content videos and host livestreams with a tone that feels supportive and understanding.

Beyond brand applications, AI’s role in mental health support is expected to remain assistive rather than diagnostic. On a larger scale, stress mapping at the population level can help U.S. health systems allocate resources more effectively and monitor crises. On an individual level, these tools can encourage users to take breaks or seek help when needed.

However, responsible use of these technologies is key. This means prioritizing data minimization, conducting bias audits, and setting clear ethical guidelines. Models must work fairly across all demographics, and brands should focus on using stress insights to foster trust and build more empathetic connections.

As AI stress detection evolves, the organizations that succeed will be those that combine technical expertise with genuine care - leveraging these tools to support users and create healthier digital environments for everyone.

FAQs

How does AI protect user privacy when identifying stress on social media?

AI does not protect privacy on its own; the teams that build and deploy it do. Responsible stress detection systems use rigorous data security protocols, anonymize personal details whenever feasible, and are designed to comply with key privacy regulations like GDPR and CCPA, so that social media data is managed responsibly and ethically.

These measures not only prevent the misuse of sensitive information but also allow AI to identify stress patterns effectively - without sacrificing individual privacy.

How does AI ensure fairness and accuracy when detecting stress in social media behavior?

To promote equity and precision, AI models rely on diverse datasets that reflect a broad spectrum of social and cultural backgrounds. Developers incorporate fairness algorithms to reduce biases and perform regular bias audits to uncover and resolve any concerns. Moreover, outputs are consistently reviewed and adjusted to ensure dependable and fair results in stress detection.

How can businesses responsibly use AI to detect stress in social media behavior?

Businesses can use AI responsibly for stress detection by placing a strong emphasis on transparency and user consent. Users should be clearly informed about how their social media data is being analyzed and must provide explicit agreement before any data is used. At the same time, implementing robust data privacy measures is critical to protect sensitive information and prevent any form of misuse.

The insights generated by AI can play a key role in improving customer experiences. For example, businesses can use these insights to offer better support, tailor interactions to individual needs, and raise awareness about mental health. When ethical practices are prioritized, companies can not only leverage AI effectively but also foster trust and create meaningful, positive outcomes for their audience.