AI Moderation for UGC Platforms: What to Know


AI moderation is reshaping how platforms manage user-generated content (UGC) at scale. With billions of posts, videos, and images uploaded daily, platforms like YouTube, Reddit, and Roblox rely on artificial intelligence to filter harmful material quickly and efficiently. AI systems use machine learning, natural language processing (NLP), and computer vision to detect violations, protect users, and reduce the burden on human moderators. This technology also powers AI messaging platforms that facilitate safe, 24/7 interactions between creators and fans.

Key points:

Massive scale: Platforms like YouTube process 500+ hours of video uploads every minute.

AI efficiency: AI detects harmful content in milliseconds, handling tasks human teams can't manage alone.

Human-AI balance: AI handles high-volume filtering, while humans review complex or context-heavy cases.

Challenges: False positives, context gaps, and language limitations remain hurdles.

Best practices: Combine AI with human oversight, regularly update models, and define clear moderation policies.

AI moderation is essential for maintaining safe, engaging online spaces while addressing the challenges of scale and complexity. Platforms must prioritize clear rules, hybrid systems, and ongoing improvements to keep pace with evolving threats.


How AI Moderation Works

AI moderation relies on machine learning models trained on massive datasets of text, images, and videos. These systems can identify safe versus harmful content by analyzing factors like intent, tone, and context - all in a matter of milliseconds.

Content Analysis and Filtering

AI systems examine posts from multiple angles. For images and videos, computer vision models analyze frames to detect explicit content, violent scenes, or weapons using visual patterns and cues. For text embedded in visuals, like memes or screenshots, Optical Character Recognition (OCR) extracts the words so they can be checked as well, which makes it harder for users to sidestep text-based filters. Once analyzed, the AI assigns confidence scores (ranging from 0 to 1) to categorize potential violations, such as hate speech, harassment, or violence. If a score crosses a predefined threshold, the content gets flagged, blocked, or shadow-blocked (hidden from other users while remaining visible to its author), depending on the platform's rules.
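The threshold step can be sketched in a few lines of Python. This is a minimal illustration, assuming hypothetical category names and per-category threshold values chosen only for the example; real platforms tune these numbers to their own policies and risk tolerance. When several categories fire at once, the sketch keeps the most severe action.

```python
# Minimal sketch: map per-category confidence scores (0 to 1) to an action.
# Category names and threshold values are illustrative assumptions,
# not any platform's real policy.

ALLOW = "allow"
FLAG = "flag_for_review"
SHADOW_BLOCK = "shadow_block"
BLOCK = "block"

# Ordering used to pick the most severe action across categories.
RANK = {ALLOW: 0, FLAG: 1, SHADOW_BLOCK: 2, BLOCK: 3}

# Hypothetical per-category thresholds: (flag_at, shadow_block_at, block_at)
THRESHOLDS = {
    "hate": (0.50, 0.75, 0.90),
    "harassment": (0.55, 0.80, 0.95),
    "violence": (0.60, 0.85, 0.95),
}


def decide(scores: dict[str, float]) -> str:
    """Return the most severe action triggered by any category score."""
    action = ALLOW
    for category, score in scores.items():
        if category not in THRESHOLDS:
            continue  # categories without a policy are ignored in this sketch
        flag_at, shadow_at, block_at = THRESHOLDS[category]
        if score >= block_at:
            candidate = BLOCK
        elif score >= shadow_at:
            candidate = SHADOW_BLOCK
        elif score >= flag_at:
            candidate = FLAG
        else:
            candidate = ALLOW
        if RANK[candidate] > RANK[action]:
            action = candidate
    return action
```

For instance, `decide({"hate": 0.62, "violence": 0.10})` returns `"flag_for_review"`, while a harassment score of 0.97 returns `"block"`. Keeping the thresholds in data rather than in code lets policy teams adjust them without retraining or redeploying the model.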

Modern systems also handle multimodal inputs, combining text and images to catch more subtle violations. For example, OpenAI’s moderation endpoint - currently available at no cost - can evaluate categories like harassment, hate, self-harm, sexual content, violence, and illegal activity across both text and images.

This initial filtering lays the groundwork for more advanced linguistic analysis powered by natural language processing (NLP).

Machine Learning and Natural Language Processing (NLP)

NLP enables AI to grasp deeper complexities, such as grammar, tone, slang, and even deliberate misspellings. Large Language Models (LLMs) take this a step further by analyzing entire conversations or threads instead of isolated words. This broader context helps detect subtle forms of harassment or misinformation that traditional keyword-based systems might overlook. These models can also identify the language of incoming content and apply the appropriate moderation rules across more than 30 languages.
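To show why thread-level context matters, here is a minimal sketch of how a pipeline might package a message together with the messages that precede it before sending it to a language model. The `Message` type, the window size, and the `>>>` marker for the target message are illustrative assumptions, and the model call itself is not shown.

```python
from dataclasses import dataclass


@dataclass
class Message:
    speaker: str
    text: str


def build_context(thread: list[Message], index: int, window: int = 3) -> str:
    """Join up to `window` preceding messages with the target message.

    The target is prefixed with '>>>' so a downstream model (not shown)
    knows which message it is being asked to judge.
    """
    if not 0 <= index < len(thread):
        raise IndexError("index out of range")
    start = max(0, index - window)
    lines = [f"{m.speaker}: {m.text}" for m in thread[start:index]]
    target = thread[index]
    lines.append(f">>> {target.speaker}: {target.text}")
    return "\n".join(lines)


thread = [
    Message("a", "nice build"),
    Message("b", "you again?"),
    Message("b", "nobody wants you here"),
    Message("b", "just leave"),
]
context = build_context(thread, 3, window=2)
```

On its own, "just leave" looks harmless; preceded by "nobody wants you here," it reads as part of a harassment pattern, which is exactly the kind of signal a keyword filter scanning single messages would miss.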

AI systems go beyond basic detection by classifying content into severity levels - Low, Medium, High, or Critical - allowing platforms to prioritize their responses. Advanced techniques like sentiment analysis and entity recognition further help in uncovering the real intent behind user posts, making the moderation process more accurate and effective.
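A minimal sketch of severity tiering, assuming the classifier has already flagged an item with a category and a confidence score. The score cutoffs and the per-category minimum severities below are hypothetical; the idea they illustrate is that some categories, such as CSAM, are always treated as urgent regardless of how confident the model is.

```python
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4


# Hypothetical minimum severity per category.
CATEGORY_FLOOR = {
    "csam": Severity.CRITICAL,
    "self_harm": Severity.HIGH,
    "hate": Severity.MEDIUM,
    "spam": Severity.LOW,
}


def severity(category: str, score: float) -> Severity:
    """Combine classifier confidence with a per-category floor.

    Intended for content that has already been flagged.
    """
    if score >= 0.9:
        by_score = Severity.HIGH
    elif score >= 0.7:
        by_score = Severity.MEDIUM
    else:
        by_score = Severity.LOW
    floor = CATEGORY_FLOOR.get(category, Severity.LOW)
    return max(by_score, floor)
```

Because `Severity` is an `IntEnum`, flagged items can be sorted by it directly to decide which ones to act on first.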

Real-Time Moderation

Building on detailed content analysis, AI-powered real-time moderation ensures quick action against harmful material. When content can spread within seconds, speed becomes crucial. AI engines review content instantly and take immediate steps, such as flagging it for human review, automatically blocking it, or shadow-blocking it based on pre-set policies.

This rapid response usually runs on infrastructure built to absorb traffic spikes, such as serverless architectures and scalable services like AWS Lambda and Amazon SageMaker. Platforms often choose between two approaches: pre-moderation, where content is reviewed before going live, and post-moderation, where content is published immediately while AI reviews it in the background. Pre-moderation works well for high-security environments, such as child-safe apps, while post-moderation suits social platforms where instant interaction is key. Many platforms also configure automated actions for high-severity issues, so those are handled immediately without waiting for human input.
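The difference between the two approaches comes down to when the check runs relative to publication. The sketch below assumes a hypothetical `classify` callable that returns `True` for policy violations, and it keeps everything in memory; a production system would use message queues and a database instead.

```python
from typing import Callable


class Feed:
    """Toy feed supporting either pre-moderation or post-moderation."""

    def __init__(self, classify: Callable[[str], bool], pre_moderate: bool):
        self.classify = classify  # hypothetical model: True means violation
        self.pre_moderate = pre_moderate
        self.visible: list[str] = []
        self.pending: list[str] = []  # post-moderation review backlog

    def submit(self, content: str) -> bool:
        """Return True if the content is visible right after submission."""
        if self.pre_moderate:
            # Pre-moderation: nothing goes live until the check passes.
            if self.classify(content):
                return False
            self.visible.append(content)
            return True
        # Post-moderation: publish immediately, review in the background.
        self.visible.append(content)
        self.pending.append(content)
        return True

    def run_background_review(self) -> list[str]:
        """Review the backlog, take down violations, and return them."""
        removed = [c for c in self.pending if self.classify(c)]
        self.visible = [c for c in self.visible if c not in removed]
        self.pending.clear()
        return removed
```

With `pre_moderate=True`, a violating post never becomes visible; with `pre_moderate=False`, it stays visible until `run_background_review()` runs. That window is the latency trade-off that makes post-moderation feel instant to users.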

Benefits of AI Moderation for UGC Platforms

AI Moderation Statistics: Scale and Performance Metrics for UGC Platforms

Handling Massive Content Volumes

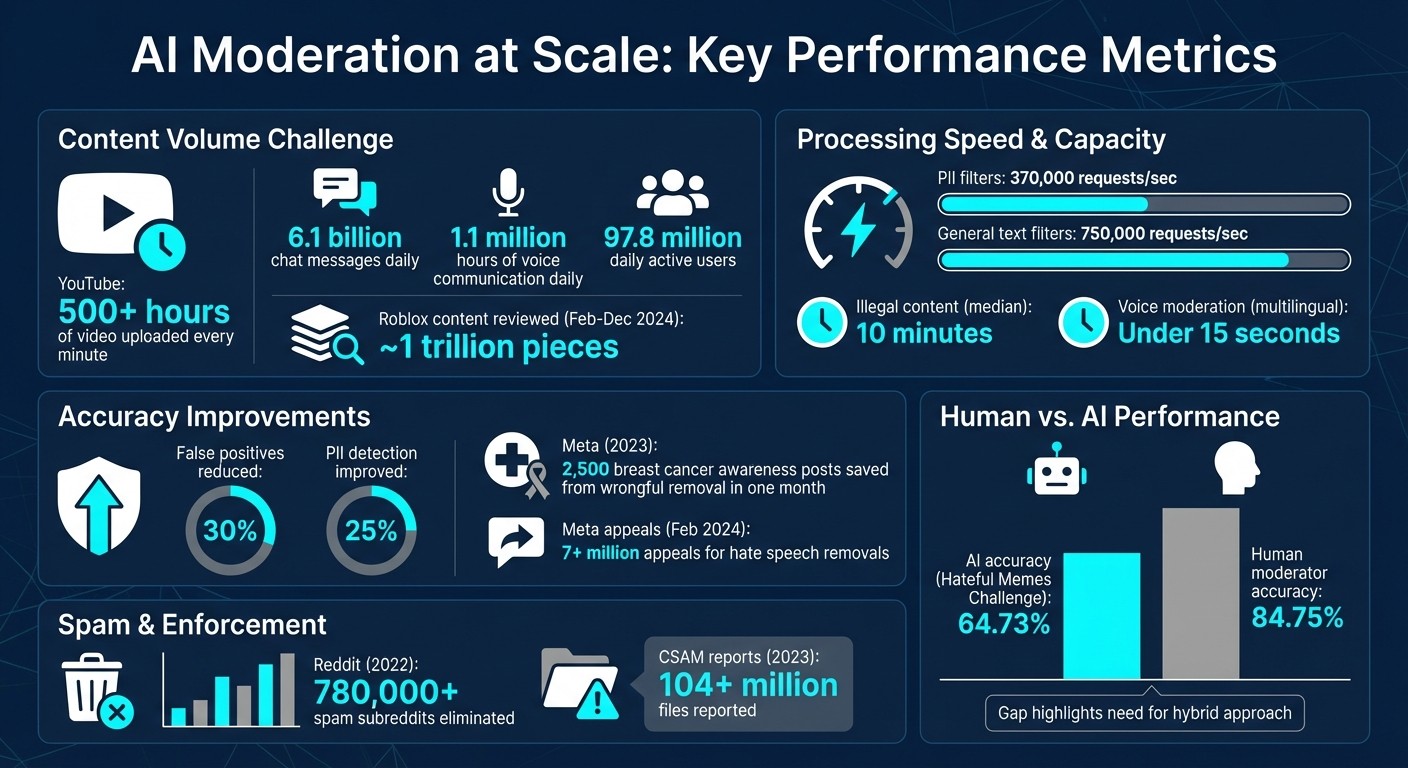

User-generated content (UGC) platforms deal with staggering amounts of uploads and interactions daily. Take YouTube, for instance - users upload over 500 hours of video every minute. On Roblox, users exchange 6.1 billion chat messages and engage in 1.1 million hours of voice communication every single day. Between February and December 2024, Roblox users collectively uploaded around 1 trillion pieces of content. Managing this scale is far beyond what human moderators can handle alone.

"Consistently moderating this volume of content within milliseconds is a job that humans cannot manage alone - irrespective of how many we have."

– Naren Koneru, Vice President of Engineering, Safety, Roblox

To tackle this challenge, Roblox's engineering team, led by Naren Koneru, revamped their moderation system in July 2025. They introduced a GPU-based serving stack and used model quantization to dramatically increase capacity. The system now processes 370,000 requests per second (RPS) for Personally Identifiable Information (PII) filters and 750,000 RPS for general text filters. This upgrade ensures that most violations are filtered out before they even reach the platform's 97.8 million daily active users. These advancements not only improve efficiency but also reduce operational costs significantly.
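Model quantization stores weights at lower precision so a serving stack can fit more model replicas, and serve more requests, per GPU. The article does not describe Roblox's exact scheme; the sketch below shows one common variant, symmetric per-tensor int8 quantization, written in plain Python for clarity rather than as a GPU implementation.

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer code and clip to the int8 range.
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate float values from the int8 codes."""
    return [c * scale for c in codes]
```

Each weight now fits in 8 bits instead of 32, a 4x reduction in weight memory, at the cost of a rounding error of at most half the scale factor per value.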

Saving Time and Cutting Costs

AI moderation reshapes how platforms handle content review, offering a cost-effective alternative to scaling up human moderation teams. With AI, platforms can handle growing content volumes without needing to hire hundreds of thousands of moderators, keeping operational costs in check. The speed is unparalleled - AI systems make decisions in milliseconds, while manual review of billions of messages would require an immense human workforce working around the clock.

In 2022, Reddit eliminated over 780,000 spam subreddits. Similarly, Roblox's 2025 updates reduced false positives by 30% and improved PII detection rates by 25%. The median response time for addressing illegal content dropped to just 10 minutes, and voice moderation systems now handle audio content in under 15 seconds across multiple languages. These gains came from technical advancements, like separating tokenization from inference and employing model distillation, rather than adding more staff. The result? Faster moderation, better safety, and lower costs.
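Model distillation trains a smaller "student" model to reproduce the output distribution of a larger "teacher," so the cheaper model can serve most of the traffic. The source does not say how Roblox applied it; as an illustration only, the sketch below computes the standard soft-target distillation loss (KL divergence between temperature-softened teacher and student distributions, scaled by T²) in plain Python. In practice this term is usually combined with an ordinary loss on the true labels.

```python
import math


def softmax(logits: list[float], temperature: float = 1.0) -> list[float]:
    """Temperature-scaled softmax; higher temperature gives softer output."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: list[float],
                      teacher_logits: list[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on softened distributions, scaled by T^2."""
    p = softmax(teacher_logits, temperature)  # soft targets from the teacher
    q = softmax(student_logits, temperature)  # student predictions
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl
```

The loss is zero when the student matches the teacher exactly and grows as their softened distributions diverge, which is what pushes the student toward the teacher's behavior.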

Enhancing User Safety

In the fast-paced world of online platforms, harmful content can spread in seconds. AI moderation steps in to prevent this by identifying and blocking violations before they reach users. It proactively scans text, images, and videos in real time, eliminating the need to rely solely on user reports. This not only protects users from harmful material but also spares human moderators from the emotional toll of reviewing distressing content.

Users notice the difference. Roblox, for example, reports a median response time of about 10 minutes for illegal content, a vast improvement over traditional methods. Real-time feedback also helps users quickly understand and follow platform rules. Notably, 30% of users aged 18–34 say they support stricter content moderation policies, a sign that a meaningful share of younger users want more moderation, not less. Effective moderation can build that trust and encourage engagement, creating safer and more welcoming online spaces.

Challenges and Limitations of AI Moderation

AI moderation, while offering many advantages, faces a range of challenges that impact its ability to operate fairly and accurately.

False Positives and False Negatives

One of the biggest hurdles for AI moderation is finding the right balance between false positives and false negatives. False positives occur when the system mistakenly flags legitimate content, while false negatives happen when harmful material slips through undetected. This balancing act is a constant struggle for platforms. For instance, in February 2024, Meta reported receiving over 7 million appeals from users whose content was removed under hate speech rules.

A major factor behind these errors is the difficulty AI has with understanding context. For example, it often struggles to differentiate between posts meant to educate or raise awareness - like breast cancer awareness campaigns - and content that violates platform guidelines, such as prohibited nudity. In 2023, Meta enhanced its AI's contextual understanding for breast cancer awareness content, successfully preventing the wrongful removal of 2,500 posts in just one month. On the flip side, false negatives continue to allow harmful material, including deepfake intimate images, hate speech, and coded language designed to bypass detection, to circulate. Such failures can erode user trust and compromise safety.
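The trade-off is easiest to see by counting outcomes on a labeled sample at different thresholds. The scores and labels below are toy values invented for illustration, not data from Meta or any other platform.

```python
def confusion_at(scores: list[float], labels: list[bool],
                 threshold: float) -> dict[str, int]:
    """Count outcomes when content with score >= threshold is flagged.

    labels[i] is True when item i really violates policy.
    """
    counts = {"tp": 0, "fp": 0, "fn": 0, "tn": 0}
    for score, is_violation in zip(scores, labels):
        flagged = score >= threshold
        if flagged and is_violation:
            counts["tp"] += 1  # correctly flagged
        elif flagged:
            counts["fp"] += 1  # false positive: legitimate content flagged
        elif is_violation:
            counts["fn"] += 1  # false negative: harmful content missed
        else:
            counts["tn"] += 1  # correctly allowed
    return counts


# Toy data: six scored items with ground-truth labels.
scores = [0.95, 0.80, 0.65, 0.40, 0.30, 0.10]
labels = [True, False, True, True, False, False]
for t in (0.35, 0.5, 0.9):
    print(t, confusion_at(scores, labels, t))
```

On this toy sample, raising the threshold from 0.35 to 0.9 removes the single false positive but lets two real violations slip through, which is the balancing act described above in miniature.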

Context and Cultural Differences

AI moderation systems perform much better in English than in other languages, which creates a significant fairness issue. These systems are heavily reliant on "high-resource" languages with large datasets for training, but they struggle when it comes to "low-resource" languages like Yoruba or Swahili, where digitized text is scarce. Multilingual models often inherit biases from their English training data, which can lead to the imposition of Western values or gender stereotypes on other languages. For instance, text in gender-neutral languages like Chinese can end up with gender associations that don't naturally exist.

Cultural nuances add another layer of complexity. AI frequently misinterprets African American Vernacular English (AAVE) or reclaimed terms as offensive. It also struggles to distinguish between harmful speech and critiques of such speech.

"Calling something hate speech is not an act of classification, that is either accurate or mistaken. It is a social and performative assertion."

– Tarleton Gillespie, senior principal researcher at Microsoft Research

Additionally, users often exploit AI's weaknesses with coded language and intentional misspellings, such as "unalive" for suicide or "c0vid" for COVID, to dodge filters, making moderation even harder.
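A common first defense is to normalize text before classification. The sketch below assumes a small, hypothetical substitution table and coded-term list; production lexicons are far larger and need constant updates as users invent new evasions. Euphemisms like "unalive" are not misspellings at all, so they have to be caught by a term list (or a model trained on them) rather than by character mapping.

```python
import re

# Hypothetical character substitutions used to dodge keyword filters.
LEET_MAP = str.maketrans({
    "0": "o", "1": "i", "3": "e", "4": "a",
    "5": "s", "7": "t", "@": "a", "$": "s",
})

# Hypothetical coded-term lexicon mapped to a policy category.
CODED_TERMS = {"unalive": "self_harm_or_violence"}


def normalize(text: str) -> str:
    """Lowercase, undo common character swaps, collapse long letter runs."""
    text = text.lower().translate(LEET_MAP)
    # Collapse runs of 3+ identical characters to one ("haaaate" -> "hate").
    return re.sub(r"(.)\1{2,}", r"\1", text)


def coded_hits(text: str) -> list[str]:
    """Return the policy categories of any coded terms in the text."""
    words = re.findall(r"[a-z]+", normalize(text))
    return [CODED_TERMS[w] for w in words if w in CODED_TERMS]
```

Normalization cuts both ways: mapping "1" to "i" also rewrites legitimate numbers, so normalized text is usually safer to use as an extra signal alongside the original than as a replacement for it.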

Training Data Requirements

AI systems are only as effective as the data they are trained on, and this presents significant challenges. Training datasets often embed societal biases, which can lead to over-enforcement against marginalized groups.

"Whenever you see 'AI content moderation,' try mentally substituting 'machines efficiently applying all our biases, one by one, then all at once.'"

– Alex Feerst, leader of Murmuration Labs

Creating and maintaining high-quality training datasets is both time-intensive and costly. Moreover, as online trends shift, models experience "drift", causing their accuracy to decline over time. While foundational models can support over 80 languages, their accuracy drops sharply for non-English languages due to insufficient labeled data. For example, in Meta's "Hateful Memes Challenge", which involved 10,000 items, state-of-the-art AI models achieved just 64.73% accuracy, compared to 84.75% for human moderators. This gap underscores how much progress is still needed. Overcoming these issues is critical to building trust and ensuring effective moderation.

Best Practices for Implementing AI Moderation

To implement AI moderation effectively, combine automated tools with human oversight.

Define Clear Moderation Policies

Start by establishing clear community guidelines that align with local regulations like the EU Digital Services Act, the UK Online Safety Act, and the U.S. REPORT Act. These policies should break harmful content into specific categories, such as hate speech, Child Sexual Abuse Material (CSAM), self-harm, misinformation, and harassment. For instance, in 2023, registered electronic service providers reported over 104 million files related to CSAM, underscoring the need to prioritize certain categories due to legal and ethical obligations.

Create tiered enforcement actions that correspond to the severity of violations. For example, a minor first-time infraction might result in a warning, while repeated or serious offenses could lead to temporary suspensions or permanent bans. To ensure fairness, build an appeals process that allows users to challenge erroneous decisions. Tools like GPT-4 can be used to draft and refine policy language, significantly speeding up the process from months to just hours.
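Tiered enforcement is essentially a lookup from severity and history to an action. Here is a minimal sketch, assuming a hypothetical four-step ladder and treating "critical" violations as an immediate ban; the `uphold_appeal` helper shows one simple way a successful appeal could undo a strike.

```python
from dataclasses import dataclass, field

# Hypothetical enforcement ladder, from mildest to harshest.
LADDER = ["warning", "suspend_24h", "suspend_7d", "permanent_ban"]


@dataclass
class Account:
    strikes: int = 0
    history: list[str] = field(default_factory=list)


def enforce(account: Account, severity: str) -> str:
    """Pick an action from violation severity ('low' to 'critical') and prior strikes."""
    if severity == "critical":
        action = "permanent_ban"
    else:
        # High-severity violations skip one rung of the ladder.
        step = account.strikes + (1 if severity == "high" else 0)
        action = LADDER[min(step, len(LADDER) - 1)]
    account.strikes += 1
    account.history.append(action)  # audit trail for the appeals process
    return action


def uphold_appeal(account: Account) -> None:
    """Undo the most recent strike when an appeal succeeds."""
    if account.strikes > 0:
        account.strikes -= 1
        account.history.append("appeal_upheld")
```

A first low-severity violation draws a warning, and each repeat moves one rung up the ladder until it reaches a permanent ban.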

These well-defined policies provide a foundation for integrating AI tools with human moderation.

Combine AI with Human Moderation

While AI is excellent at identifying clear-cut violations, such as explicit content or flagged keywords, human moderators are indispensable for interpreting context-heavy or complex cases. A hybrid system ensures that flagged content is filtered through prioritized review queues, allowing human moderators to focus on the most critical or ambiguous cases.
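A minimal sketch of the hybrid routing described above: confident scores are handled automatically, the uncertain middle band goes to people, and the human queue serves the most severe items first. The band edges (0.4 and 0.9) and the integer severity scale (higher means more urgent) are assumptions for illustration.

```python
import heapq
import itertools


def route(score: float, low: float = 0.4, high: float = 0.9) -> str:
    """Auto-handle confident cases; send the uncertain middle band to humans."""
    if score >= high:
        return "auto_remove"
    if score < low:
        return "auto_allow"
    return "human_review"


class ReviewQueue:
    """Human review queue: most severe first, oldest first within a tier."""

    def __init__(self) -> None:
        self._heap: list[tuple[int, int, str]] = []
        self._counter = itertools.count()  # arrival order breaks ties

    def push(self, item_id: str, severity: int) -> None:
        # heapq is a min-heap, so negate severity to pop the highest first.
        heapq.heappush(self._heap, (-severity, next(self._counter), item_id))

    def pop(self) -> str:
        _, _, item_id = heapq.heappop(self._heap)
        return item_id

    def __len__(self) -> int:
        return len(self._heap)
```

The arrival counter keeps equally severe items in first-in, first-out order, so no case sits at the bottom of the queue indefinitely while newer items of the same severity jump ahead.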

AI also plays a vital role in protecting human moderators by automatically blocking the most graphic or disturbing material, reducing the emotional toll on moderation teams. Furthermore, AI tools can supply moderators with valuable context - such as conversation threads, user metadata, and the rationale behind flagged content - enabling quicker and more informed decision-making.

Monitor and Update AI Models

AI models can lose accuracy over time as online behaviors and trends evolve, a phenomenon known as "drift." To address this, implement active learning pipelines that evaluate production data, identify key samples using uncertainty sampling, and adjust datasets to improve diversity.

"Active learning can effectively expand the training dataset to capture a significantly (up to 22x) larger amount of undesired samples when dealing with rare events." – OpenAI
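Uncertainty sampling picks the production items the model is least sure about, since labeling those tends to teach it the most. Here is a minimal sketch for a binary classifier, using prediction entropy as the uncertainty measure; the item IDs and scores are hypothetical, and a real pipeline would also deduplicate and sample for diversity, as noted above.

```python
import math


def binary_entropy(p: float) -> float:
    """Entropy in bits of a yes/no prediction; peaks at p = 0.5."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1 - p) * math.log2(1 - p))


def select_for_labeling(predictions: dict[str, float], budget: int) -> list[str]:
    """Pick the `budget` items whose predicted scores are most uncertain."""
    ranked = sorted(predictions,
                    key=lambda item: binary_entropy(predictions[item]),
                    reverse=True)
    return ranked[:budget]
```

With scores `{"a": 0.98, "b": 0.51, "c": 0.05, "d": 0.35, "e": 0.70}` and a budget of 2, the function returns `["b", "d"]`, the two scores closest to 0.5.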

Regularly fine-tune flagging thresholds to strike a balance between false positives and false negatives. Use input reduction techniques to identify overfitted tokens; for example, one study reduced the average sample length from 722.3 characters to just 15.9 without compromising the model's performance. For rare or emerging content categories, generate synthetic data using large language models (LLMs). Adding 69,000 curated synthetic examples improved hateful content detection, raising the average AUPRC from 0.417 to 0.551. To maintain system performance, leverage tools like Amazon CloudWatch to monitor infrastructure, identify bottlenecks, and track error logs in real time.
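AUPRC (area under the precision-recall curve) summarizes detection quality across all thresholds, which makes it well suited to rare categories where plain accuracy is misleading. Below is a minimal sketch of average precision, a standard way to estimate it; libraries such as scikit-learn provide an equivalent `average_precision_score` with more careful handling of tied scores.

```python
def average_precision(scores: list[float], labels: list[bool]) -> float:
    """Average precision, a common estimate of the area under the PR curve.

    Items are ranked by score (highest first) and precision is recorded at
    each true positive. Ties keep input order, unlike library versions.
    """
    total_pos = sum(labels)
    if total_pos == 0:
        raise ValueError("need at least one positive label")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits = 0
    precision_sum = 0.0
    for rank, (_, is_pos) in enumerate(ranked, start=1):
        if is_pos:
            hits += 1
            precision_sum += hits / rank  # precision at this cutoff
    return precision_sum / total_pos
```

For scores `[0.9, 0.8, 0.7, 0.6]` with labels `[True, False, True, False]`, the positives sit at ranks 1 and 3, so the result is (1/1 + 2/3) / 2 ≈ 0.833.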

Conclusion

Managing content effectively in today's digital world requires a strategic mix of AI's efficiency and human discernment. With the sheer volume of global data and instant uploads far surpassing what human teams alone can handle, AI moderation has become a necessity. Consider the numbers: Roblox processes an astounding 6.1 billion chat messages daily, and between February and December 2024, their AI systems reviewed nearly 1 trillion pieces of content, responding to illegal material within an impressive median time of just 10 minutes.

This combination of speed and human insight forms the backbone of successful content moderation strategies. AI excels at quickly flagging and handling straightforward violations, while human moderators tackle the more nuanced cases that require context and judgment. This partnership not only safeguards users but also helps protect moderation teams from the emotional strain of confronting disturbing content.

"Safety is foundational to everything we do at Roblox. From the beginning, we've proactively moderated the content because we knew moderation was critical for a platform built on user-generated content." – Naren Koneru, Vice President of Engineering, Safety, Roblox

For those managing a UGC platform, it's wise to prioritize high-risk content categories like CSAM and hate speech first, before addressing broader issues like toxic behavior. Establish clear guidelines, follow best practices for AI moderation, and integrate human expertise where necessary. Regularly updating your systems ensures your platform stays ahead of emerging threats. Platforms that master this balance will not only create safer spaces but also foster trust and long-term user loyalty.

The future of user-generated content lies in moderation that balances efficiency with fairness, and AI is the key to achieving this at scale. By adopting this hybrid approach, you can build communities that are both safe and engaging for everyone.

FAQs

How do AI moderation tools ensure both speed and accuracy when filtering content?

AI moderation tools excel at scanning and filtering content at lightning speed. These systems use lightweight models to quickly review text, images, or video frames, flagging potentially harmful material in just milliseconds. This rapid response is essential for platforms that manage millions of posts every single day. To ensure accuracy, flagged content often undergoes a second layer of review, either by more advanced AI models or by human moderators, which helps minimize mistakes like false positives or negatives.
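A minimal sketch of that two-layer design, assuming two hypothetical scoring functions: a cheap `fast` model that runs on everything and a slower, more accurate `slow` model that only sees the uncertain middle band. The band edges are illustrative; in a fuller pipeline, the escalation step could go to a human reviewer instead.

```python
from typing import Callable

Scorer = Callable[[str], float]  # returns a violation score between 0 and 1


def cascade(text: str, fast: Scorer, slow: Scorer,
            clear_below: float = 0.2, block_above: float = 0.95,
            threshold: float = 0.5) -> tuple[str, str]:
    """Two-stage check: cheap model first, expensive model only when unsure.

    Returns (decision, stage_that_decided).
    """
    s = fast(text)
    if s < clear_below:
        return "allow", "fast"
    if s > block_above:
        return "block", "fast"
    # Uncertain band: escalate to the slower, more accurate model.
    decision = "block" if slow(text) >= threshold else "allow"
    return decision, "slow"
```

Because most traffic is clearly safe or clearly violating, the expensive model runs on only a small fraction of items, which is how a system can keep millisecond latency overall while still spending more compute on hard cases.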

Platforms can tweak these tools to better suit their needs. For example, they can adjust flagging thresholds depending on how much risk they're willing to tolerate, or retrain models with updated data to improve performance. Many modern AI moderation systems also try to account for context, such as sarcasm or regional differences, without sacrificing speed, although context remains one of their weakest areas. Striking this balance between fast, real-time protection and careful, accurate moderation helps platforms stay secure while avoiding unnecessary censorship of legitimate content.

What challenges does AI face when moderating content in multiple languages?

AI moderation faces a tough road when it comes to handling multiple languages. One of the biggest challenges lies in grasping slang, idioms, and the subtle nuances that are unique to each language. These elements often don’t translate well, leaving room for misunderstandings. The problem becomes even more pronounced with low-resource languages - those that don’t have enough data available to properly train AI systems - making accurate moderation even harder.

On top of that, the differences in grammar, syntax, and vocabulary from one language to another can trip up AI, leading to mistakes or misinterpretations. While advancements are being made, these complexities underscore the constant need for improvement to ensure moderation systems work fairly and effectively for all linguistic communities.

How can platforms balance AI moderation with human oversight to ensure fairness?

To ensure moderation practices are fair and effective, platforms should blend AI tools with human oversight. AI excels at scanning large volumes of content quickly, identifying and flagging harmful or inappropriate material, such as hate speech or illegal posts. However, AI isn't flawless - it can misinterpret context, miss subtleties, or even amplify biases. This is where human moderators step in, offering critical thinking, cultural awareness, and the ability to catch nuances like sarcasm or new slang.

For moderation to work smoothly, platforms should follow a balanced approach:

Leverage AI for initial content filtering and flagging.

Forward complex or ambiguous cases to human moderators for review.

Conduct regular audits of AI systems to identify and correct biases.

Establish transparent appeal processes for users to challenge moderation decisions.

By merging AI's speed with human expertise, platforms can foster safer online spaces, build trust, and ensure moderation decisions are thoughtful and precise. Tools like TwinTone’s AI-generated creator twins can also benefit from these safeguards, ensuring content quality and compliance while reaching audiences effortlessly.