AI Emotion Recognition in Live Streams

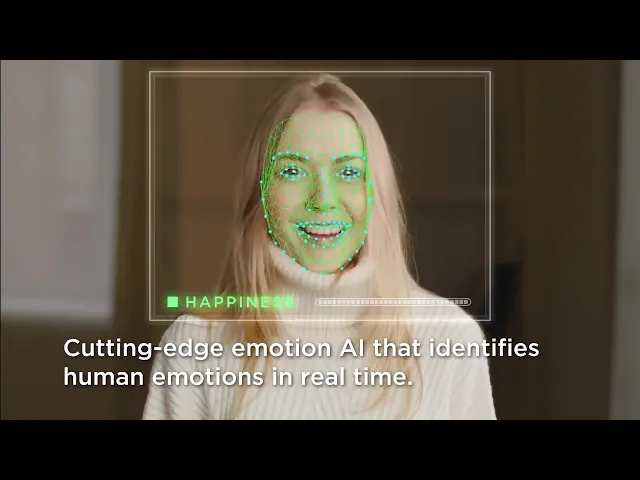

AI emotion recognition is transforming live streaming. By analyzing facial expressions, voice tones, and chat sentiment in real time, this technology helps streamers and brands understand audience emotions like happiness, frustration, or boredom. The result? Smarter, instant decisions during streams that keep viewers engaged and drive better outcomes.

Key Insights:

What It Does: Tracks emotions using video, audio, and text, assigning labels (e.g., "happy") with confidence scores.

How It Works: Combines facial expression analysis, voice emotion detection, and chat sentiment analysis for more reliable insights than any single signal provides.

Why It Matters: Enables real-time content adjustments, boosts engagement, and improves live shopping experiences.

Applications: Optimizing live shopping, AI-powered creator avatars, and emotion-driven storytelling.

Ethical Concerns: Addressing bias, ensuring privacy, and maintaining transparency are critical for responsible use.

By integrating emotion AI, brands can improve live-streaming outcomes while respecting viewer privacy and ethical considerations.

Core Technologies Behind Emotion Recognition Systems

Understanding how emotion recognition systems work starts with the technologies underneath them. These systems combine facial expression analysis, voice-based emotion detection, and text sentiment analysis to build a well-rounded picture of audience emotions during live streams.

Facial Expression Analysis

Facial expression analysis relies on computer vision to locate and interpret facial features. The system first detects and crops each face, typically with a dedicated face-detection model (FaceNet itself is a face-embedding model; libraries built around it usually pair it with a detector such as MTCNN), and then focuses on key regions - eyes, mouth, and eyebrows - to infer emotional state. A ResNet classifier then assigns the face to one of seven categories: happiness, anger, sadness, surprise, fear, disgust, or neutral. Training these models requires large labeled datasets; for instance, one system trained on more than 35,000 labeled images reached 71% accuracy on held-out test data. ResNet18, with roughly 11 million parameters, is small enough to run in real time, which makes it a practical choice for live applications.
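To make the "label plus confidence score" output concrete, the sketch below shows only the final step of such a classifier: converting the seven raw scores (logits) that a network like ResNet18 would emit into probabilities with a softmax and picking the top class. The class order and the logit values are assumptions for illustration; a real trained model defines its own label order, and the network itself is not shown.

```python
import math

# Assumed class order; a trained model defines its own.
EMOTIONS = ["happiness", "anger", "sadness", "surprise", "fear", "disgust", "neutral"]


def softmax(logits: list[float]) -> list[float]:
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def label_with_confidence(logits: list[float]) -> tuple[str, float]:
    """Return the most likely emotion and its probability."""
    if len(logits) != len(EMOTIONS):
        raise ValueError("expected one logit per emotion class")
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return EMOTIONS[best], probs[best]


# Hypothetical logits for one detected face.
label, confidence = label_with_confidence([2.1, -0.3, -1.0, 0.8, -1.2, -2.0, 0.5])
print(label, round(confidence, 2))
```

With these made-up logits the top class is happiness at roughly 0.6, a reminder that a "happy" label can still carry substantial uncertainty.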

These systems tend to recognize positive emotions such as happiness and surprise more reliably than negative ones, partly because of inconsistent labels and class imbalance in the training data. Even so, they remain useful for capturing live feedback. Real-time emotion detection APIs, such as Arya.ai's Emotion Detection API, accept inputs like live video frames and return structured JSON responses containing emotion labels, confidence scores, and bounding-box coordinates, often within about 100 milliseconds. (Microsoft's Azure Face API once offered a similar emotion attribute but retired it in 2023 under its responsible-AI policy.) Latency in that range is low enough to keep the streaming experience interactive. Audio cues add a second layer of evidence alongside facial data.
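Structured JSON responses like these can be consumed with the standard library alone. The field names below (`faces`, `emotion`, `confidence`, `box`, `timestamp_ms`) are a hypothetical schema for illustration, not the actual response format of Arya.ai or any other vendor; the provider's API reference defines the real one.

```python
import json
from dataclasses import dataclass


@dataclass
class FaceEmotion:
    label: str
    confidence: float
    box: tuple[int, int, int, int]  # (x, y, width, height) in pixels
    timestamp_ms: int


def parse_response(raw: str, min_confidence: float = 0.5) -> list[FaceEmotion]:
    """Parse a hypothetical emotion-API response and drop low-confidence faces."""
    payload = json.loads(raw)
    results = []
    for face in payload.get("faces", []):
        if face["confidence"] < min_confidence:
            continue  # too uncertain to act on during a live stream
        x, y, w, h = (face["box"][k] for k in ("x", "y", "w", "h"))
        results.append(FaceEmotion(
            label=face["emotion"],
            confidence=float(face["confidence"]),
            box=(x, y, w, h),
            timestamp_ms=int(payload["timestamp_ms"]),
        ))
    return results


# Hypothetical response for one video frame with two detected faces.
sample = """{"timestamp_ms": 1200, "faces": [
  {"emotion": "happiness", "confidence": 0.91, "box": {"x": 40, "y": 32, "w": 120, "h": 120}},
  {"emotion": "neutral", "confidence": 0.38, "box": {"x": 300, "y": 28, "w": 110, "h": 115}}
]}"""
faces = parse_response(sample)
```

Dropping faces below a confidence floor (0.5 here, an arbitrary choice) keeps weak detections from triggering on-stream actions.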

Voice and Audio Emotion Recognition

Voice-based emotion recognition focuses on analyzing prosodic features like pitch, energy, speech rate, and voice quality. By processing audio streams through deep learning models that analyze spectrograms, these systems can identify emotional patterns in real time. This layer of analysis enriches the understanding of both the streamer’s tone and the audience's reactions, offering a more nuanced view of emotional dynamics during live broadcasts.
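Production systems learn features from spectrograms with deep models, but two of the prosodic cues named above, energy and pitch, can be illustrated with hand-written code. This is a simplified stand-in (RMS energy plus an autocorrelation pitch estimate on a synthetic tone), not how any particular product works; the 80-400 Hz search range is an assumed range for speaking voices.

```python
import math


def rms_energy(frame: list[float]) -> float:
    """Root-mean-square energy of one audio frame, a simple loudness cue."""
    return math.sqrt(sum(s * s for s in frame) / len(frame))


def estimate_pitch(frame: list[float], sample_rate: int,
                   fmin: float = 80.0, fmax: float = 400.0) -> float:
    """Rough fundamental-frequency estimate (Hz) via autocorrelation.

    Tries every lag that corresponds to a pitch between fmin and fmax and
    returns the frequency of the lag with the highest autocorrelation.
    """
    min_lag = int(sample_rate / fmax)
    max_lag = min(int(sample_rate / fmin), len(frame) - 1)
    best_lag, best_corr = min_lag, float("-inf")
    for lag in range(min_lag, max_lag + 1):
        corr = sum(frame[i] * frame[i + lag] for i in range(len(frame) - lag))
        if corr > best_corr:
            best_lag, best_corr = lag, corr
    return sample_rate / best_lag


# Synthetic 100 ms test tone: a 200 Hz sine at half amplitude, sampled at 8 kHz.
SAMPLE_RATE = 8000
tone = [0.5 * math.sin(2 * math.pi * 200 * n / SAMPLE_RATE) for n in range(800)]
energy = rms_energy(tone)
pitch = estimate_pitch(tone, SAMPLE_RATE)
```

Real speech needs more care (voiced/unvoiced detection, octave errors), and a rise in pitch and energy is only weak evidence of excitement on its own.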

Text and Chat Sentiment Analysis

Natural Language Processing (NLP) plays a key role in analyzing chat messages during live streams. Social-listening tools such as Hootsuite and Brand24 use AI-driven sentiment analysis to gauge whether audiences are engaged, frustrated, excited, or disappointed, and the same techniques apply to live chat. Emojis often carry clear emotional signals: a thumbs-up usually indicates approval, while a crying emoji may suggest sadness or distress. Chat-analysis tools can also support moderation by filtering out spam and harassment, making the chat safer for everyone, and the insights they surface can guide content strategy so streams stay engaging and responsive to audience needs.
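Commercial tools use trained language models, but a lexicon-based scorer shows the basic idea, including how emojis carry signal. The lexicon and its weights below are invented for illustration.

```python
import re

# Invented, deliberately tiny lexicon (word or emoji -> sentiment weight).
LEXICON = {
    "love": 2, "great": 2, "awesome": 2, "want": 1,
    "confused": -1, "expensive": -1, "broken": -2, "scam": -3,
    "👍": 1, "🔥": 2, "😢": -2, "😡": -3,
}
# Words, or any single non-word, non-space character (covers most single emoji).
TOKEN = re.compile(r"\w+|[^\w\s]")


def score_message(text: str) -> int:
    """Sum the lexicon weights of the words and emoji in one chat message."""
    return sum(LEXICON.get(token, 0) for token in TOKEN.findall(text.lower()))


def classify(text: str) -> str:
    """Map a message's summed score to a coarse sentiment label."""
    score = score_message(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"
```

For example, `classify("love this 👍")` returns `"positive"`. Lexicons miss sarcasm and context ("this price is insane" can be praise or a complaint), which is one reason production systems rely on trained models.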

Multimodal Fusion for Better Accuracy

No single method can fully capture the complexity of human emotions. That’s where multimodal fusion comes into play, combining data from video, audio, and text for a more comprehensive analysis. By cross-referencing emotional signals from different sources, these systems can better understand the context. For example, if a viewer’s facial expression shows interest but their chat message conveys frustration, the combined data provides a clearer picture of their true sentiment.
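One common way to combine modalities is late fusion: each model outputs its own emotion probabilities, and a weighted average merges them. The sketch below assumes that approach; the modality weights are illustrative assumptions, not values from any specific product.

```python
def fuse(modalities: dict[str, dict[str, float]],
         weights: dict[str, float]) -> dict[str, float]:
    """Late fusion: weighted average of per-modality emotion probabilities.

    Modalities that are absent (for example, a viewer with no camera) are
    skipped and the remaining weights are renormalized to sum to 1.
    """
    present = {name: w for name, w in weights.items() if name in modalities}
    total = sum(present.values())
    if total == 0:
        raise ValueError("no weighted modality available")
    fused: dict[str, float] = {}
    for name, w in present.items():
        for emotion, p in modalities[name].items():
            fused[emotion] = fused.get(emotion, 0.0) + (w / total) * p
    return fused


# Assumed weights; in practice they would be tuned on validation data.
WEIGHTS = {"face": 0.4, "voice": 0.2, "chat": 0.4}

# One viewer: the face looks interested, the chat message reads as frustrated.
viewer = {
    "face": {"interest": 0.7, "frustration": 0.1, "neutral": 0.2},
    "chat": {"interest": 0.1, "frustration": 0.8, "neutral": 0.1},
}
fused = fuse(viewer, WEIGHTS)
```

Because voice is missing, face and chat each get half the weight, giving frustration 0.45, interest 0.40, and neutral 0.15. The near tie is itself informative: an interested but frustrated viewer may be stuck on something like unclear sizing. Early fusion (concatenating features before a single model) and learned fusion layers are common alternatives.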

Advanced systems even link face and voice cues to generate instant, aggregated emotion summaries. This approach is especially valuable for maintaining engaging live streams. Take TwinTone’s AI Twins, for instance - these AI hosts use multimodal fusion to adjust their tone, presentation style, and content recommendations in real time, making interactions feel more natural and adaptive. This level of responsiveness keeps audiences engaged and ensures that live streams remain dynamic and impactful.

Applications of Emotion Recognition in Live Streaming

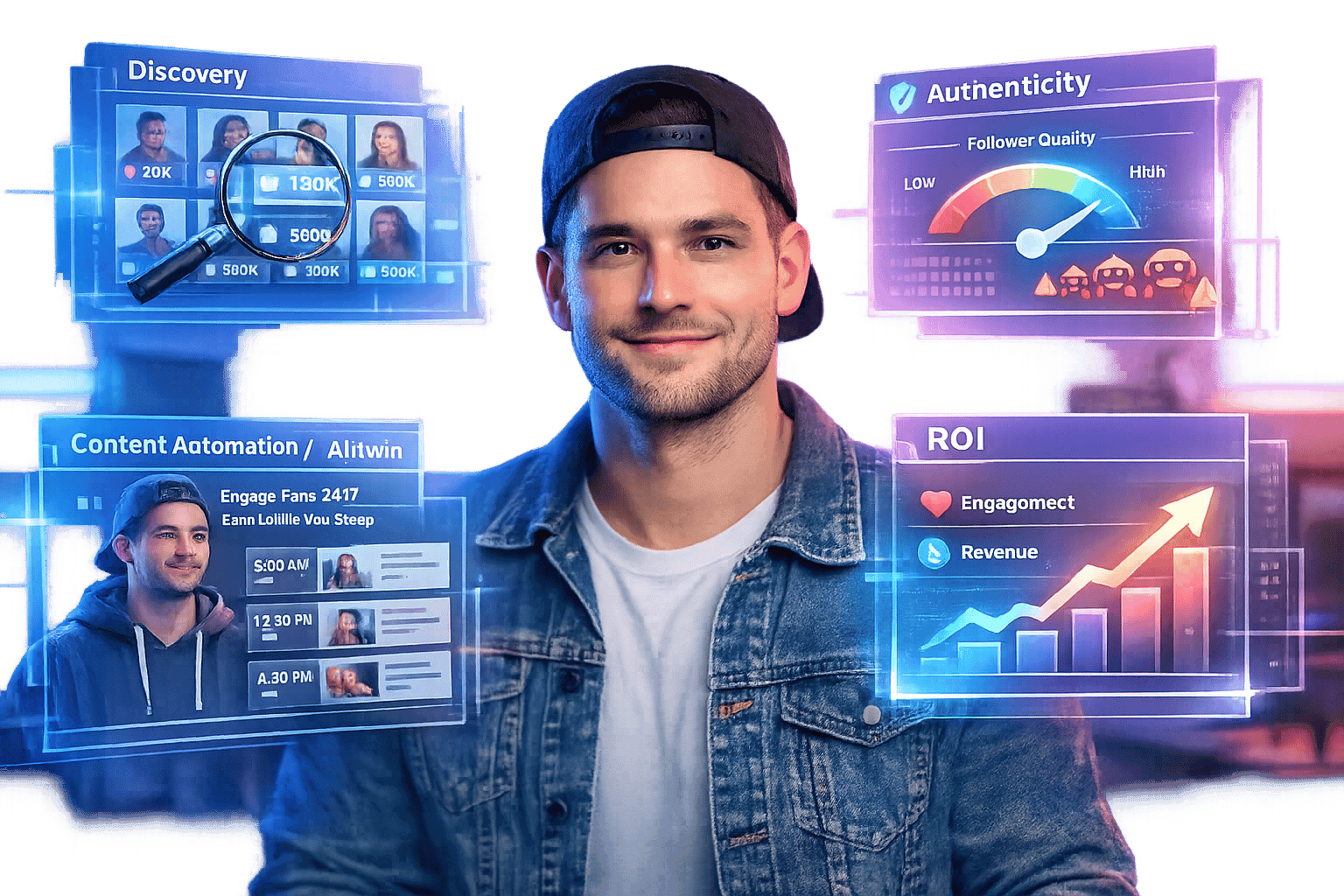

Emotion recognition technology is making waves in live streaming, shaping how brands approach live shopping, creator interactions, and storytelling in U.S.-based streams. By analyzing real-time emotional cues, this technology is rewriting the playbook for engaging audiences.

Improving Live Shopping Experiences

Timing is everything in live shopping. Emotion recognition helps brands identify key moments when viewers are excited, confused, or losing interest. For example, if excitement spikes, the platform can instantly highlight product details, showcase special offers, or even drop a time-sensitive discount. On the flip side, if frustration or confusion arises - say, due to unclear shipping details or sizing options - the host can pause, provide clarity, or display FAQs to keep the audience engaged.
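These moment-by-moment decisions amount to a rule table that maps the share of viewers showing an emotion to an on-stream action. A minimal sketch; the emotion names, thresholds, and action identifiers are hypothetical strings that some overlay or host tool would act on.

```python
# Hypothetical playbook: (emotion, minimum share of viewers, action).
# Clarifying actions are listed first so they come before sales prompts.
RULES = [
    ("confusion", 0.25, "show_faq_overlay"),
    ("frustration", 0.20, "prompt_host_to_clarify"),
    ("excitement", 0.40, "highlight_offer"),
]


def choose_actions(shares: dict[str, float]) -> list[str]:
    """Return every action whose emotion share meets its threshold, in rule order."""
    return [
        action
        for emotion, threshold, action in RULES
        if shares.get(emotion, 0.0) >= threshold
    ]


# Share of viewers currently showing each emotion (made-up numbers).
print(choose_actions({"excitement": 0.5, "confusion": 0.3}))
# ['show_faq_overlay', 'highlight_offer']
```

A stricter variant might hold back `highlight_offer` while confusion stays above its threshold, so viewers get clarity before a sales prompt.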

Brands often track metrics like click-through rates, add-to-cart actions, conversions, and average order value. By linking these metrics with real-time emotion data - such as the percentage of viewers marked as "interested" versus "bored" - teams can fine-tune product presentations, adjust pricing strategies, and optimize the timing of offers to boost revenue. For instance, a cosmetics brand might notice heightened positive emotions during a demo of a specific shade. They could then spotlight complementary products, introduce a flash discount, and address common questions to overcome hesitation.

In a typical setup, video and audio are captured from the stream as it travels over protocols such as WebRTC or RTMP, and chat messages are collected alongside them. Emotion APIs analyze this input and return structured emotion data, which feeds dashboards and alerts. These insights let brands act quickly - for example, pinning a product card or offering a coupon when interest peaks during a sneaker showcase.
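Acting "when interest peaks" needs some smoothing, or a single noisy sample could pin a product card. One possible approach, sketched here under assumed parameters: average the share of viewers labeled "interested" over a short window and fire at most once per cooldown period. Stream ingestion and the emotion API call are out of scope; the trigger only consumes the per-sample shares they would produce.

```python
from collections import deque
from typing import Optional


class InterestPeakTrigger:
    """Fire once when the rolling average of the "interested" share crosses a
    threshold, then stay quiet for a cooldown so viewers are not spammed.

    Threshold, window, and cooldown defaults are illustrative assumptions.
    """

    def __init__(self, threshold: float = 0.6, window: int = 5, cooldown_s: float = 120.0):
        self.threshold = threshold
        self.samples: deque = deque(maxlen=window)
        self.cooldown_s = cooldown_s
        self.last_fired: Optional[float] = None

    def update(self, t: float, interested_share: float) -> bool:
        """Feed one sample taken at time t (seconds); return True to fire the action."""
        self.samples.append(interested_share)
        if len(self.samples) < self.samples.maxlen:
            return False  # not enough history for a stable average yet
        average = sum(self.samples) / len(self.samples)
        cooling_down = self.last_fired is not None and t - self.last_fired < self.cooldown_s
        if average >= self.threshold and not cooling_down:
            self.last_fired = t
            return True
        return False


trigger = InterestPeakTrigger(threshold=0.6, window=3, cooldown_s=60)
samples = [(0, 0.5), (10, 0.7), (20, 0.7), (30, 0.8), (40, 0.9), (100, 0.9)]
fires = [trigger.update(t, share) for t, share in samples]
```

Here the trigger fires at t = 20 s, stays silent during the cooldown even though interest keeps rising, and fires again at t = 100 s.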

Creator AI Twins and 24/7 Engagement

AI Twins are changing the game for creators by enabling round-the-clock, emotion-driven live streams. Platforms like TwinTone offer AI-driven avatars that mimic a creator’s style, adjusting tone and pacing in real time to create a seamless experience for viewers.

These AI Twins are trained to respond naturally to shifts in audience sentiment. For example, if chat data signals confusion, the AI Twin might slow down and clarify details. If excitement builds, it could celebrate and suggest related products. This approach keeps 24/7 streams engaging without becoming monotonous, allowing creators to maintain their presence without being physically present.

Creators and brands can use emotion recognition to build detailed response playbooks: offering explanations during moments of confusion, showing empathy when frustration is detected, or using celebratory language to amplify positive emotions. When negative sentiment keeps recurring - for example, repeated complaints about shipping - emotion thresholds can trigger alerts for the creator to step in. AI Twins can also adapt their outreach to viewer mood, avoiding repetitive prompts and pacing calls-to-action to prevent fatigue. Privacy concerns should be addressed through clear opt-in options and transparent disclosures.
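The escalation rule described above (alert the creator when negative sentiment keeps recurring) can be expressed as a streak counter over consecutive analysis windows. In this sketch the threshold, the three-window limit, and the `top_topic` argument (assumed to come from some upstream topic-extraction step) are all hypothetical.

```python
from typing import Optional


class NegativeSentimentEscalator:
    """Alert a human when negative chat sentiment stays high for several
    consecutive windows (e.g. repeated shipping complaints).

    Threshold and window count are assumptions to tune per channel.
    """

    def __init__(self, threshold: float = 0.3, consecutive: int = 3):
        self.threshold = threshold
        self.consecutive = consecutive
        self.streak = 0

    def update(self, negative_share: float, top_topic: str) -> Optional[str]:
        """Return an alert once, when the streak reaches the limit; otherwise None."""
        self.streak = self.streak + 1 if negative_share >= self.threshold else 0
        if self.streak == self.consecutive:
            return f"Creator attention needed: sustained negative chat about '{top_topic}'"
        return None


escalator = NegativeSentimentEscalator(threshold=0.3, consecutive=3)
alerts = [escalator.update(share, "shipping") for share in [0.4, 0.5, 0.35, 0.6, 0.1, 0.4]]
```

The alert fires on the third negative window only; the streak resets when sentiment recovers, so a later dip has to persist again before the creator is paged.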

Dynamic Content and Storytelling

Emotion recognition also breathes life into storytelling, turning static scripts into dynamic, audience-driven narratives. Creators can design flexible story arcs with branching points, allowing them to dive into technical details, shift toward entertainment, or speed up calls-to-action based on real-time audience reactions. For instance, if viewers appear bored, the host or AI Twin can pivot to a product demo, a testimonial, or even a quick poll. Alternatively, if curiosity is high - evident through detailed questions or focused discussions - the stream could expand to include behind-the-scenes content or expert interviews.
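A branching story arc can be modeled as a small graph in which each segment names its next segment for each dominant audience emotion, with a default branch when no rule matches. The segment names and transitions below are hypothetical.

```python
# Hypothetical story graph: segment -> {dominant audience emotion: next segment}.
STORY = {
    "intro": {"curious": "deep_dive", "bored": "product_demo", "default": "product_demo"},
    "deep_dive": {"curious": "behind_the_scenes", "bored": "quick_poll", "default": "product_demo"},
    "behind_the_scenes": {"default": "product_demo"},
    "quick_poll": {"default": "product_demo"},
    "product_demo": {"confused": "faq", "excited": "offer", "default": "testimonial"},
    "faq": {"default": "offer"},
    "testimonial": {"default": "offer"},
    "offer": {"default": "outro"},
    "outro": {"default": "outro"},  # terminal segment loops on itself
}


def next_segment(current: str, emotion_shares: dict[str, float]) -> str:
    """Pick the branch for the audience's dominant emotion, else the default branch."""
    branches = STORY[current]
    if not emotion_shares:
        return branches["default"]
    dominant = max(emotion_shares, key=emotion_shares.get)
    return branches.get(dominant, branches["default"])
```

For example, `next_segment("intro", {"curious": 0.5, "bored": 0.2})` returns `"deep_dive"`, while a bored audience at the same point goes straight to the product demo. Keeping the graph as data lets producers edit the arc without touching code.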

Dashboards that display real-time emotion data help creators decide when to introduce interactive elements such as giveaways, polls, or user-generated content. This flexibility keeps the narrative fresh and engaging, which can translate into longer watch times and more subscriptions, particularly among U.S. audiences who value adaptability.

After each stream, teams analyze emotion metrics alongside business outcomes. Spikes in joy or interest can be matched to product clicks and purchases, while moments of confusion or irritation might align with drop-offs or refund requests. Heatmaps overlaid on the stream timeline can highlight areas needing improvement, such as clearer explanations or enhanced visuals. By segmenting data by device, time zones, and audience type (new vs. returning viewers), brands can refine their scheduling, formats, and offers.
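The aggregation behind such a timeline heatmap can be sketched as bucketing per-viewer emotion scores by minute and audience segment, then flagging buckets that cross a threshold. The sample tuple layout, the segment names, and the 0.3 threshold are assumptions; flagged buckets would then be compared with drop-off or refund data.

```python
from collections import defaultdict


def confusion_hotspots(samples, threshold: float = 0.3) -> dict:
    """Average per-viewer confusion scores into (audience segment, minute) buckets.

    samples: iterable of (minute, segment, confusion_score) tuples, where
    segment is e.g. "new" or "returning". Returns only buckets whose mean
    is at or above the threshold, sorted by segment then minute.
    """
    sums: dict = defaultdict(float)
    counts: dict = defaultdict(int)
    for minute, segment, score in samples:
        key = (segment, minute)
        sums[key] += score
        counts[key] += 1
    means = {key: sums[key] / counts[key] for key in sums}
    return {key: mean for key, mean in sorted(means.items()) if mean >= threshold}


# Made-up samples from one stream.
hotspots = confusion_hotspots([
    (12, "new", 0.6), (12, "new", 0.4), (12, "returning", 0.1), (13, "new", 0.2),
])
```

Here only new viewers at minute 12 are flagged, which would point at whatever was on screen then as a candidate for a clearer explanation.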

Integrating AI chatbots with emotion recognition adds personalized support and recommendations and takes routine questions off the host's plate. Industry surveys report that 71% of streamers expect virtual co-hosts to become standard in live streaming within the next five years, a sign that emotion-aware AI assistants are moving from experimental tools toward core components of live video strategy.

Design and Ethical Considerations

When incorporating emotion recognition into live streams, it's not just about the tech - it’s about doing it responsibly. This technology raises important ethical questions, from biases in its algorithms to concerns over privacy and potential misuse. The challenge is to create systems that respect viewers while still offering meaningful insights.

Bias and Model Reliability

Emotion recognition models often fall short when it comes to accuracy across diverse demographic groups. Many models are trained on limited datasets - often featuring Western faces in controlled environments - which can lead to misinterpretations when analyzing expressions from underrepresented groups. This includes Black and Brown faces, older adults, individuals with unique facial expressions or speech patterns, and those from different cultural backgrounds. Such biases can result in skewed analytics and unintended content adjustments.

Take, for example, a ResNet18 classifier that achieved 71% accuracy across seven emotion categories. It identified happiness well but struggled with negative or mixed emotions, a pattern consistent with class imbalance in its training data. In live streaming, that imbalance could distort how brands read audience sentiment, for instance by under-counting frustration.

To tackle these issues, teams need to ensure their datasets represent a wide range of racial groups, genders (including non-binary identities), age groups, and individuals with disabilities. Evaluating performance by subgroup, rather than relying on overall averages, is essential. If accuracy for one group lags significantly behind others, it’s a clear sign that more work is needed.
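Evaluating by subgroup only requires tagging each test example with its group. The sketch below, with invented records and an assumed 5-point tolerance, reports per-group accuracy and flags any group that trails the best group by more than that tolerance.

```python
from collections import defaultdict


def accuracy_by_group(records, max_gap: float = 0.05):
    """Per-subgroup accuracy plus the groups that trail the best group.

    records: iterable of (group, true_label, predicted_label) from a labeled
    evaluation set. max_gap is the largest acceptable accuracy gap.
    """
    correct: dict = defaultdict(int)
    total: dict = defaultdict(int)
    for group, truth, predicted in records:
        total[group] += 1
        correct[group] += int(truth == predicted)
    accuracy = {group: correct[group] / total[group] for group in total}
    best = max(accuracy.values())
    lagging = sorted(group for group, acc in accuracy.items() if best - acc > max_gap)
    return accuracy, lagging


records = [
    ("group_a", "happy", "happy"), ("group_a", "sad", "sad"),
    ("group_a", "angry", "angry"), ("group_a", "neutral", "neutral"),
    ("group_b", "happy", "happy"), ("group_b", "sad", "neutral"),
    ("group_b", "angry", "neutral"), ("group_b", "neutral", "neutral"),
]
accuracy, lagging = accuracy_by_group(records)
```

In this toy set, group_b's errors are all negative emotions misread as neutral, a gap that the overall average of 75% would hide.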

Using multimodal approaches - combining facial, vocal, and textual cues - can reduce reliance on any single signal. Additionally, incorporating human oversight for high-stakes decisions, like automated moderation or content targeting, can help mitigate errors. Emotion outputs should always be treated as probabilities, not definitive truths. For groups where predictions are less reliable, systems should display confidence levels and warnings to avoid overreliance.
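Treating outputs as probabilities can be as simple as refusing to commit to a label below a confidence floor and always attaching a human-review flag for high-stakes uses. The 0.6 floor is an assumption; a team might set a higher floor for subgroups where per-group evaluation shows weaker accuracy.

```python
def report_emotion(probs: dict[str, float], min_confidence: float = 0.6,
                   high_stakes: bool = False) -> dict:
    """Turn a probability distribution into a cautious, displayable result.

    Low-confidence predictions are reported as "uncertain" with a warning,
    and high-stakes uses are always routed to a human reviewer.
    """
    best = max(probs, key=probs.get)
    confidence = probs[best]
    result = {
        "label": best,
        "confidence": round(confidence, 2),
        "needs_human_review": high_stakes,
    }
    if confidence < min_confidence:
        result["label"] = "uncertain"
        result["warning"] = f"low confidence ({confidence:.2f}); do not act automatically"
    return result
```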

Addressing bias is just one piece of the puzzle. Protecting viewer data is equally critical.

Privacy and Consent

Emotion data is sensitive - it’s often classified as biometric data - and requires explicit viewer consent. Before a stream begins, viewers should see a clear consent screen outlining what data is collected, how it’s processed (on-device or in the cloud), its purpose, how long it’s stored, and whether it’s shared with third parties.

Best practice? Give viewers a genuine choice. Provide options like "Accept" or "Continue Without Emotion Analysis". If facial analysis is involved, make it clear that the camera is being used to detect emotions. Similarly, for voice or chat-based analysis, explain that tone and text are being processed to gauge sentiment and engagement.
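On the pipeline side, a genuine choice means gating each modality on the viewer's recorded consent, with everything off by default. A minimal sketch; the `Consent` record and the input names are hypothetical, and a real system would also persist and audit these choices.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Consent:
    """A viewer's choices from the consent screen; every modality defaults to off."""
    face: bool = False
    voice: bool = False
    chat: bool = False


def allowed_modalities(consent: Optional[Consent]) -> set:
    """Modalities that may be analyzed; none if the viewer has not chosen yet."""
    if consent is None:
        return set()
    return {name for name in ("face", "voice", "chat") if getattr(consent, name)}


def filter_inputs(inputs: dict, consent: Optional[Consent]) -> dict:
    """Drop every input stream the viewer did not opt into before any model sees it."""
    allowed = allowed_modalities(consent)
    return {name: data for name, data in inputs.items() if name in allowed}


# "Continue Without Emotion Analysis" maps to Consent() with everything off.
frame_inputs = {"face": b"<jpeg bytes>", "voice": b"<pcm bytes>", "chat": "love this!"}
print(filter_inputs(frame_inputs, Consent(chat=True)))  # {'chat': 'love this!'}
```

Filtering before inference, rather than discarding results afterwards, means unconsented camera or microphone data never reaches an emotion model at all.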

When creators use AI Twins - digital avatars that mimic their likeness, like those on platforms such as TwinTone - contracts and dashboards should clarify how viewer emotion data influences the AI Twin’s behavior. Creators should also have separate consent flows for the use of their face, voice, and style, alongside emotion analytics.

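For streams aimed at children, stricter standards apply. In the U.S., the Children's Online Privacy Protection Act (COPPA) requires verifiable parental consent before collecting personal information from children under 13 and places tighter limits on how that data can be used.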

A privacy-first approach should guide every step of emotion recognition. Only collect what’s absolutely necessary - like per-frame emotion scores instead of raw video - and avoid storing identifiable images or audio if aggregate data suffices. Some APIs already follow this principle, processing frames securely and returning only JSON labels, confidence scores, and timestamps without retaining the media.

Where possible, prioritize on-device processing to minimize the transmission of raw data. Use transient buffers that discard data immediately after analysis. Emotion outputs should be pseudonymized, linking them to session IDs rather than personal information. Short-term data retention (seconds to days) should apply to granular data, while longer-term storage should be limited to aggregated, non-identifiable metrics (e.g., the percentage of viewers showing positive emotions per minute).
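Pseudonymization and aggregation can be sketched with Python's standard library: a keyed hash (HMAC-SHA256) replaces the session ID, and per-minute shares are the only thing kept long term. The event layout, and the idea of rotating the key per stream so IDs cannot be linked across streams, are assumptions of this sketch rather than requirements from any specific regulation.

```python
import hashlib
import hmac
from collections import defaultdict


def pseudonymize(session_id: str, key: bytes) -> str:
    """Keyed SHA-256 hash of a session ID; without the key, linking it back is impractical."""
    return hmac.new(key, session_id.encode("utf-8"), hashlib.sha256).hexdigest()


def positive_share_per_minute(events) -> dict:
    """events: iterable of (timestamp_s, pseudonymous_id, label).

    Returns {minute: fraction of distinct viewers with a positive label that minute}.
    Only this aggregate needs long-term storage; raw events can be discarded.
    """
    viewers: dict = defaultdict(set)
    positive: dict = defaultdict(set)
    for timestamp_s, viewer_id, label in events:
        minute = int(timestamp_s // 60)
        viewers[minute].add(viewer_id)
        if label == "positive":
            positive[minute].add(viewer_id)
    return {minute: len(positive[minute]) / len(viewers[minute]) for minute in sorted(viewers)}
```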

Encryption is non-negotiable - both in transit and at rest. Contracts with third-party vendors must ensure prompt data deletion, prohibit secondary uses like training unrelated models, and comply with privacy laws. Dashboards should focus on aggregate insights, such as heatmaps or group-level statistics, rather than zooming in on individual viewers unless absolutely necessary.

Ethical Implementation in Live Streams

Combining bias reduction with strong privacy protections is the cornerstone of ethical live streaming. Transparency plays a huge role here. Brands should adopt a layered approach to transparency, offering a simple, user-friendly notice when viewers join a stream, with links to more detailed explanations for those who want them. For example, a notice might say: "This stream uses AI to estimate audience emotion from video, voice, or chat to personalize content and measure engagement." Participation should always be optional.

In-stream indicators, like an “Emotion-Aware” icon, can signal active analytics - similar to how video calls display recording indicators. For creators using AI Twins on platforms like TwinTone, on-screen labels or host descriptions should clarify that viewers are interacting with an AI, not a live person, and explain how audience reactions may influence the AI Twin’s behavior. TwinTone’s framework includes clear guidelines to ensure emotion analytics inform AI Twin behavior without compromising creator or viewer rights.

Transparency pages can provide further details, such as the types of signals analyzed (face, voice, text), examples of how these signals influence content (e.g., adjusting product demos based on viewer frustration), and instructions for opting out or revoking consent. FAQs, explainer videos, and help-center articles can make this information more accessible.

Ethical implementations must avoid using emotion data for manipulative purposes. For instance, it’s unacceptable to exploit signs of anxiety or sadness to push high-pressure sales tactics. Instead, personalization should focus on improving the viewer experience - like slowing down explanations or offering extra FAQs when confusion is detected.

Automated decisions with major consequences - such as restricting access, flagging content, or adjusting prices - should never rely solely on emotion estimates given their limitations. If emotion recognition is used for monetization strategies, such as testing thumbnails or offers based on collective emotional responses, this must be transparently disclosed, with opt-out options available. Systems should avoid creating detailed emotion profiles that persist across streams unless users explicitly opt in.

For AI Twins, ethical design must balance creator rights with viewer expectations. Creators should have clear control over where and how their AI Twin is used, how emotion signals guide its behavior, and whether analytics from Twin-hosted sessions can be shared with brand partners. Viewers, in turn, should always know when they’re interacting with an AI Twin and whether it’s using emotion recognition to adjust its tone, pacing, or product highlights.

Because AI Twins can operate continuously, platforms should limit prolonged emotion tracking. Focus on session-level insights rather than long-term emotional patterns. Policies should also differentiate between fully automated responses and those that merely suggest actions to human moderators or creators. In high-stakes scenarios, human oversight is always the safer choice.
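The distinction between fully automated responses and suggestions can be enforced with an explicit policy table consulted before any emotion-driven action runs. The action names and their assignments below are hypothetical; unknown actions fall back to human review rather than automation.

```python
from enum import Enum


class Mode(Enum):
    AUTOMATIC = "automatic"  # low-stakes and easily reversed
    SUGGEST = "suggest"      # shown to a creator or moderator, who decides
    FORBIDDEN = "forbidden"  # never driven by emotion estimates


# Hypothetical policy table.
POLICY = {
    "show_faq_overlay": Mode.AUTOMATIC,
    "slow_down_explanation": Mode.AUTOMATIC,
    "pin_product_card": Mode.AUTOMATIC,
    "flag_content": Mode.SUGGEST,
    "restrict_access": Mode.SUGGEST,
    "adjust_price": Mode.SUGGEST,
    "urgency_offer_on_anxiety": Mode.FORBIDDEN,
}


def dispatch(actions: list) -> dict:
    """Group proposed actions by how they may be carried out."""
    routed = {mode: [] for mode in Mode}
    for action in actions:
        routed[POLICY.get(action, Mode.SUGGEST)].append(action)
    return routed
```

Defaulting unknown actions to `SUGGEST` means a newly added behavior needs a deliberate policy decision before it can run unattended.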

Regulators and advocacy groups, including the FTC, the ACLU, and the EFF, have raised concerns about emotion AI in areas such as employment, education, and policing. These concerns underscore the importance of context-specific deployment and strong governance. Ethical live streaming should include independent audits, clear documentation of use cases and limitations, and user-facing explanations of how emotion data is used - and what it is not used for.

Conclusion and Future Outlook

AI emotion recognition is changing the way brands and creators connect with audiences during live streams. By analyzing real-time data like facial expressions, vocal tones, and chat sentiment, these systems allow hosts to adjust their content, timing, and even offers to align with viewers' moods. This creates a more personalized, data-driven experience, where brands can swiftly act on audience reactions, setting a new standard for live shopping engagement.

Today's deep learning systems are already fast enough for real-time use, although accuracy remains imperfect (around 71% across seven categories in the ResNet18 example). Even so, they can drive dynamic interactions such as automated chat responses, on-the-fly camera adjustments, and timely on-screen displays when positive sentiment spikes.

Looking ahead, the majority of streamers believe virtual co-hosts will become the norm within the next five years. At the forefront of this shift are AI Twins - digital avatars modeled after real creators. Platforms like TwinTone make it possible for creators to transform their likeness into these AI Twins, which can host branded streams 24/7 in over 40 languages. These avatars not only showcase products and user-generated content around the clock but also enable creators to earn passive income even when they’re offline.

Early adopters are already leveraging proprietary datasets that connect emotional responses to specific products, messages, and creators. This data feeds into automated strategies, such as deploying live support when frustration rises, triggering limited-time offers during moments of high enthusiasm, or displaying reviews when skepticism emerges. Over time, this creates an emotional feedback loop that helps refine everything from product development to creative strategies and customer experiences.

The future of emotion analytics lies in advanced multimodal models that combine facial, vocal, and textual data, improving accuracy across various settings and demographics. Hybrid formats may also emerge, blending human creators for key moments with emotion-aware AI Twins managing routine demonstrations and shoppable streams.

Beyond live shopping, emotion recognition opens doors to exciting possibilities. It could shape more engaging storylines, adaptive learning content, and entertainment formats where plotlines shift based on audience mood. Creators could even license their AI Twin personas to multiple brands, unlocking new income streams while maintaining control over their personal brand identity.

To thrive in this evolving space, teams will need to track both emotional metrics (such as positivity spikes or confusion levels) and business outcomes (such as engagement time, add-to-cart rates, or revenue per viewer). A/B testing helps brands fine-tune their messaging by linking stages of the emotional journey (curiosity, excitement, reassurance) to measurable outcomes such as purchases, engagement, and sales.
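Whether one message variant actually outperforms another can be checked with a standard two-proportion z-test on conversion counts. A sketch with made-up add-to-cart numbers; it assumes independent viewers, reasonably large samples, and a test planned in advance (repeatedly checking results mid-test inflates false positives).

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple:
    """Compare two conversion rates; returns (z statistic, two-sided p-value)."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / se
    # Two-sided p-value for a standard normal: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2))
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Variant A vs. B: two offer messages shown at moments of high excitement (made-up counts).
z, p = two_proportion_z_test(120, 1000, 150, 1000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

With these numbers, p lands just under 0.05, a borderline result that argues for collecting more data before declaring a winner.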

TwinTone reports more than 20,000 creators and over 1 billion views, which it cites as evidence that emotion-aware AI Twins can scale personalized engagement while driving meaningful connections and measurable sales. As this technology advances, brands that pair ethical practices with thoughtful implementation will lead the next era of social commerce, where every live stream evolves, adapts, and converts seamlessly.

FAQs

How does AI emotion recognition maintain accuracy for diverse audiences during live streams?

AI emotion recognition relies on machine learning models trained on large datasets, and how accurate it is across audiences depends heavily on how diverse those datasets are in demographics, facial expressions, and recording conditions. Broad training data improves the odds of reading emotional cues correctly across groups, but it does not guarantee it: models still tend to be less reliable for underrepresented groups and for negative or mixed emotions.

In practice, teams maintain accuracy by evaluating performance separately for each demographic subgroup, retraining on more representative data when gaps appear, combining facial, vocal, and text signals, and treating outputs as probabilities with confidence scores rather than definitive labels. Human oversight remains important for any high-stakes decision.

What ethical issues should brands consider when using AI for emotion recognition in live streams?

When leveraging AI-powered emotion recognition during live streaming, brands need to focus on three key aspects: privacy, consent, and fairness. Viewers should always be informed about how their data is collected and used. Transparency is non-negotiable, and obtaining explicit consent is a must.

Brands also have a responsibility to tackle potential biases in AI systems. This helps prevent unfair outcomes and promotes inclusivity in their applications. On top of that, securing personal data with strong protection measures is crucial for building trust and ensuring user privacy remains intact.

How do AI Twins improve engagement and personalize live streams while protecting viewer privacy?

AI Twins transform live streaming by delivering interactive, real-time experiences that keep audiences engaged. These AI-driven hosts can run branded streams, respond to viewer questions, and display products around the clock, providing a tailored and engaging experience for viewers.

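AI Twins can also protect viewer privacy when they are designed to respond to aggregated, session-level signals rather than personal data. With explicit consent, pseudonymized session IDs, and short retention for granular data, they can personalize interactions without building profiles of individual viewers, which helps build trust.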