How Adaptive AI Learns New Harassment Tactics

Online harassment is evolving, and bad actors constantly find new ways to bypass detection systems. Traditional tools often fail to catch subtle, context-dependent abuse like gaslighting or manipulative vocal tones. Enter adaptive AI: systems designed to identify these evolving patterns in real time by analyzing live data across text, audio, and video.

Key points:

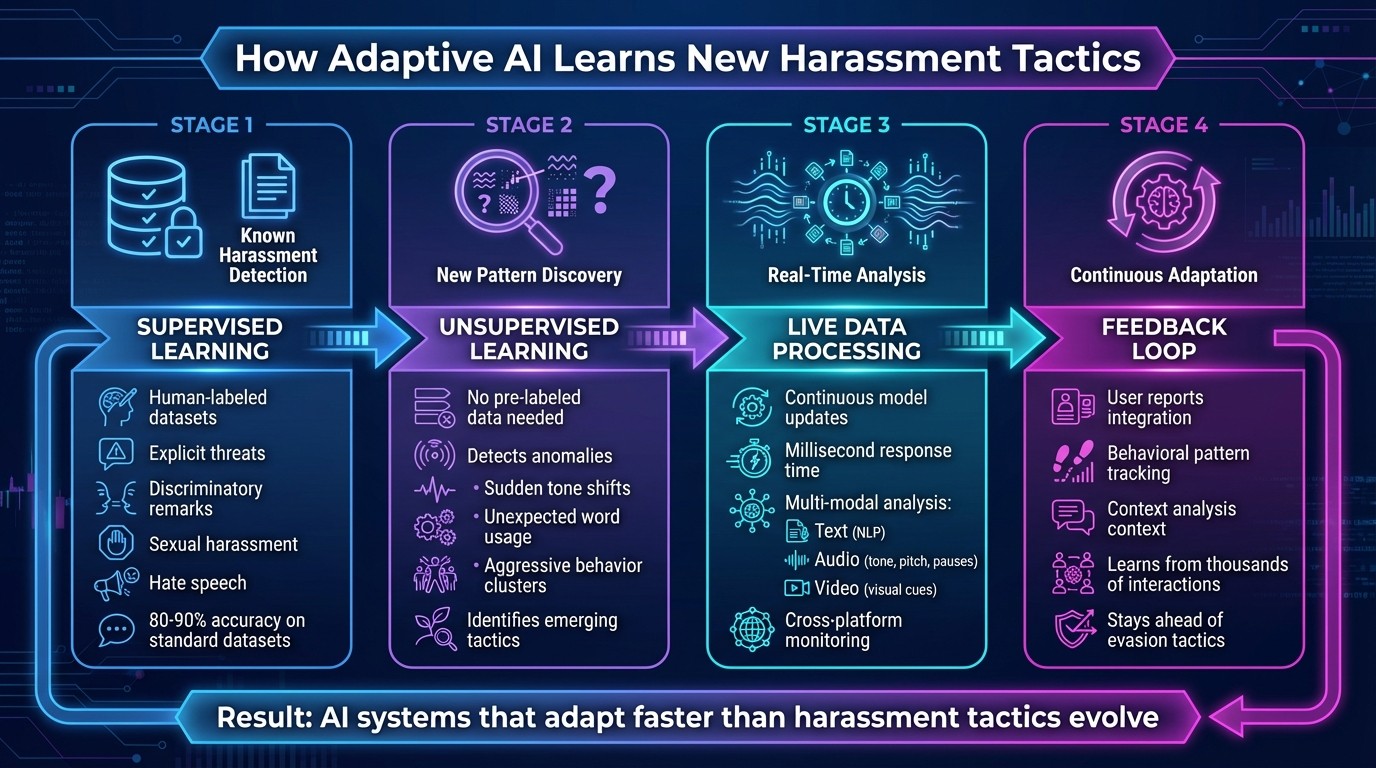

How it works: Combines supervised learning (for known harassment) with unsupervised learning (to detect new tactics) and updates models continuously.

What it detects: Subtle behaviors like guilt-inducing language, tone changes, or coded phrases.

Challenges: Context matters - phrases like "You're crazy" can mean different things depending on tone and setting.

Solutions: Advanced tools analyze entire conversations, cross-platform behaviors, and even live interactions to identify harassment before it escalates.

For example, TwinTone's AI-powered system protects creators in live social commerce by flagging harmful content in milliseconds, managing risks, and ensuring a safer environment for creators and audiences alike. Adaptive AI is reshaping how platforms handle harassment, focusing on speed and precision to stay ahead of evolving tactics.

How AI Systems Learn to Identify New Harassment Methods

AI systems use a mix of supervised and unsupervised learning approaches to tackle harassment. By combining these methods, they not only address known forms of abuse but also adapt to new and evolving tactics over time.

Supervised and Unsupervised Learning Basics

Supervised learning relies on datasets that are carefully labeled by human annotators. These datasets include examples of harassment, such as explicit threats, discriminatory remarks, sexual harassment, insults, and hate speech. Using natural language processing (NLP) algorithms, these models learn to recognize abuse patterns. For instance, workplace AI tools may scan emails or chats for aggressive language or signs of social exclusion.

On the other hand, unsupervised learning doesn't depend on pre-labeled data. Instead, it identifies unusual patterns or anomalies in communication. This could include sudden shifts in tone, the use of unexpected words, or clusters of aggressive behavior.

By integrating both approaches, AI systems strike a balance: supervised learning detects known harassment types, while unsupervised learning spots emerging tactics. A 2022 study in Frontiers in Artificial Intelligence highlighted that AI systems designed to detect cyberbullying often achieve 80–90% accuracy on standard datasets. However, their performance can drop when faced with new slang or unfamiliar platforms, emphasizing the importance of continuous learning.
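
As a rough illustration of how the two signals can sit side by side, here is a minimal Python sketch. It is not taken from any particular product: the tiny labeled set, the function names, and the 0.6 novelty threshold are all hypothetical, and a simple out-of-vocabulary ratio stands in for a real unsupervised anomaly detector.

```python
import math
from collections import Counter

# Hypothetical labeled examples (1 = harassment, 0 = benign); illustrative only.
LABELED = [
    ("you are worthless and stupid", 1),
    ("nobody wants you here, leave", 1),
    ("thanks for the great stream", 0),
    ("love this product, great demo", 0),
]

def tokenize(text):
    return text.lower().replace(",", " ").split()

def train_supervised(examples):
    """Learn a per-token log-odds weight (Naive Bayes style, add-one smoothing)."""
    pos, neg = Counter(), Counter()
    for text, label in examples:
        (pos if label else neg).update(tokenize(text))
    vocab = set(pos) | set(neg)
    pos_total = sum(pos.values()) + len(vocab)
    neg_total = sum(neg.values()) + len(vocab)
    return {
        tok: math.log((pos[tok] + 1) / pos_total) - math.log((neg[tok] + 1) / neg_total)
        for tok in vocab
    }

def supervised_score(weights, text):
    """Sum of log-odds over known tokens; above 0 leans toward harassment."""
    return sum(weights.get(tok, 0.0) for tok in tokenize(text))

def novelty_score(weights, text):
    """Label-free signal: share of tokens never seen during training."""
    tokens = tokenize(text)
    if not tokens:
        return 0.0
    return sum(tok not in weights for tok in tokens) / len(tokens)

def triage(weights, text, novelty_threshold=0.6):
    """Known patterns are flagged directly; unfamiliar language goes to review."""
    if supervised_score(weights, text) > 0:
        return "known_pattern"
    if novelty_score(weights, text) >= novelty_threshold:
        return "novel_review"
    return "ok"

weights = train_supervised(LABELED)
print(triage(weights, "you are stupid"))
print(triage(weights, "ur such a clwn lol"))
```

The point of the design is the routing: the supervised score handles what annotators have already labeled, while unfamiliar vocabulary is sent to human review rather than being silently passed or blocked.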

Live Data Processing and Algorithm Updates

Static models can quickly fall behind as abusive behaviors evolve. To stay effective, adaptive AI systems use live data processing, which allows them to update in real time. By analyzing ongoing communication streams, these systems refine their models continuously, ensuring they remain relevant.

For example, AI systems monitor chats and social media feeds in real time, flagging abusive content immediately rather than waiting for batch processing. Feedback loops play a critical role here. User reports and moderator corrections feed back into the system, helping it learn from thousands of interactions. This ongoing process allows the AI to keep up with shifts in tactics, such as the use of new gaslighting phrases or coded language. As one study noted, this approach directly addresses "the evolving nature of abusive and hate speech online."
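
The feedback loop described above can be sketched as an online learner that takes one small update per correction instead of waiting for a full retraining run. This is a minimal sketch under stated assumptions: the class name, the bag-of-words features, and the learning rate are hypothetical, and a production system would use a far richer model.

```python
import math

class OnlineAbuseModel:
    """Tiny online logistic regression over bag-of-words features."""

    def __init__(self, learning_rate=0.5):
        self.weights = {}
        self.bias = 0.0
        self.learning_rate = learning_rate

    def _features(self, text):
        return text.lower().split()

    def predict_proba(self, text):
        """Estimated probability that the message is abusive."""
        z = self.bias + sum(self.weights.get(t, 0.0) for t in self._features(text))
        return 1.0 / (1.0 + math.exp(-z))

    def learn(self, text, is_abusive):
        """Apply one gradient step from a single user report or moderator correction."""
        error = (1.0 if is_abusive else 0.0) - self.predict_proba(text)
        step = self.learning_rate * error
        for tok in self._features(text):
            self.weights[tok] = self.weights.get(tok, 0.0) + step
        self.bias += step

model = OnlineAbuseModel()
before = model.predict_proba("you are imagining things again")

# Simulated feedback stream: each report nudges the model immediately.
for _ in range(20):
    model.learn("you are imagining things again", True)
    model.learn("thanks for joining the stream", False)

after = model.predict_proba("you are imagining things again")
print(before, after)
```

Because every correction is applied as soon as it arrives, a newly reported phrase starts influencing scores right away, which is the property the paragraph above relies on.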

To further enhance detection, multi-modal analysis is often employed. This combines real-time NLP for text analysis, voice tone analysis to detect changes in pitch or intentional pauses, and anomaly detection to identify unusual behaviors across platforms. This integrated approach ensures that the AI can respond to harassment as it happens while also anticipating new methods, staying ahead of those trying to evade detection.

Methods for Detecting Changing Harassment Patterns

Building on real-time data processing, modern AI technologies are increasingly focused on identifying subtle and evolving harassment patterns. By combining advanced behavioral analysis with cross-platform monitoring, these systems are better equipped to address the complexities of online abuse.

Behavioral and Context-Based Pattern Detection

Instead of flagging individual offensive messages, AI systems now analyze entire conversation histories to uncover patterns of harassment. This includes identifying repeated cycles of abuse, such as consistent targeting, exclusion from groups, or escalating aggression.

A key component of this approach is anomaly detection. The AI establishes a baseline for each user's typical communication style - covering tone, vocabulary, and response timing. When a user suddenly shifts to controlling language or invalidating remarks, or shows unusual delays in emotionally charged conversations, the system flags these deviations as potential harassment. This method allows AI to spot new tactics that might bypass traditional keyword-based filters.
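
One simple way to picture a per-user baseline is a running mean and variance for a single numeric feature, such as response delay in seconds, with a z-score test for deviations. The sketch below uses Welford's online algorithm; the feature choice, the threshold of 3 standard deviations, and the minimum history of 5 observations are assumptions made for illustration.

```python
import math

class UserBaseline:
    """Running mean and variance of one numeric feature (Welford's algorithm)."""

    def __init__(self):
        self.count = 0
        self.mean = 0.0
        self._m2 = 0.0  # running sum of squared deviations from the mean

    def update(self, value):
        self.count += 1
        delta = value - self.mean
        self.mean += delta / self.count
        self._m2 += delta * (value - self.mean)

    def zscore(self, value):
        """Distance from the baseline in sample standard deviations."""
        if self.count < 2:
            return 0.0
        std = math.sqrt(self._m2 / (self.count - 1))
        if std == 0.0:
            return 0.0  # no spread observed yet; a real system needs a fallback here
        return (value - self.mean) / std

def is_deviation(baseline, value, threshold=3.0, min_history=5):
    """Flag only once enough history exists to trust the baseline."""
    return baseline.count >= min_history and abs(baseline.zscore(value)) > threshold

# Hypothetical response delays (seconds) for one user.
baseline = UserBaseline()
for delay in [2.0, 3.0, 2.5, 3.5, 2.0, 3.0]:
    baseline.update(delay)

print(is_deviation(baseline, 3.0))
print(is_deviation(baseline, 45.0))
```

A deviation on its own is not proof of harassment. In the approach described above it is a signal to combine with language and tone cues before anything is flagged.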

To enhance accuracy, these systems also integrate NLP and voice tone analysis. They look for cues like guilt-inducing language, condescending tones, dismissive responses, or strategic pauses - all of which can signal manipulation. By focusing on context, these tools address gaps that simple keyword detection often overlooks. Additionally, by incorporating cross-platform data, AI can identify harassment patterns across the platforms it monitors.

Cross-Platform Analysis of Text, Audio, and Video

Harassment today often spans multiple formats and platforms, making it essential for AI to operate across text, audio, and video. For text, NLP helps detect abusive language; for audio, systems analyze pitch, tone, and pauses; and for video, deep neural networks flag harmful visual cues.

This multimodal approach is especially effective for managing live content. With stream-based machine learning, AI processes data incrementally and in real time, flagging harmful content before it reaches viewers. Beyond this, the system connects patterns across platforms, identifying repeat offenders who might use hostile tones in voice messages or visual cues in videos, even when their text appears neutral. By combining these capabilities with behavioral detection, AI systems are now better prepared for proactive content moderation in real time.
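
To show why a neutral-looking text score should not cancel out a hostile voice or video signal, here is a minimal late-fusion sketch. It assumes each modality already produces a score between 0 and 1 from its own model, and it combines them with a noisy-OR rule. Both the rule and the names are illustrative choices, not a description of any specific platform.

```python
from dataclasses import dataclass

@dataclass
class ModalityScores:
    """Per-modality harm scores in [0, 1], produced by separate upstream models."""
    text: float = 0.0
    audio: float = 0.0
    video: float = 0.0

def fuse(scores):
    """Noisy-OR late fusion: 1 - product of (1 - score) across modalities.

    A high score in any single modality keeps the combined score high,
    so neutral text cannot mask a hostile tone of voice.
    """
    remaining = 1.0
    for score in (scores.text, scores.audio, scores.video):
        if not 0.0 <= score <= 1.0:
            raise ValueError("scores must be in [0, 1]")
        remaining *= 1.0 - score
    return 1.0 - remaining

# Neutral text, hostile tone: the fused score stays high.
print(fuse(ModalityScores(text=0.1, audio=0.8)))
```

Noisy-OR treats the modalities as independent, which is a simplification. A learned fusion layer is a common alternative when labeled multimodal data is available.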

Obstacles in Identifying New Harassment Approaches

Even with advancements in behavioral analysis and cross-platform monitoring, AI systems still struggle to detect evolving forms of harassment. These challenges arise from the complexity of human communication and the persistent ingenuity of those aiming to evade detection.

Context-Dependent Meaning and Interpretation

Understanding the meaning of a phrase often requires considering its context, the speaker's intent, and the conversation's history. For example, a phrase like "You're crazy" might be playful in one scenario but manipulative in another, such as gaslighting. Similarly, "Nice job" could be either genuine praise or a sarcastic, passive-aggressive jab, depending on the setting - like a performance review versus a public comment after a mistake.

This issue isn't limited to text. The tone of delivery can completely change the meaning of neutral words. A seemingly caring comment, when said with a condescending tone, might signal manipulation instead of concern. The problem becomes even more complicated when factoring in cultural and community differences. For instance, words that are offensive in one group might be neutral - or even reclaimed and empowering - in another. U.S.-based platforms serving diverse audiences must account for regional slang, dialects like African American Vernacular English (AAVE), and community-specific norms to avoid unfairly targeting marginalized groups while still addressing genuine harassment.

To handle these nuances, AI systems now analyze entire conversations rather than isolated messages. They track emotional patterns across interactions, making it easier to identify repeated dismissals of someone's feelings - a common sign of emotional abuse. Additionally, large language models can explain why certain content might be harmful, giving moderation teams more context and reasoning instead of just flagging content as acceptable or not. However, these advancements also push harassers to evolve their tactics further.

How Harassers Avoid Detection Systems

Harassers exploit these contextual challenges by constantly refining their methods to slip past detection. Common strategies include using obfuscated slurs with leetspeak, intentional misspellings, or emoji substitutions. They also rely on euphemisms and coded language that carry hateful meanings only understood by insiders. Dog whistles and memes are another tactic - seemingly harmless images or emoji sequences that abusive subcultures recognize as threats. When facing detection, manipulators may switch to neutral language but rely on delivery methods, like a condescending tone or feigned sympathy, to convey hostility.

On platforms like social commerce or creator-focused spaces, harassment can take more subtle forms. For example, bad actors may embed hateful messages in product reviews, comments during livestream shopping, or reaction videos targeting specific creators. They also use strategies like flooding or brigading, where many low-intensity messages are coordinated to overwhelm targets. Individually, these messages may seem borderline and avoid violating explicit policies, but together, they create a hostile environment.
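
Brigading is hard to catch message by message, but it shows up clearly in aggregate. The sketch below is one hedged way to express that idea: it counts how many distinct senders post borderline-toxicity messages at the same target within a sliding time window. The class name, the 60-second window, the five-sender minimum, and the borderline score band are all hypothetical parameters.

```python
from collections import deque

class BrigadeDetector:
    """Flags bursts of borderline messages from many distinct senders."""

    def __init__(self, window_seconds=60.0, min_senders=5, borderline=(0.3, 0.7)):
        self.window_seconds = window_seconds
        self.min_senders = min_senders
        self.borderline = borderline
        self._events = deque()  # (timestamp, sender_id), oldest first

    def observe(self, timestamp, sender_id, toxicity):
        """Record one message; return True if the window looks coordinated."""
        low, high = self.borderline
        if low <= toxicity < high:
            self._events.append((timestamp, sender_id))
        # Evict events that have fallen out of the sliding window.
        while self._events and timestamp - self._events[0][0] > self.window_seconds:
            self._events.popleft()
        distinct_senders = {sender for _, sender in self._events}
        return len(distinct_senders) >= self.min_senders

detector = BrigadeDetector()
# Five different accounts post borderline comments within five seconds.
flags = [detector.observe(t, f"user{t}", 0.5) for t in range(5)]
print(flags)
```

Counting distinct senders rather than raw messages is deliberate: one persistent user is a different problem from a coordinated group, and the two usually call for different responses.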

AI systems combat these tactics with advanced tools like character-level and subword models, which can identify patterns in creatively misspelled slurs (e.g., "i d ! 0 t") that traditional filters might miss. Embedding clustering helps detect when new terms are used in contexts similar to known slurs, enabling the discovery of emerging codes without direct supervision. Stream-based and incremental learning pipelines allow models to adapt quickly to new patterns in real-time traffic, avoiding the delays of periodic retraining. These measures aim to stay one step ahead of evolving harassment strategies.
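
Learned character-level models are beyond the scope of a short example, but the underlying intuition can be shown with a much simpler, rule-based stand-in: normalize obfuscated text at the character level before matching it against a lexicon. The substitution table, the separator set, and the one-word blocklist below are hypothetical and far from complete.

```python
import re

# Hypothetical, incomplete substitution table for common character swaps.
CHAR_MAP = str.maketrans({
    "0": "o", "1": "i", "3": "e", "4": "a",
    "@": "a", "5": "s", "$": "s", "7": "t",
})
CHAR = r"[\w!@$]"
SEP = r"[\s._\-*]+"
# Three or more single characters split by separators, e.g. "i d ! 0 t".
SPACED_WORD = re.compile(rf"(?<!\w)(?:{CHAR}{SEP}){{2,}}{CHAR}(?!\w)")

BLOCKLIST = {"idiot"}  # illustrative lexicon only

def normalize(text):
    """Return a matching-only form of the text; never shown to users."""
    text = text.lower()
    # Rejoin characters that were spaced out to dodge word-level filters.
    text = SPACED_WORD.sub(lambda m: re.sub(SEP, "", m.group(0)), text)
    # Treat "!" as "i" only inside a word, so ordinary end punctuation survives.
    text = re.sub(r"!(?=\w)", "i", text)
    return text.translate(CHAR_MAP)

def contains_blocked(text):
    tokens = re.findall(r"[a-z]+", normalize(text))
    return any(tok in BLOCKLIST for tok in tokens)

print(normalize("i d ! 0 t"))
print(contains_blocked("you are an 1d10t"))
print(contains_blocked("nice demo today!"))
```

Hand-written rules like these are brittle, which is exactly why the paragraph above points to character-level and subword models: they learn such variations from data instead of relying on a fixed table.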

TwinTone's AI-Powered Creator Protection System

TwinTone has developed a cutting-edge protection system tailored for live social commerce, addressing the growing reliance on AI-generated content and livestreams in this space. With the rise of these formats, platforms need systems that can operate at the same rapid pace and scale as the content they host. TwinTone steps in by creating AI Twins - digital representations of real creators that can host livestreams and generate shoppable videos 24/7. These AI Twins ensure constant, real-time monitoring of interactions, keeping both creators and their audiences safe.

AI Twins and Live Content Monitoring

When AI Twins host livestreams, TwinTone’s system uses stream-based detection models to analyze chat messages and viewer behaviors in real time - processing interactions in mere milliseconds. It evaluates the context of messages, identifies repeat offenders, and distinguishes harmless jokes from harmful behavior. If abusive content spikes, the system immediately flags it for review.

The response framework is tiered based on the severity of the flagged content. Mild or borderline comments might be soft-filtered or sent for manual review, while more aggressive behavior - like repeated insults or coded slurs - can result in auto-hidden messages, temporary mutes, or restricted access to shopping features. For extreme cases, such as explicit hate speech or credible threats, the system enforces bans and notifies moderators. By learning from each incident and incorporating creator feedback, TwinTone continuously adapts to new forms of harassment without needing lengthy retraining cycles. This dynamic approach not only protects creators but also ensures a smoother, safer shopping experience for viewers and brands alike.
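
The tiering logic can be pictured as a mapping from a severity score to an action. The sketch below is illustrative only and is not TwinTone's implementation: the numeric thresholds, the repeat-offender adjustment, and all names are assumptions chosen to mirror the tiers described above.

```python
from enum import Enum

class Action(Enum):
    ALLOW = "allow"
    SOFT_FILTER = "soft_filter_or_manual_review"
    HIDE_AND_MUTE = "auto_hide_and_temporary_mute"
    BAN_AND_NOTIFY = "ban_and_notify_moderators"

def choose_action(severity, prior_offenses=0):
    """Map a severity score in [0, 1] to a response tier.

    Thresholds are hypothetical. Each prior offense raises the effective
    severity by 0.1, so repeat offenders escalate faster.
    """
    if not 0.0 <= severity <= 1.0:
        raise ValueError("severity must be in [0, 1]")
    effective = min(1.0, severity + 0.1 * prior_offenses)
    if effective < 0.3:
        return Action.ALLOW
    if effective < 0.6:
        return Action.SOFT_FILTER
    if effective < 0.9:
        return Action.HIDE_AND_MUTE
    return Action.BAN_AND_NOTIFY

print(choose_action(0.45))
print(choose_action(0.55, prior_offenses=2))
print(choose_action(0.95))
```

Keeping the policy in one small function makes the thresholds easy to audit and tune, separately from whichever model produces the severity score.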

Safeguarding Social Commerce Through AI

TwinTone’s real-time behavioral analysis tools play a pivotal role in safeguarding social commerce. By proactively filtering out harassment, the system preserves a positive environment for live shopping events and shoppable content. This creates a ripple effect - brands see higher engagement, longer viewer watch times, improved click-through rates, and reduced customer drop-off. Studies have shown that when users feel safe, they’re more likely to stay engaged and less likely to abandon the experience due to toxic interactions.

The system doesn’t stop at live streams. It also scans content both before and after publication to catch potential issues. Before AI-generated content in the style of user-generated content (UGC) goes live, the system reviews scripts, captions, and overlays for language that could spark controversy, suggesting safer alternatives to maintain audience engagement. Once content is live, it continues monitoring comments and reactions, identifying harassment campaigns early. This allows brands to take swift action - whether that’s disabling comments, adjusting audience targeting, or regenerating content to maintain performance while minimizing risk.

For U.S.-based businesses, these safeguards mean fewer escalations to legal or PR teams and a stronger alignment with platform safety standards. At the same time, the system tracks key metrics like harassment rates and response times, ensuring brands can measure the impact of their safety efforts while maintaining a positive, secure environment for creators and consumers.

Conclusion: What's Next for AI in Harassment Prevention

AI is stepping up its game in addressing harassment, using real-time pattern recognition that evolves alongside the tactics of those who harass. The next wave of AI systems will bring in stream-based learning, multimodal analysis, and outputs that explain why content is flagged. These upgrades will help detect harassment hidden in sarcasm, coded language, or even coordinated attacks - areas where traditional moderation often falls short. This is especially important for live and interactive platforms where quick response times are essential.

For platforms like social commerce, speed is everything. AI capable of processing in milliseconds can block harassment before it disrupts a sale or damages brand trust. Features like auto-adjusting engagement tools - such as switching to follower-only mode during attacks - can protect both creators and their revenue streams. These tools ensure that creators and businesses can operate without constant manual intervention.

In this space, TwinTone has introduced a powerful solution with its AI Twins. These AI Twins not only host content but also act as a protective barrier for creators. They use personalized risk profiles to handle minor harassment automatically, while escalating more serious threats to human moderators. This approach helps safeguard creators' mental well-being and frees up their time. For brands using live product demos or shoppable videos, this means cleaner comment sections, better conversion rates, and fewer legal risks - all without requiring constant human oversight.

As harassment tactics evolve - whether through new platforms, emerging content formats, or increasingly coded language - AI systems that share threat intelligence and rotate their detection strategies will make it harder for bad actors to game the system. The future of creator protection lies in AI that can adapt faster than these tactics, ensuring a safer and more profitable environment for social commerce.

FAQs

How does adaptive AI identify harmful language while ignoring harmless content?

Adaptive AI leverages advanced algorithms to interpret the context, tone, and intent behind language. It doesn’t just rely on static rules; instead, it continuously learns from new data, allowing it to spot subtle patterns and adjust to evolving harassment methods. This dynamic learning process helps it distinguish between casual, harmless expressions and language that could be harmful - improving its accuracy over time.

With regular updates, adaptive AI becomes better equipped to understand the complexities of communication. This ensures it can tackle inappropriate language effectively while keeping false positives to a minimum.

What challenges does AI face in identifying new harassment tactics?

AI encounters a range of hurdles in spotting new harassment tactics. These challenges include detecting subtle or shifting behaviors, keeping up with changes in language and context, and differentiating between harmful content and legitimate conversations.

On top of that, it needs to accomplish all this while reducing false positives and safeguarding user privacy. Since online interactions are constantly evolving, AI must continuously learn and adapt to tackle emerging forms of harassment effectively.

How does real-time data processing improve the detection of harassment tactics?

Real-time data processing enables AI to analyze live interactions instantly, making it possible to identify new harassment tactics as they arise. By constantly updating detection models, the AI can adapt quickly to new threats and evolving patterns of behavior.

This proactive method improves detection accuracy while helping to ensure that platforms remain safe and respectful spaces for everyone.